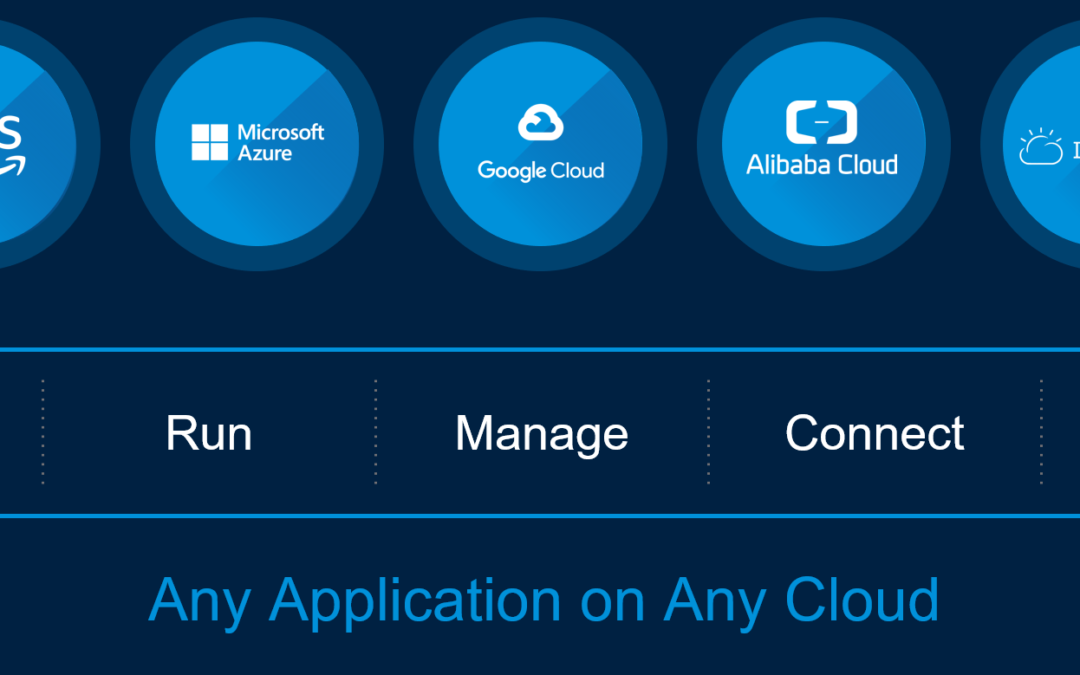

In my previous article, Cross-Cloud Mobility with VMware HCX, I briefly touched on VMware’s hybrid and multi-cloud vision and strategy. I mentioned that VMware comes from the on-premises world, compared with AWS, Azure or Google, but uses the same “consistent infrastructure with consistent operations” messaging. The difference is that VMware is not only hardware-agnostic but also cloud-agnostic. To abstract the technology format and infrastructure in the public cloud, their idea is to run VMware Cloud Foundation (VCF) everywhere: on-premises on top of any hardware, and in the cloud on the global infrastructure of any hyperscaler such as AWS, Azure (e.g. Azure VMware Solution), Google, Oracle, IBM or Alibaba. Or you can run your workloads in a VMware cloud provider’s cloud based on VCF. That’s the VMware multi-cloud.

The goal of this article is not to compare features of different vendors and products, but to give you a better idea of why multi-cloud is becoming a strategic priority for most enterprises and why VMware could be the right partner for your journey to the cloud.

To get started, let’s look at what the three big hyperscalers are doing when it comes to hybrid or multi-cloud.

Microsoft

To bring Azure services to your data center and to benefit from a hybrid cloud approach, you would probably go for Azure Stack to run virtualized applications on-premises. Microsoft’s goal is to build consistent experiences in the cloud and at the edge, even for scenarios where you have no internet connection. By VMware’s definition, this would be a typical hybrid cloud architecture.

Multi-cloud refers to the use of multiple public cloud service providers, whereas hybrid cloud describes the use of public cloud in conjunction with private cloud. In a hybrid cloud environment, specific applications leverage both the private and public clouds to operate. In a multi-cloud environment, two or more public cloud vendors provide a variety of cloud-based services to a business.
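The distinction above can be sketched in a few lines of Python. This is only an illustration of the two definitions, not vendor tooling: the `Environment` type, the `classify` function and the `"neither"` label are hypothetical names made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    """One place where workloads run (hypothetical model for illustration)."""
    name: str
    kind: str  # "private" (on-premises) or "public" (a public cloud provider)


def classify(environments):
    """Label a landscape using the definitions from the paragraph above."""
    public = {e.name for e in environments if e.kind == "public"}
    has_private = any(e.kind == "private" for e in environments)

    labels = []
    if has_private and public:
        labels.append("hybrid")       # private cloud combined with public cloud
    if len(public) >= 2:
        labels.append("multi-cloud")  # two or more public cloud providers
    return labels or ["neither"]


landscape = [
    Environment("vSphere on-premises", "private"),
    Environment("Google Cloud", "public"),
    Environment("Azure", "public"),
]
print(classify(landscape))  # ['hybrid', 'multi-cloud']
```

Note that the two labels are not mutually exclusive: a landscape with a private cloud and two public providers is both hybrid and multi-cloud.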

With the announcement of Azure Arc at MS Ignite 2019, Microsoft introduced a new product, which “simplifies complex and distributed environments across on-premises, edge and multi-cloud”. Besides the fact that you can run Azure data services anywhere, it gives you the possibility to govern and secure your Windows servers, Linux servers and Kubernetes (K8s) clusters across different clouds. Arc can also deploy and manage K8s applications consistently (from source control).

You could summarize it like this: Microsoft is bringing Azure infrastructure and services to any infrastructure. It’s not necessary to understand the technical details of Azure Stack and Azure Arc; the messaging and the strategy are more important. It’s about managing and securing Windows/Linux servers, virtual machines and K8s clusters everywhere with Azure Resource Manager (ARM). Arc ensures that the right configurations and policies are in place to fulfill governance requirements across clouds. Run your workloads where you need them and where it makes sense, even if it isn’t Azure.

Google Anthos

Google contributed its own implementation of containers to the Linux kernel around 2006 or 2007. It was called cgroups, which stands for control groups. Docker appeared in 2013 and provided some nice tooling for containers. Over the following years, microservices were used more and more often to split monoliths into separate pieces and services. Because of the growing number of containers, Google saw the need to make this technology easy to manage and orchestrate for everyone. That was six years ago, when they released Kubernetes.

By the way, two of the three Kubernetes founders, namely Joe Beda and Craig McLuckie, have been working for VMware since their company Heptio was acquired by VMware in November 2018.

Today, Kubernetes is the standard way to run containers at scale.

We know by now that the future is hybrid or even multi-cloud, and not public cloud only. Google realized that years ago as well. Besides that, a lot of enterprises have learned that moving to the cloud and re-engineering the whole application at the same time mostly fails. In other words, moving an application out of your on-premises data center, refactoring it at the same time and running it in the public cloud is not that easy.

Why isn’t it easy? Because you are re-engineering the whole application, have to take care of other application and network dependencies, have to think about security and governance, and have to train your staff to cope with all the new management consoles and processes.

Google’s answer and approach here is to modernize applications on-premises first and move them to the cloud after the modernization has happened. They say that you need a platform that runs in the cloud and in your data center, and that runs consistently across different environments: same technology, same tools and policies everywhere.

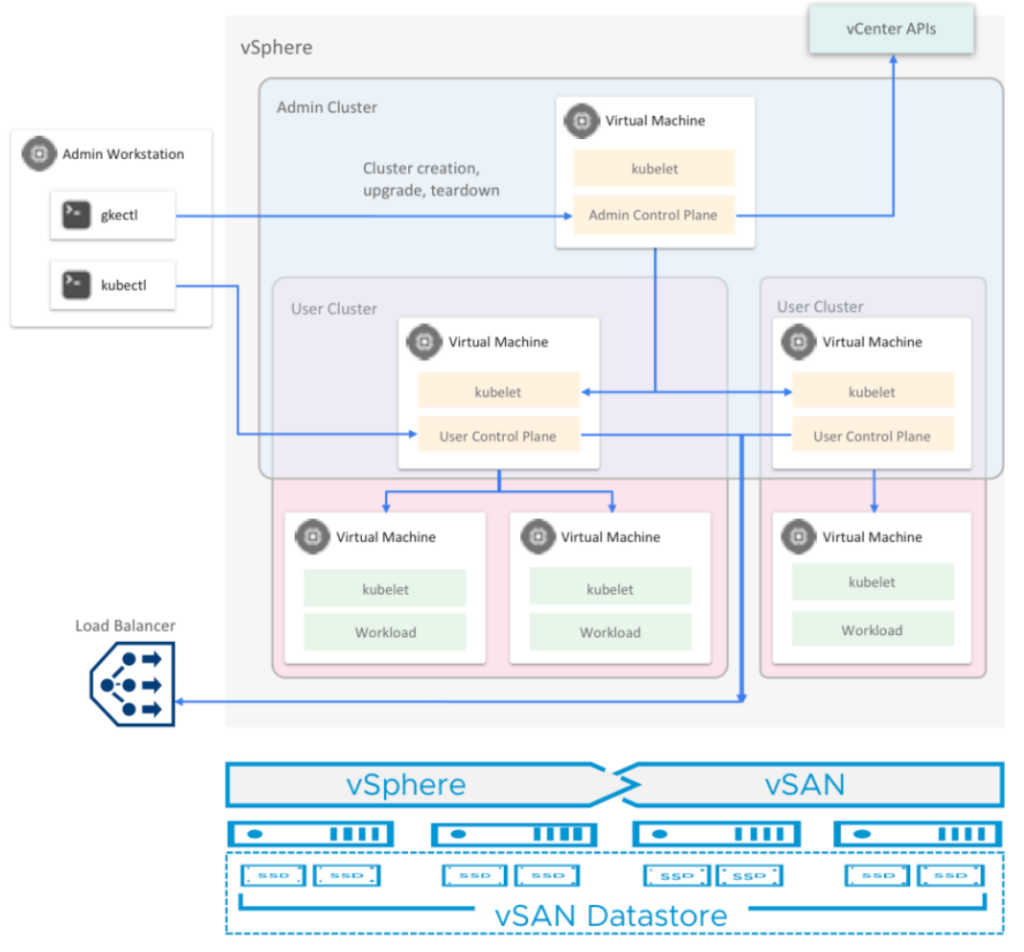

This platform is called Google Anthos. Anthos is 100% software-defined and (hardware) vendor-agnostic. To deliver their desired developer experience on-prem as well, they rely on VMware: on-prem, GKE runs on top of vSphere.

Amazon Web Services

The last solution I would like to mention is AWS Outposts, which is a fully managed service that extends their AWS infrastructure, services and tools to any data center for a “truly consistent hybrid experience”. What are the AWS services running on Outposts?

- Containers (EKS)

- Compute (EC2)

- Storage (EBS)

- Databases (Amazon RDS)

- Data Analytics (Amazon EMR)

- Different tools and APIs

AWS Outposts are delivered as an industry-standard 42U rack. The Outpost rack is 80 inches (203.2cm) tall, 24 inches (60.96cm) wide, and 48 inches (121.92cm) deep. Inside we have hosts, switches, a network patch panel, a power shelf, and blank panels. It has redundant active components including network switches and hot spare hosts.

If you visit the Outposts website, you’ll find the following information:

Coming soon in 2020, a VMware variant of AWS Outposts will be available. VMware Cloud on AWS Outposts delivers a fully managed VMware Software-Defined Data Center (SDDC) running on AWS Outposts infrastructure on premises.

VMC on AWS Outposts is for customers who want to use the same VMware software conventions and control plane that they have been using for years. It can be seen as an extension of the regular VMC on AWS offering, which is now made available on-premises (on top of the AWS Outposts infrastructure) for a hybrid approach.

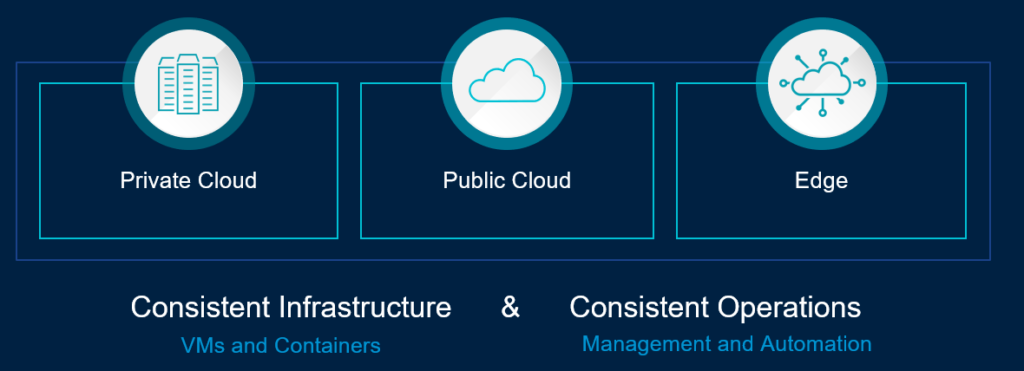

What do all these options have in common? It is always about consistent infrastructure with consistent operations: one platform in the cloud and on-premises, in your data center or at the edge. Most of today’s hybrid cloud strategies rest on the fact that migrations to the cloud are not easy and fail a lot, which also explains why we still have 90% of all workloads running on-premises. We are going to have many million more containers in the future, which need to be orchestrated with Kubernetes, but virtual machines are not going to disappear or be replaced tomorrow.

My conclusion here is that every hyperscaler sees cloud-native in our (near) future and wants to provide their services in the cloud and on-prem. That way, customers can build their new applications with a service-oriented architecture or partially modernize existing monoliths (big legacy applications) on the same technology stack.

All hyperscalers also mention that you have to take care of different management and security consoles, skill sets and tools in general. Apart from Microsoft with Azure Arc, nobody has a “real” multi-cloud solution or platform. I want to highlight that even Azure Arc only covers servers and Kubernetes clusters and takes care of governance.

Let’s assume you have a hybrid cloud setup in place. Your current project requirements tell you to develop new applications in the Google Cloud using GKE. That’s fine. Your current on-premises data centers run with VMware vSphere for virtualization. Tomorrow, you have to think about edge computing for specific use cases where AI and ML-based workloads are involved. Then you decide to go for Azure and create a hybrid architecture with Azure Stack and Arc. Now you are using two different public cloud providers, one with their specific hybrid cloud offering and also VMware vSphere on-premises.

What are you going to do now? How do you manage and secure all these different clouds and technologies? Or do you think about migrating all the application workloads from on-prem to GCP and Azure? Or do you start with Anthos now for other use cases and applications? Maybe you decide later to move away from VMware and evacuate the VMware-based private cloud to any hyperscaler? Is it even possible to do that? If yes, how long would this technology change and migration take?

Let’s assume for this exercise that this would be a feasible option with an acceptable timeframe. How are you going to manage the different servers, applications and dependencies, and secure everything at the same time? How can you manage and provision infrastructure in an easy and efficient way? What about cost control? What happens if you don’t see Azure as strategic anymore and want to move to AWS tomorrow? Then you figure out that cloud is more expensive than you thought, and you experience for yourself why only 10% of all workloads are running in the public cloud today.

I think people can pretty easily handle an infrastructure that runs VMware on-premises and has at most one public cloud, i.e. a hybrid cloud architecture. If we are talking about a greenfield scenario where you could start from scratch and choose AWS including AWS Outposts, because you think it’s best for you and matches all the requirements, go for it. You know what is right for you.

But I believe, and this is also what I see with larger customers, the current reality is hybrid and the future is multi-cloud.

VMware Multi-Cloud Strategy

And a multi-cloud environment is a totally different game to manage. What is the VMware multi-cloud strategy exactly and why is it different?

VMware’s approach is always to abstract complexity. This doesn’t mean that everything is getting less complex, but you will get the right platform and tooling to deal with this complexity.

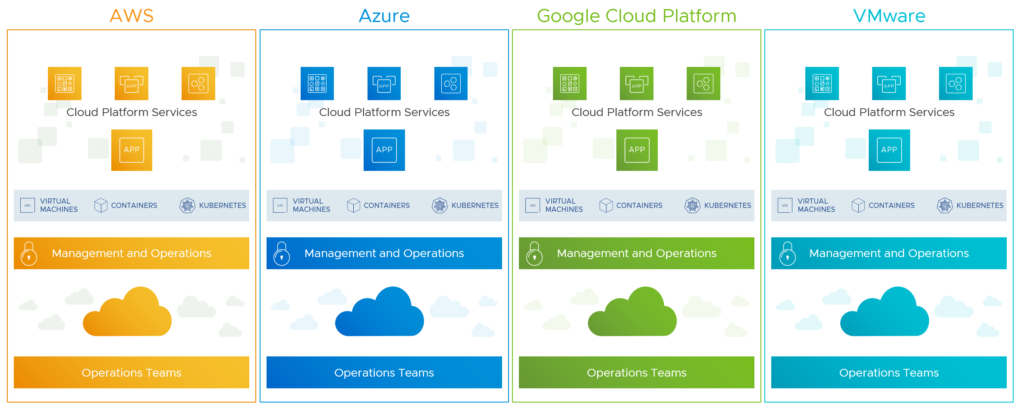

A decade ago, abstracting meant providing a hypervisor (vSphere) for any hardware (being vendor-agnostic). After that we had software-defined storage (vSAN), followed by software-defined networking (NSX). Besides these three major software pieces, we also have the vRealize suite, which is mainly known for products like vRealize Automation and vRealize Operations. The technology stack consisting of vSphere, vSAN, NSX, vRealize and some management components forms the software-defined data center and is called VMware Cloud Foundation. It is a technology stack that allows you to experience the ease of public cloud in your data center. Again, if wanted and required, you can run this stack on top of any hyperscaler like AWS, Azure, Google Cloud, Alibaba Cloud, Oracle Cloud or IBM.

It’s a platform which can deliver services as you would expect in the public cloud. The vRealize suite can help you to automatically provision virtual machines and containers including the right network and storage (on any vSphere-based cloud or cloud-native on AWS, GCP, Azure or Alibaba). Build your own templates or blueprints (Infrastructure as Code) to deliver services such as IaaS, DBaaS, CaaS, DaaS, FaaS, PaaS, SaaS and DRaaS, which can be ordered and consumed by your users or your IT. Put a price tag on any service or workload you deploy, and include your public cloud spending (e.g. with CloudHealth) in this calculation as well.

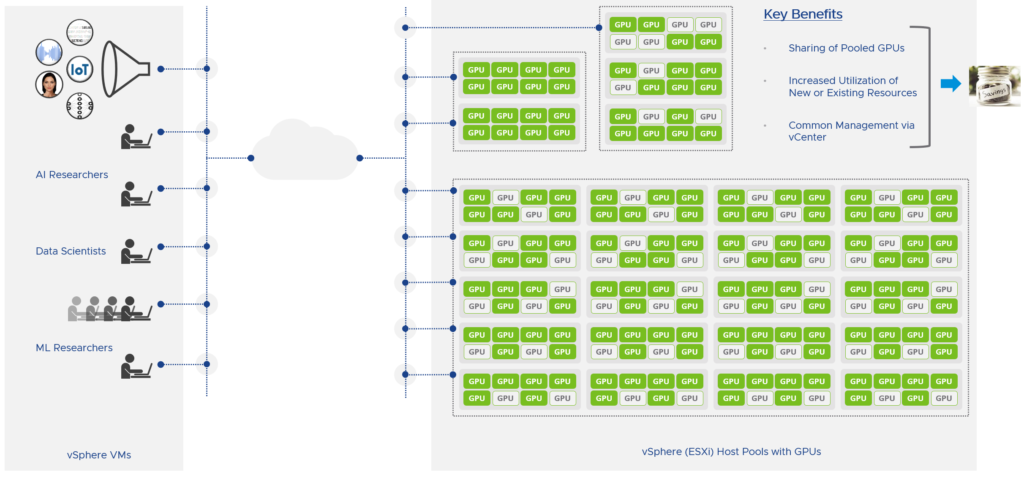

You want to deliver vGPU-enabled virtual machines or containers? That is also possible with vSphere. Modern AI/ML-based applications need compute acceleration to handle large and complex computation. vSphere Bitfusion allows you to access GPUs in a virtualized environment over the network (Ethernet). Bitfusion works across any cloud and environment and can be accessed from any workload from any network. This topic gets very interesting if we talk about edge computing, for example.

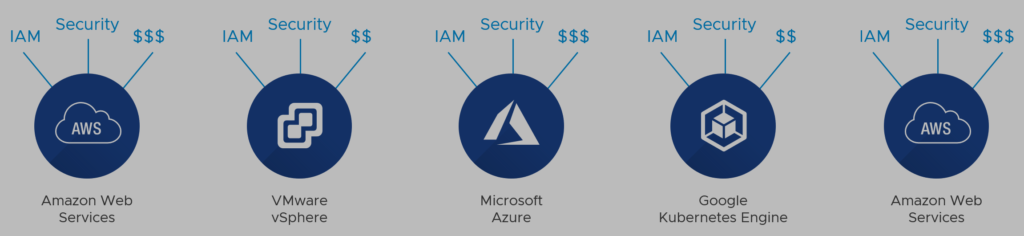

Modern applications obviously demand a modern infrastructure, one with a hybrid or multi-cloud architecture. With that, you are facing the challenge of maintaining control and visibility over a growing number of environments. In such a modern environment, how do you automate configuration and management? What about networking and security policies applied at a cluster level? How do you handle identity and access management (IAM)? Any clue about backup and restore? And what would be your approach for cost management in a multi-cloud world?

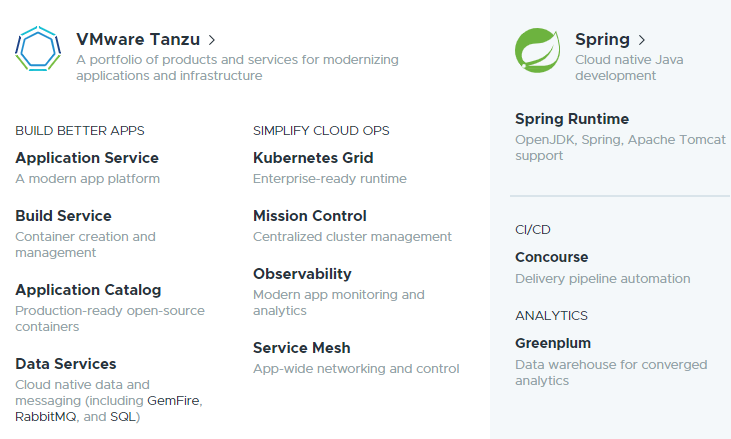

To improve the IT ops and developer experience, VMware announced the Tanzu portfolio, including something they call Tanzu Kubernetes Grid (TKG). The promise of TKG is to provide developers with consistent, on-demand access to infrastructure across clouds, and it is considered to be the enterprise-ready Kubernetes runtime.

Since vSphere 7, TKG has been embedded as a service into the control plane of vSphere 7 with Kubernetes. Finally, as Kubernetes is natively integrated into the hypervisor, we have a converged platform for VMs and containers. IT ops can now see and manage Kubernetes objects (e.g. pods) from the vSphere client, and developers use the Kubernetes APIs to access the SDDC infrastructure.

There are different ways to consume TKG besides “vSphere 7 with Kubernetes”. TKG is a consistent and upstream-compatible Kubernetes runtime with pre-integrated and validated components that also runs in any public cloud or edge environment.

If you have to run Kubernetes clusters natively on Azure, AWS, Google and on vSphere on-premises, how would you manage IAM, lifecycle, policies, visibility, compliance and security? How would you manage any new or existing clusters?

Here, VMware’s solution would be Tanzu Mission Control (TMC), a centralized management platform (operated by VMware as SaaS) for all your clusters in any cloud. TMC allows you to provision TKG workload clusters to your environment of choice and manage the lifecycle of each cluster. To date, the supported deployment targets are vSphere and AWS EC2 accounts. Deployment on Azure is coming very soon.

Existing Kubernetes clusters from any vendor such as EKS, AKS, GKE or OpenShift can be attached to TMC. As long as you are maintaining CNCF conformant clusters, you can attach them to TMC so that you can manage all of them centrally.
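The attach rule described above can be pictured as a simple filter over a cluster inventory. The following is a minimal sketch under stated assumptions: `Cluster`, `attachable` and the sample inventory are hypothetical names and data invented for illustration, and nothing here calls the real TMC API or CLI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    """Hypothetical inventory record; not a Tanzu Mission Control object."""
    name: str
    distribution: str      # e.g. "EKS", "AKS", "GKE", "OpenShift"
    cncf_conformant: bool  # assumed to be known from the vendor's certification


def attachable(clusters):
    """Return the clusters that meet the conformance condition described above."""
    return [c for c in clusters if c.cncf_conformant]


# Made-up sample inventory spanning several vendors.
inventory = [
    Cluster("shop-prod", "EKS", True),
    Cluster("analytics", "GKE", True),
    Cluster("lab-custom", "custom build", False),
]

for cluster in attachable(inventory):
    print(f"{cluster.name} ({cluster.distribution}) can be managed centrally")
```

The point of the sketch is that the vendor does not matter for central management, only conformance does.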

The Tanzu portfolio is much bigger and includes more than TKG and TMC, which only address the “where and how to run Kubernetes” and “how to deploy and manage Kubernetes”. Tanzu has other solutions like an application catalog, build service, application service (previously Pivotal Cloud Foundry) and observability (monitoring and metrics) for example.

These Tanzu products can be complemented with cloud-scale networking solutions like an application delivery controller (ADC) or software-defined WAN (SD-WAN). To deliver the “public cloud experience” to developers on any infrastructure, we need to provide agility. From an infrastructure perspective that is covered by VMware Cloud Foundation, and from an application or developer perspective we learned that Tanzu covers it.

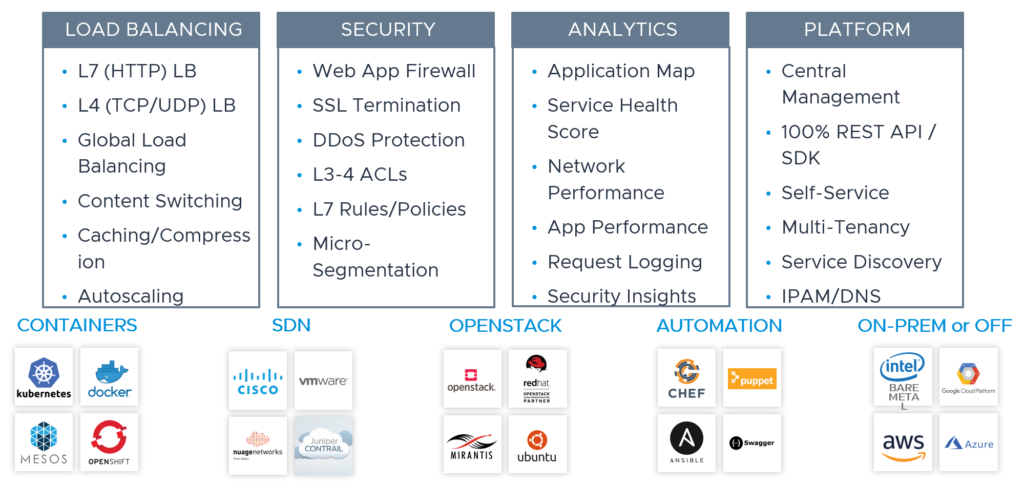

For a distributed application architecture, you also need a software-defined ADC architecture that is fully distributed, auto-scalable and provides real-time analytics and security for VMs or containers. VMware’s NSX Advanced Load Balancer (formerly known as Avi Networks) runs on AWS, GCP, Azure, OpenStack and VMware and has a rich feature set.

Hypervisor versus Public Cloud

What I am trying to say here is that cloud-native at scale requires much more than containers. Hypervisors are obviously not going to disappear or be replaced by containers from the public cloud anytime soon. Both will co-exist, and therefore it is very important to implement solutions which can be used everywhere. If you can ignore the cost factor for a moment, probably the best solution would be to use the exact same technology stack and tools for all the clouds your workloads are running on.

You need to rely on a partner and solution portfolio that can address or solve anything (or almost anything) you are building in your IT landscape. As I already said, VCF and Tanzu are just a few pieces of the big puzzle. What matters is an end-to-end approach across every layer and perspective.

Therefore, I believe, VMware is very relevant and very well-positioned to support your journey to the multi-cloud.

The applications you migrate or modernize need to be accessed by your users in a simple and secure way. This would lead us, for example, to the next topic, where we could start a discussion about the digital workspace or end-user computing (EUC).

Talking about EUC and the future-ready workplace would involve other IT initiatives like hybrid or multi-cloud, application modernization, data center and cloud networking, workspace security, network security and so on. That discussion would touch all the strategic pillars VMware has defined and presented since VMworld 2019.

If your goal is also to remove silos, provide a better user and admin experience, and this in a secure way over any cloud, then I would say that VMware’s unique platform approach is the best option you’ll find on the market.

And since VMware can and will co-exist with the hyperscalers, and even run on top of all of them, I would consider talking about the “big four” and not the “big three” hyperscalers from now on.

Excellent overview. Well written for both a business and technical audience. – Thanks!

The video link below complements Michael’s article really well. It provides some product history while digging into more of the details from Michael’s article.

https://www.youtube.com/watch?v=-QMC7_GKYr4

I would enjoy seeing Jason and Michael collaborating on an educational piece for both business and tech!

Thank you so much for your feedback. Very appreciated, Michael!