VMware Cloud Foundation 5.1 – Technical Overview

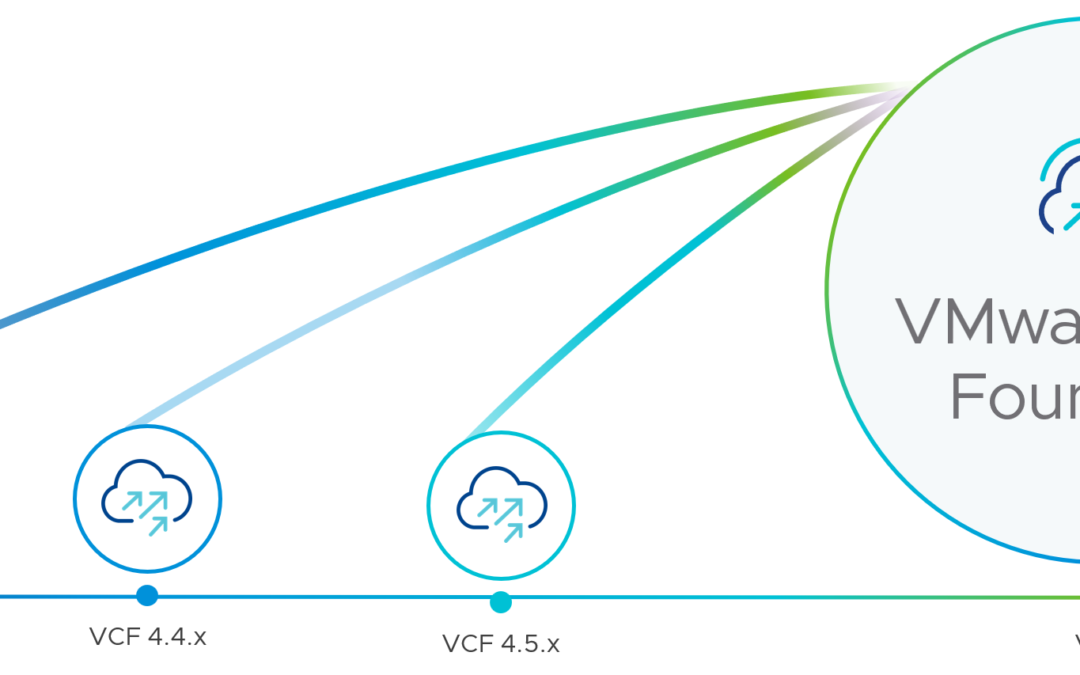

This technical overview supersedes the previous version, which was based on VMware Cloud Foundation 5.0, and covers all capabilities and enhancements delivered with VCF 5.1.

What is VMware Cloud Foundation (VCF)?

VMware Cloud Foundation is a multi-cloud platform that provides a full-stack hyperconverged infrastructure (HCI) for modernizing data centers and deploying modern container-based applications. VCF is built from several components: vSphere (compute), vSAN (storage), NSX (networking), and parts of the Aria Suite (formerly vRealize Suite). VCF follows a standardized, automated, and validated approach that simplifies the management of all required software-defined infrastructure resources.

This stack provides customers with consistent infrastructure and operations in a cloud operating model that can be deployed on-premises, at the edge, or in the public cloud.

What software is being delivered in VMware Cloud Foundation?

Update February 16th, 2024: Please have a look at this article to understand the current VCF licensing. I will publish an updated version of this blog as soon as VMware Cloud Foundation 5.2 has been released.

The BoM (bill of materials) changes with each VCF release. VCF 5.1 includes the following components and software versions:

| Software Component | Version | Date | Build Number |
|---|---|---|---|
| Cloud Builder VM | 5.1 | 07 NOV 2023 | 22688368 |
| SDDC Manager | 5.1 | 07 NOV 2023 | 22688368 |
| VMware vCenter Server Appliance | 8.0 Update 2a | 26 OCT 2023 | 22617221 |
| VMware ESXi | 8.0 Update 2 | 21 SEP 2023 | 22380479 |
| VMware vSAN Witness Appliance | 8.0 Update 2 | 21 SEP 2023 | 22385739 |
| VMware NSX | 4.1.2.1 | 07 NOV 2023 | 22667789 |
| VMware Aria Suite Lifecycle | 8.14 | 19 OCT 2023 | 22630473 |

- VMware vSAN is included in the VMware ESXi bundle.

- You can use VMware Aria Suite Lifecycle to deploy VMware Aria Automation, VMware Aria Operations, VMware Aria Operations for Logs, and Workspace ONE Access. VMware Aria Suite Lifecycle determines which versions of these products are compatible and only allows you to install/upgrade to supported versions.

- VMware Aria Operations for Logs content packs are installed when you deploy VMware Aria Operations for Logs.

- The VMware Aria Operations management pack is installed when you deploy VMware Aria Operations.

- You can access the latest versions of the content packs for VMware Aria Operations for Logs from the VMware Solution Exchange and the VMware Aria Operations for Logs in-product marketplace store.
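As a rough illustration of how the BoM can be used in practice, the following Python sketch compares the build numbers reported by an environment against the VCF 5.1 build numbers listed above. The function name `find_bom_mismatches` and the shape of the `deployed` dictionary are assumptions made for this example (they are not part of any VMware API), and the component names follow the VCF 5.1 release notes.

```python
from typing import Dict, List

# Expected build numbers from the VCF 5.1 bill of materials.
# Component names follow the VCF 5.1 release notes.
VCF_5_1_BOM: Dict[str, str] = {
    "Cloud Builder VM": "22688368",
    "SDDC Manager": "22688368",
    "VMware vCenter Server Appliance": "22617221",
    "VMware ESXi": "22380479",
    "VMware vSAN Witness Appliance": "22385739",
    "VMware NSX": "22667789",
    "VMware Aria Suite Lifecycle": "22630473",
}


def find_bom_mismatches(deployed: Dict[str, str]) -> List[str]:
    """Return one message per component whose build differs from the BoM.

    `deployed` is a hypothetical mapping of component name to build number,
    for example collected manually from each product's "About" page.
    """
    problems: List[str] = []
    for component, expected in VCF_5_1_BOM.items():
        actual = deployed.get(component)
        if actual is None:
            problems.append(f"{component}: not reported")
        elif actual != expected:
            problems.append(f"{component}: build {actual}, BoM expects {expected}")
    return problems
```

An empty result means every reported build matches the 5.1 BoM; anything else points at a component that is missing from the input or runs a different build.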

What’s new with VCF 5.1?

Important changes mentioned in the release notes:

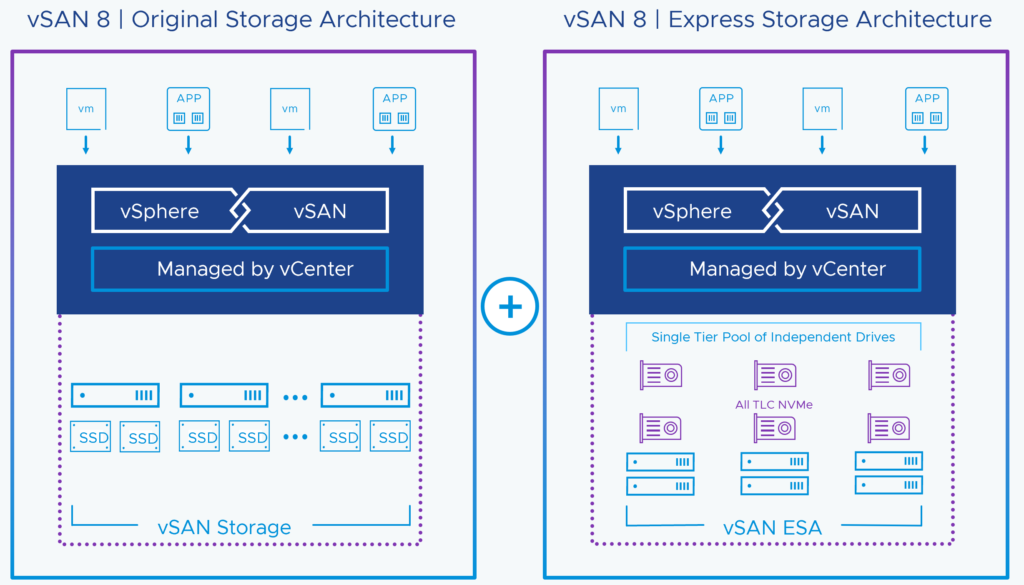

- Support for vSAN ESA. vSAN ESA is an alternative, single-tier architecture designed from the ground up for NVMe-based platforms to deliver higher performance with more predictable I/O latencies, higher space efficiency, per-object data services, and native, high-performance snapshots.

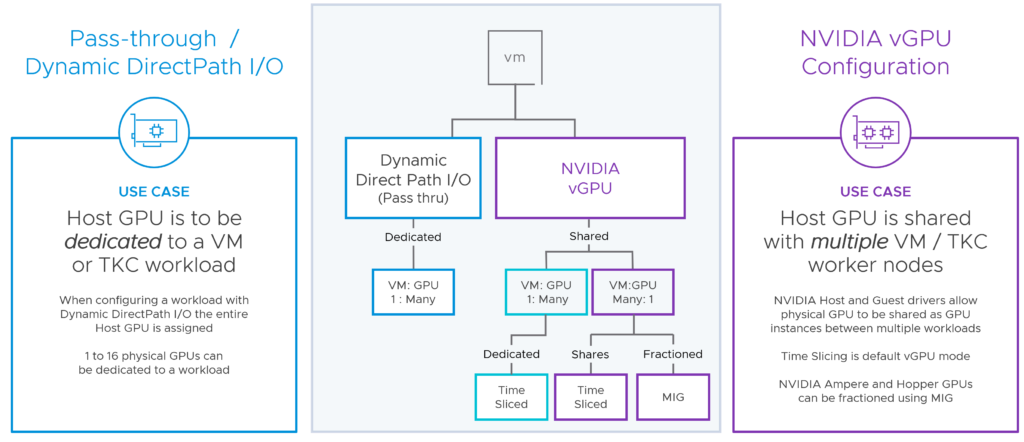

- vSphere Distributed Services Engine for ReadyNodes. AMD Pensando and NVIDIA BlueField-2 DPUs are now supported. Offloading the vSphere Distributed Switch (VDS) and NSX network and security functions to the hardware provides significant performance improvements for low-latency and high-bandwidth applications. NSX distributed firewall processing is also offloaded from the server CPUs to the network silicon.

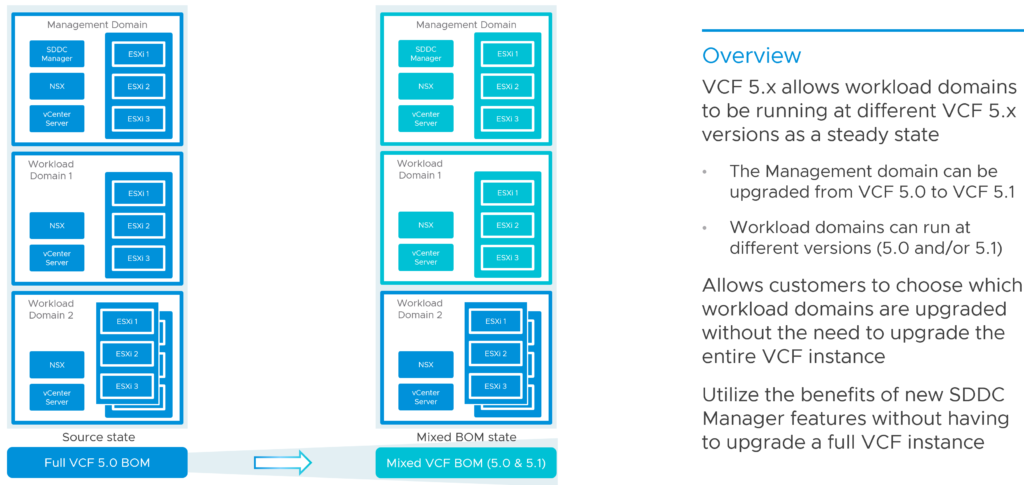

- Mixed-mode Support for Workload Domains. A VCF instance can exist in a mixed BOM state where the workload domains are on different VCF 5.x versions. Note: The management domain should be on the highest version in the instance.

- Support for mixed license deployment. A combination of keyed and keyless licenses can be used within the same VCF instance.

- VMware vRealize rebranding. VMware recently renamed the vRealize Suite of products to VMware Aria Suite. See the Aria Naming Updates blog post for more details.

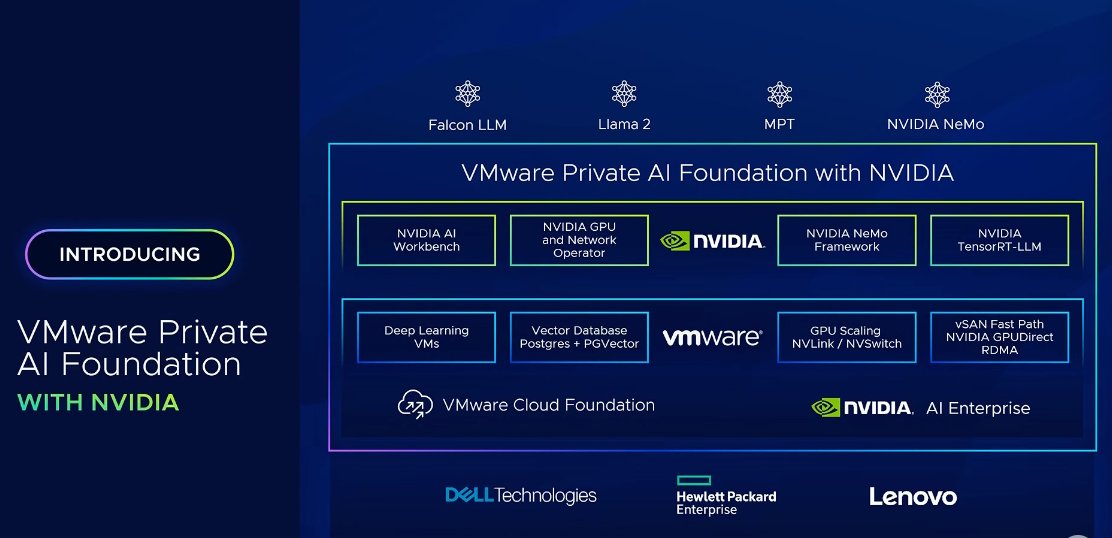

- Increased GPU scale. With VMware Cloud Foundation 5.1, VMs can be configured with up to 16 GPU devices.
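The mixed-mode rule from the list above (workload domains may run different VCF 5.x versions, while the management domain should be on the highest version in the instance) can be sketched as a small check. This is only an illustration: the function names and the plain version strings are assumptions for this example, and SDDC Manager performs its own validation.

```python
from typing import Dict, List, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '5.1' into (5, 1, 0, 0) so that '5.1' and '5.1.0' compare as equal."""
    parts = [int(p) for p in version.split(".")]
    return tuple(parts + [0] * (4 - len(parts)))


def check_mixed_mode(management: str, workload_domains: Dict[str, str]) -> List[str]:
    """Return a list of rule violations; an empty list means the mix is acceptable."""
    problems: List[str] = []
    mgmt = parse_version(management)
    if mgmt[0] != 5:
        problems.append(f"management domain is on {management}, not a 5.x release")
    for name, version in workload_domains.items():
        parsed = parse_version(version)
        if parsed[0] != 5:
            problems.append(f"{name} is on {version}, not a 5.x release")
        elif parsed > mgmt:
            problems.append(
                f"{name} ({version}) is newer than the management domain ({management})"
            )
    return problems
```

For example, a management domain on 5.1 with workload domains on 5.0 and 5.1 passes the check, while a workload domain on 5.1 under a 5.0 management domain is reported.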

What are the VMware Cloud Foundation components?

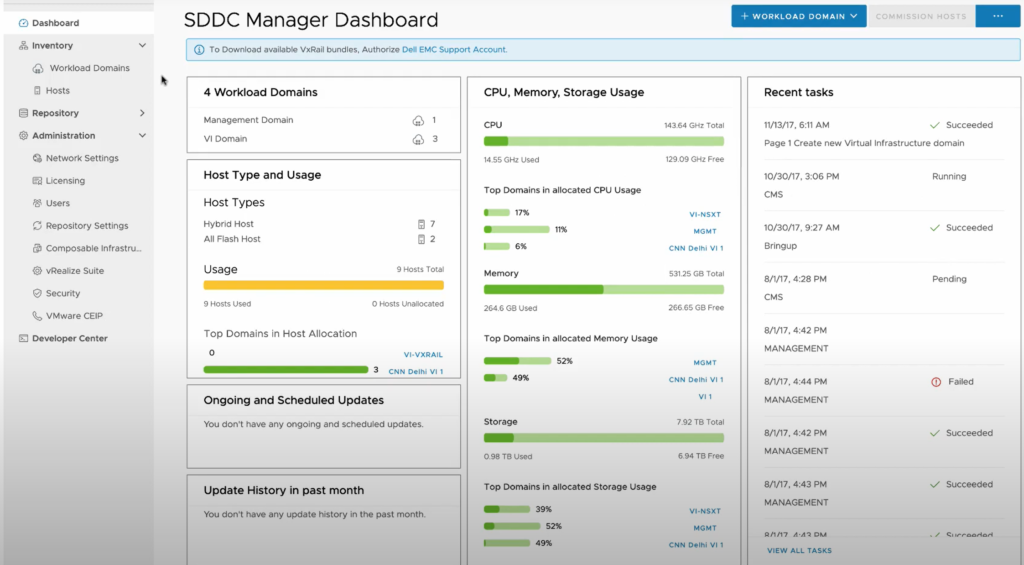

To manage the logical infrastructure in the private cloud, VMware Cloud Foundation augments the VMware virtualization and management components with VMware Cloud Builder and VMware Cloud Foundation SDDC Manager.

| VMware Cloud Foundation Component | Description |

|---|---|

| VMware Cloud Builder | VMware Cloud Builder automates the deployment of the software-defined stack, creating the first software-defined unit known as the management domain. |

| SDDC Manager |

SDDC Manager automates the entire system life cycle, that is, from configuration and provisioning to upgrades and patching including host firmware, and simplifies day-to-day management and operations. From this interface, the virtual infrastructure administrator or cloud administrator can provision new private cloud resources, monitor changes to the logical infrastructure, and manage life cycle and other operational activities.

|

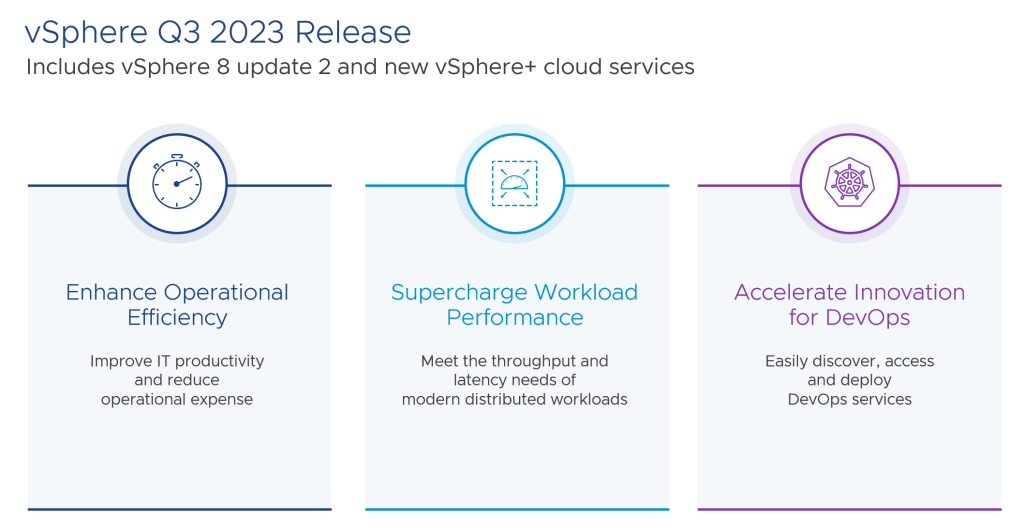

| vSphere |

vSphere uses virtualization to transform individual data centers into aggregated computing infrastructures that include CPU, storage, and networking resources. VMware vSphere manages these infrastructures as a unified operating environment and provides you with the tools to administer the data centers that participate in that environment. The two core components of vSphere are ESXi and vCenter Server. ESXi is the virtualization platform where you create and run virtual machines and virtual appliances. vCenter Server is the service through which you manage multiple hosts connected in a network and pool host resources. |

| vSAN |

vSAN aggregates local or direct-attached data storage devices to create a single storage pool that is shared across all hosts in the vSAN cluster. Using vSAN removes the need for external shared storage, and simplifies storage configuration and virtual machine provisioning. Built-in policies allow for flexibility in data availability. |

| NSX | NSX is focused on providing networking, security, automation, and operational simplicity for emerging application frameworks and architectures that have heterogeneous endpoint environments and technology stacks. NSX supports cloud-native applications, bare-metal workloads, multi-hypervisor environments, public clouds, and multiple clouds. |

| vSphere with Tanzu | By using the integration between VMware Tanzu and VMware Cloud Foundation, you can deploy and operate the compute, networking, and storage infrastructure for vSphere with Tanzu, also called Workload Management. vSphere with Tanzu transforms vSphere to a platform for running Kubernetes workloads natively on the hypervisor layer. When enabled on a vSphere cluster, vSphere with Tanzu provides the capability to run Kubernetes workloads directly on ESXi hosts and to create upstream Kubernetes clusters within dedicated resource pools. |

| VMware Aria Suite |

VMware Cloud Foundation supports automated deployment of VMware Aria Suite Lifecycle. You can then deploy and manage the life cycle of Workspace ONE Access and the VMware Aria Suite products (VMware Aria Operations for Logs, VMware Aria Automation, and VMware Aria Operations) by using VMware Aria Suite Lifecycle. VMware Aria Suite is a purpose-built management solution for the heterogeneous data center and the hybrid cloud. It is designed to deliver and manage infrastructure and applications to increase business agility while maintaining IT control. It provides the most comprehensive management stack for private and public clouds, multiple hypervisors, and physical infrastructure. |

VMware Cloud Foundation Architecture

VCF is made for greenfield deployments (brownfield not supported) and supports two different architecture models:

- Standard Architecture

- Consolidated Architecture

The standard architecture separates management workloads and lets them run on a dedicated management workload domain. Customer workloads are deployed on a separate virtual infrastructure workload domain (VI workload domain). Each workload domain is managed by a separate vCenter Server instance, which allows autonomous licensing and lifecycle management.

Note: The standard architecture is the recommended model because it separates management workloads from customer workloads.

Customers with a small environment (or a PoC) can start with a consolidated architecture. This allows you to run customer and management workloads together on the same workload domain (WLD).

Management Domain

The management domain is created during the bring-up process by VMware Cloud Builder and contains the VMware Cloud Foundation management components as follows:

- Minimum four ESXi hosts

- An instance of vCenter Server

- A three-node NSX Manager cluster

- SDDC Manager

- vSAN datastore

- One or more vSphere clusters, each of which can scale up to the vSphere maximum of 64 hosts

VI Workload Domains

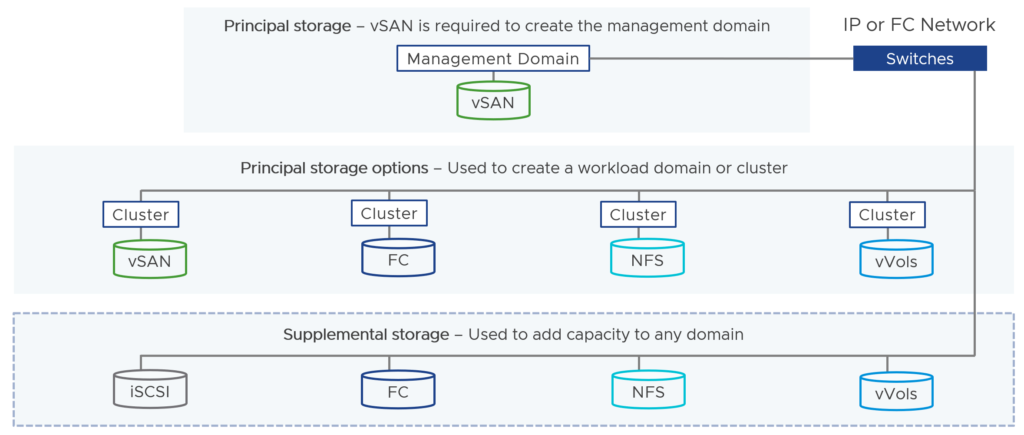

You create VI workload domains to run customer workloads. For each VI workload domain, you can choose the storage option – vSAN, NFS, vVols, or VMFS on FC.

A VI workload domain consists of one or more vSphere clusters. Each cluster starts with a minimum of three hosts and can scale up to the vSphere maximum of 64 hosts. SDDC Manager automates the creation of the VI workload domain and the underlying vSphere clusters.

For the first VI workload domain in your environment, SDDC Manager deploys a vCenter Server instance and a three-node NSX Manager cluster in the management domain. For each subsequent VI workload domain, SDDC Manager deploys an additional vCenter Server instance. New VI workload domains can share the same NSX Manager cluster with an existing VI workload domain or you can deploy a new NSX Manager cluster. VI workload domains cannot use the NSX Manager cluster for the management domain.
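The deployment rules described above can be summarized in a short Python sketch. It only models the decision logic (which appliances are deployed, and which NSX Manager cluster a new VI workload domain ends up using); the names `ViDomain`, `plan_vi_domain`, and the cluster labels are hypothetical and do not correspond to the SDDC Manager API.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical label for the NSX Manager cluster of the management domain.
MGMT_NSX_CLUSTER = "mgmt-nsx"


@dataclass
class ViDomain:
    name: str
    nsx_cluster: str  # label of the NSX Manager cluster serving this domain


def plan_vi_domain(
    name: str,
    existing: List[ViDomain],
    share_nsx_with: Optional[str] = None,
) -> Tuple[List[str], str]:
    """Return (appliances to deploy, NSX Manager cluster label) for a new VI domain."""
    # Every VI workload domain gets its own vCenter Server instance.
    deploy = ["vCenter Server"]
    if share_nsx_with is None:
        # First domain, or a later domain that should get a new NSX Manager cluster.
        deploy.append("NSX Manager cluster (3 nodes)")
        return deploy, f"{name}-nsx"
    if share_nsx_with == MGMT_NSX_CLUSTER:
        raise ValueError("VI workload domains cannot use the management domain's NSX Manager cluster")
    if share_nsx_with not in {d.nsx_cluster for d in existing}:
        raise ValueError(f"no existing VI workload domain uses NSX Manager cluster {share_nsx_with!r}")
    return deploy, share_nsx_with
```

Calling `plan_vi_domain("wld01", [])` yields a vCenter Server plus a new three-node NSX Manager cluster, whereas a second domain that passes `share_nsx_with="wld01-nsx"` only adds a vCenter Server.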

What is a vSAN Stretched Cluster?

vSAN stretched clusters extend a vSAN cluster from a single site to two sites for a higher level of availability and inter-site load balancing.

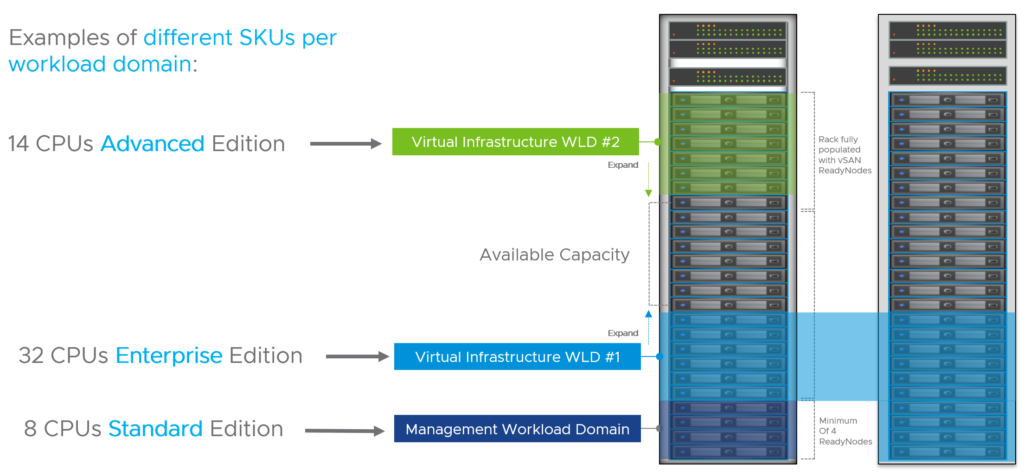

Does VCF provide flexible workload domain sizing?

Yes, that’s possible. You can license the WLDs based on your needs and use the editions that make the most sense depending on your use cases.

How many physical nodes are required to deploy VMware Cloud Foundation?

A minimum of four physical nodes is required to start in a consolidated architecture or to build your management workload domain. Four nodes are required to ensure that the environment can tolerate a failure while another node is being updated.

VI workload domains require a minimum of three nodes.
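The node counts above can be expressed as a simple calculation. The sketch below is an illustration only: the helper names are made up for this example, and the reasoning behind the four-node minimum assumes a vSAN storage policy with mirroring and one failure to tolerate, which needs at least three hosts contributing to the cluster.

```python
# Minimum initial host counts per domain type, as stated in the text.
MIN_HOSTS = {"management": 4, "vi": 3}
# vSphere maximum number of hosts per cluster, as stated in the text.
MAX_HOSTS_PER_CLUSTER = 64
# Assumption: vSAN mirroring with one failure to tolerate needs three hosts.
VSAN_MIN_HOSTS_FTT1 = 3


def initial_cluster_size_ok(domain_type: str, hosts: int) -> bool:
    """Check a cluster's host count against the minimum and maximum."""
    return MIN_HOSTS[domain_type] <= hosts <= MAX_HOSTS_PER_CLUSTER


def tolerates_failure_during_update(hosts: int, hosts_in_maintenance: int = 1) -> bool:
    """True if enough hosts stay online during an update to still survive a failure."""
    return hosts - hosts_in_maintenance >= VSAN_MIN_HOSTS_FTT1
```

With four hosts, three remain online while one is being updated, so a further failure can still be tolerated; with three hosts, only two remain, which is why the management domain (and the consolidated architecture) starts at four.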

Can I mix vSAN ReadyNodes and Dell EMC VxRail deployments?

No. This is not possible.

What about edge/remote use cases?

If you would like to deploy VMware Cloud Foundation workload domains at a remote site, you can deploy so-called “VCF Remote Clusters”. These remote workload domains are managed by the VCF instance at the central site, and you can perform the same full-stack lifecycle management for the remote sites from the central SDDC Manager.

Prerequisites to deploy remote clusters can be found here.

Note: If vSAN is used, VCF supports a minimum of 3 and a maximum of 4 nodes per VCF Remote Cluster. If NFS, vVols, or Fibre Channel is used as principal storage, VCF supports a minimum of 2 and a maximum of 4 nodes.

Important: Remote clusters and remote workload domains are not supported when VCF+ is enabled.
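The sizing limits and the VCF+ restriction for remote clusters can be captured in a small lookup. This is a minimal sketch for planning purposes; the storage keys and the function name `remote_cluster_size_ok` are assumptions made for this example.

```python
# (minimum, maximum) node count per VCF Remote Cluster, by principal storage type.
REMOTE_CLUSTER_NODE_LIMITS = {
    "vsan": (3, 4),
    "nfs": (2, 4),
    "vvols": (2, 4),
    "fc": (2, 4),
}


def remote_cluster_size_ok(
    principal_storage: str, nodes: int, vcf_plus_enabled: bool = False
) -> bool:
    """Check a planned remote cluster against the limits stated above."""
    if vcf_plus_enabled:
        # Remote clusters are not supported when VCF+ is enabled.
        return False
    key = principal_storage.lower()
    if key not in REMOTE_CLUSTER_NODE_LIMITS:
        raise ValueError(f"unknown principal storage type: {principal_storage!r}")
    minimum, maximum = REMOTE_CLUSTER_NODE_LIMITS[key]
    return minimum <= nodes <= maximum
```

For instance, a two-node remote cluster is acceptable with NFS as principal storage but not with vSAN.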

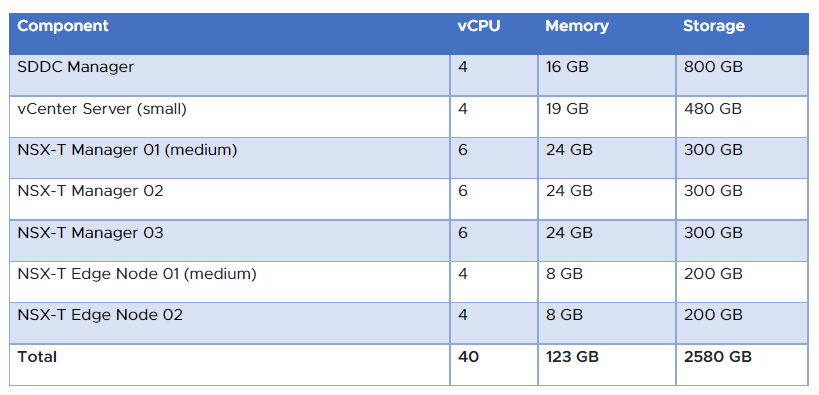

How many resources does the VCF management WLD need during the bring-up process?

We know that VCF includes vSphere (ESXi and vCenter), vSAN, SDDC Manager, NSX, and optionally some components of the Aria Suite (formerly vRealize Suite). The following table gives you an idea of the resources required to get VCF up and running:

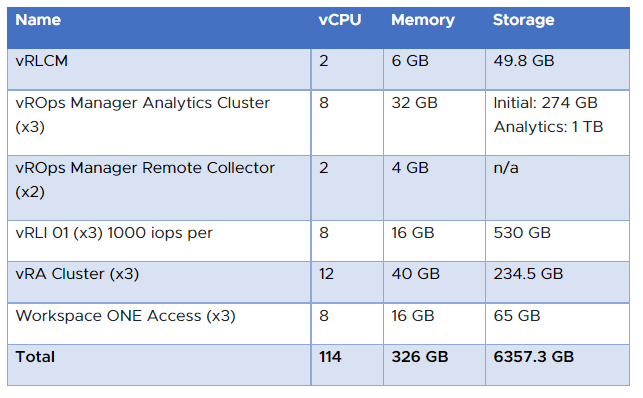

If you would like to know how many resources the Aria Suite (formerly vRealize Suite) consumes in the management workload domain, have a look at this table:

Does VCF support HCI Mesh?

Yes. VMware Cloud Foundation 4.2 and later support sharing remote datastores with HCI Mesh for VI workload domains.

HCI Mesh is a software-based approach for disaggregation of compute and storage resources in vSAN. HCI Mesh brings together multiple independent vSAN clusters by enabling cross-cluster utilization of remote datastore capacity within vCenter Server. HCI Mesh enables you to efficiently utilize and consume data center resources, which provides simple storage management at scale.

Note: At this time, HCI Mesh is not supported with VCF ROBO.

Important: HCI Mesh can be configured with vSAN OSA or ESA. HCI Mesh is not supported between a mix of vSAN OSA and ESA clusters.
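The two HCI Mesh restrictions above (no mixing of OSA and ESA clusters, and no support with VCF ROBO) boil down to a simple compatibility check. The following sketch is illustrative only; the function name and the "client"/"server" parameter names are chosen for this example, where the client cluster mounts a datastore from the server cluster.

```python
VALID_ARCHITECTURES = {"OSA", "ESA"}


def hci_mesh_supported(client_arch: str, server_arch: str, robo: bool = False) -> bool:
    """Return True if a client cluster may mount a remote datastore from a server cluster."""
    for arch in (client_arch, server_arch):
        if arch not in VALID_ARCHITECTURES:
            raise ValueError(f"unknown vSAN architecture: {arch!r}")
    if robo:
        # HCI Mesh is not supported with VCF ROBO.
        return False
    # Both clusters must use the same vSAN architecture.
    return client_arch == server_arch
```

An ESA cluster can therefore consume capacity from another ESA cluster, but not from an OSA cluster, and vice versa.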

Does VMware Cloud Foundation support vSAN Max?

At the time of writing, no.

How is VMware Cloud Foundation licensed?

Currently, VCF is sold as part of VMware Cloud editions.

How can I migrate my workloads from a non-VCF environment to a new VCF deployment?

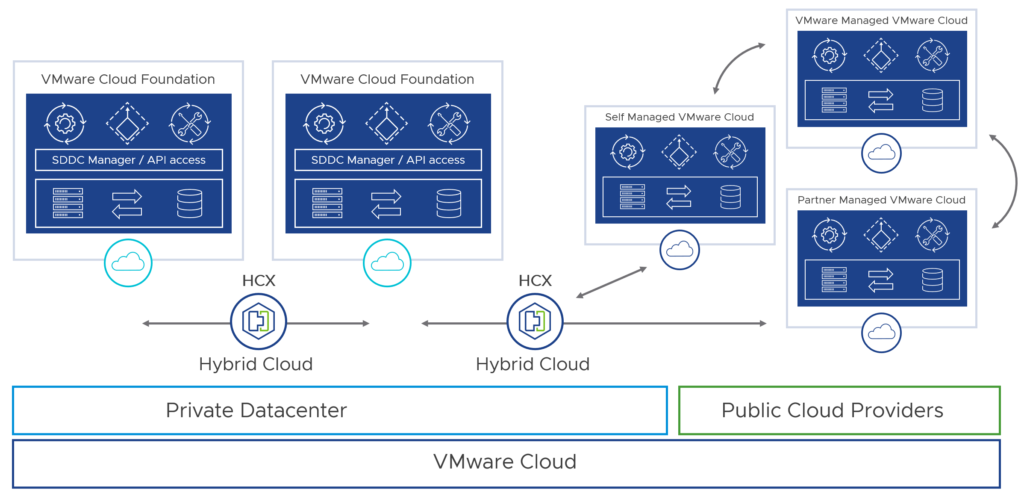

VMware HCX provides a path to modernize from a legacy data center architecture by migrating to VMware Cloud Foundation.

Can I install VCF in my home lab?

Yes, you can. With the VCF Lab Constructor (VLC), you can deploy an automated VCF instance in a nested configuration. There is also a VLC community on Slack for support.

Note: Please have a look at “VCF Holodeck” if you would like to create a smaller “sandbox” for testing or training purposes.

Where can I find more information about VCF?

Please consult the VMware Cloud Foundation FAQ for more information.