API Security with Spring Cloud Gateway and Tanzu Service Mesh

Today, more than ever, both humans and machines consume and process data. We humans consume data on different devices such as smartphones, laptops, and tablets, through multiple applications that are hosted in different clouds. Companies are building applications that need to look good and work well on any platform or device.

At the same time, developers are building new applications following cloud-native principles. A cloud-native architecture is a design pattern for applications that are built for the cloud. Most cloud-native apps are organized as microservices, which break up larger applications into loosely coupled units that can be managed by smaller teams. Resilience and scale are achieved through horizontal scaling, distributed processing, and automated replacement of failed components.

Different people have a different understanding of “cloud-native”, and chances are high that you will get a different answer from each person you ask. Let us look at the official definition from the CNCF:

“Cloud native technologies empower organizations to build and run scalable applications in modern, dynamic environments such as public, private, and hybrid clouds. Containers, service meshes, microservices, immutable infrastructure, and declarative APIs exemplify this approach.

These techniques enable loosely coupled systems that are resilient, manageable, and observable. Combined with robust automation, they allow engineers to make high-impact changes frequently and predictably with minimal toil.”

12-Factor App

A widely accepted methodology for building cloud-based applications is the “Twelve-Factor App”. It uses declarative formats for setup automation to minimize time and cost. An application built this way should offer maximum portability between execution environments and be suitable for deployment on modern cloud platforms. The 12-factor methodology can be applied with any programming language and any combination of backing services (caches, queues, databases).

Interestingly, we now see other factors like API-first, telemetry, and security complementing this list.

While doing research for my book about “workload mobility and application portability”, I saw the term “API-first” many times.

Then I remembered that VMware acquired Mesh7 a while ago and announced Tanzu Service Mesh Enterprise last year at VMworld Europe (now known as VMware Explore). API security was even one of the main topics during the networking & security solutions keynote presented by Tom Gillis.

That is why I thought it was time to better understand this topic and write a piece about APIs. Let us start with some basics first.

What is an API?

An application programming interface (API) is a way for two or more software components to communicate with each other using a set of agreed definitions and protocols. APIs are here to make the developer’s life easier.

I bet you have seen parts of Google Maps already embedded in different websites when you were looking for a specific business or restaurant location. Most websites and developers would use Google Maps in this case, because it just makes sense for us, right? That is why Google exposes the Google Maps API so developers can embed Google Maps objects very easily in a standardized way. Or have you seen anyone who wants to develop their own version of Google Maps?

For enterprises, APIs are a very elegant way to share data with customers or other external users. Public APIs such as the Google Maps APIs can be used by partners, who can then access your data. And we all know that data is the new oil. Companies can make a lot of money today by sharing their data.

Even when using private APIs (internal use only), you decide who can access your API and data. This is one of the reasons why API security and API management are becoming more important. You want to provide secure access when sensitive data is being exposed.

What is an API Gateway?

For microservices-based apps, it makes sense to implement an API gateway, because it can act as a single entry point for all API calls made to your system. And it doesn’t matter if your system/application is hosted on-premises, in the public cloud, or a combination of both. The API gateway takes care of the request (API call) and returns the requested data.
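The single-entry-point idea can be sketched in a few lines. This is a minimal, language-neutral illustration in Python, not how Spring Cloud Gateway is implemented; the `Gateway` class, the `/orders` and `/customers` prefixes, and the two backend functions are all hypothetical names made up for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

# A backend is any callable that takes the remaining path and returns (status, body).
Backend = Callable[[str], Tuple[int, str]]


@dataclass
class Gateway:
    """Single entry point that forwards a call to the backend owning the path prefix."""
    routes: Dict[str, Backend]

    def handle(self, path: str) -> Tuple[int, str]:
        # Try the longest prefix first so a more specific route wins.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path == prefix or path.startswith(prefix + "/"):
                return self.routes[prefix](path[len(prefix):] or "/")
        return 404, "no route"


# Hypothetical backends; they could run on-premises or in a public cloud.
def orders_service(rest: str) -> Tuple[int, str]:
    return 200, f"orders{rest}"


def customers_service(rest: str) -> Tuple[int, str]:
    return 200, f"customers{rest}"


gateway = Gateway(routes={"/orders": orders_service, "/customers": customers_service})
```

A caller only ever talks to `gateway.handle(...)`; where each backend actually runs stays hidden behind the route table.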

Image Source: https://www.tibco.com/reference-center/what-is-an-api-gateway

API gateways can also handle other tasks like authentication, rate limiting, and usage statistics. This is important, for example, when you want to monetize some of your APIs by offering a service to consumers or other companies.
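To make these extra tasks more concrete, here is a small sketch that combines an API key check, a token-bucket rate limit, and per-key call counting. It assumes a simple in-memory setup; `MeteredGateway`, `TokenBucket`, and the key `partner-a` are hypothetical, and the clock is passed in explicitly so the behaviour is easy to follow.

```python
from collections import Counter


class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, now: float = 0.0):
        self.capacity = capacity
        self.rate = rate
        self.tokens = capacity
        self.last = now

    def allow(self, now: float) -> bool:
        # Refill for the time elapsed since the last call, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class MeteredGateway:
    """Checks the API key, enforces a per-key rate limit, and counts calls."""

    def __init__(self, api_keys, capacity: float = 2, rate: float = 1.0):
        self.api_keys = set(api_keys)
        self.capacity = capacity
        self.rate = rate
        self.buckets = {}
        self.calls = Counter()     # accepted calls per API key (basis for billing)
        self.rejected = Counter()  # rejected calls per HTTP status code

    def check(self, api_key: str, now: float) -> int:
        if api_key not in self.api_keys:
            self.rejected[401] += 1
            return 401
        if api_key not in self.buckets:
            self.buckets[api_key] = TokenBucket(self.capacity, self.rate, now)
        if not self.buckets[api_key].allow(now):
            self.rejected[429] += 1
            return 429
        self.calls[api_key] += 1
        return 200
```

The `calls` counter is the kind of number you would need if you wanted to bill a partner per request.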

What is Spring Cloud Gateway for VMware Tanzu?

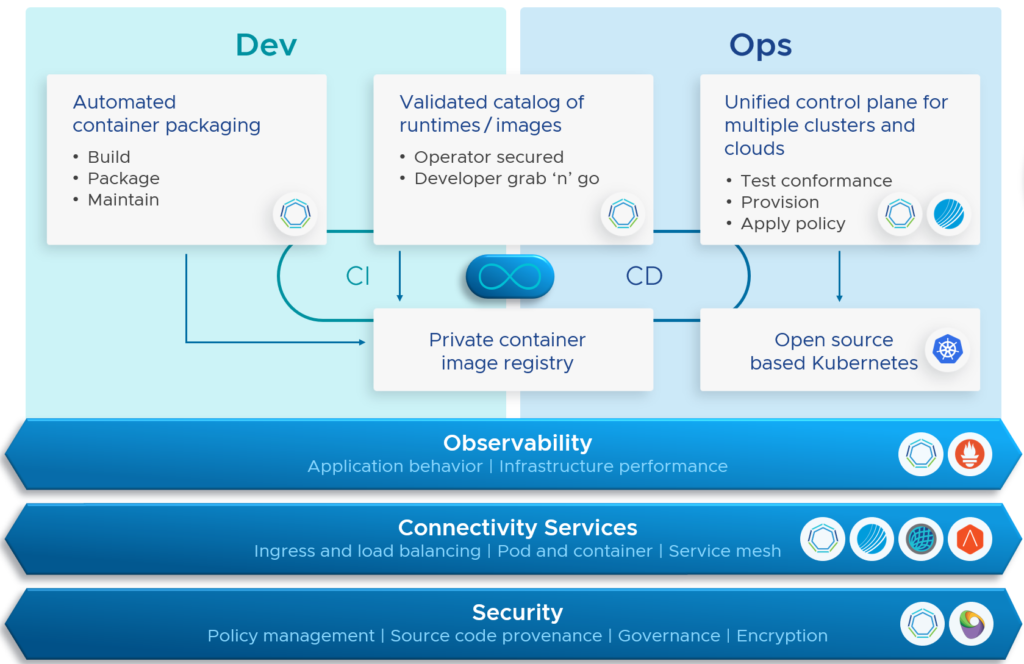

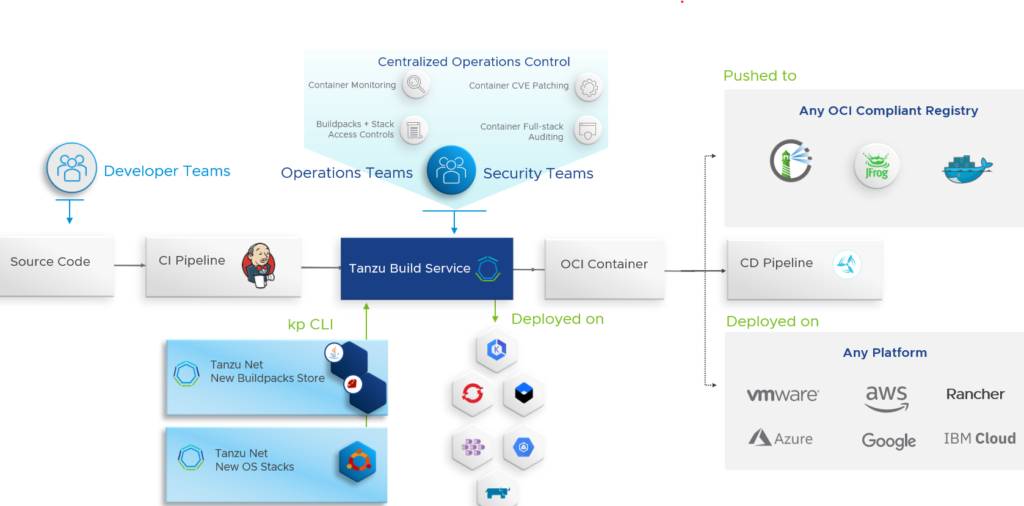

Spring Cloud Gateway for VMware Tanzu provides a simple way to route internal and external API requests to application services that expose APIs. This solution is based on the open-source Spring Cloud Gateway project, which provides a library for building API gateways on top of Spring and Java.

Because Spring Cloud Gateway is intended to sit between a requester and the resource being requested, it is in a position to intercept, analyze, and modify requests.
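What "intercept, analyze, and modify" can look like is easiest to show with a tiny filter chain. The sketch below is plain Python and only illustrates the pattern; it is not the Spring Cloud Gateway API, and the filter names, the `X-Gateway` header, and the `backend` function are assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Request:
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


@dataclass
class Response:
    status: int
    body: str = ""
    headers: Dict[str, str] = field(default_factory=dict)


Handler = Callable[[Request], Response]
Filter = Callable[[Request, Handler], Response]


def require_header(name: str) -> Filter:
    def apply(request: Request, next_handler: Handler) -> Response:
        # Analyze: reject the call before it reaches the backend.
        if name not in request.headers:
            return Response(401, "missing " + name)
        return next_handler(request)
    return apply


def strip_prefix(prefix: str) -> Filter:
    def apply(request: Request, next_handler: Handler) -> Response:
        # Modify the request: the backend does not need to know the public prefix.
        if request.path.startswith(prefix):
            request.path = request.path[len(prefix):] or "/"
        return next_handler(request)
    return apply


def add_response_header(name: str, value: str) -> Filter:
    def apply(request: Request, next_handler: Handler) -> Response:
        # Modify the response on its way back to the requester.
        response = next_handler(request)
        response.headers[name] = value
        return response
    return apply


def chain(filters: List[Filter], backend: Handler) -> Handler:
    """Wrap the backend so that filters run in the order they are listed."""
    handler = backend
    for flt in reversed(filters):
        handler = (lambda f, nxt: lambda req: f(req, nxt))(flt, handler)
    return handler


def backend(request: Request) -> Response:  # hypothetical service behind the gateway
    return Response(200, "served " + request.path)


pipeline = chain(
    [require_header("Authorization"), strip_prefix("/api"), add_response_header("X-Gateway", "sketch")],
    backend,
)
```

Because every filter decides whether to call the next handler, a failed check short-circuits the chain and the backend never sees the request.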

Revitalize Legacy Apps with APIs

Before we had microservices, there were monolithic applications. A monolith is an all-in-one application architecture in which all services are installed on the same virtual machine and depend on each other.

There are multiple reasons why such a monolith cannot be broken up into smaller pieces and modernized. Sometimes it’s not (technically) possible, not worth it, or it just takes too long. Hence, many companies still use such monolithic (legacy) applications. The best example here is the mainframe, which often still runs business-critical applications.

I always thought that my customers only had two options when modernizing applications:

- Start from scratch (throw the old app away)

- Refactor/Rewrite an application

Rewriting an application takes time and costs money. Imagine that you had to refactor 50 of your applications, split these monoliths up into microservices, connect these hundreds or thousands of microservices, and at the same time take care of security (e.g., vulnerabilities).

So, what are you going to do now?

APIs seem to provide a very cost-effective way to integrate some of the older applications with newer ones. With this approach, one can abstract away the data and services from the underlying (legacy) application infrastructure. APIs can extend the life of a legacy application and could be the start of a phased application modernization approach.
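A rough sketch of this abstraction: a thin API function hides a legacy fixed-width record behind a JSON response. Everything here is assumed for illustration, including the `legacy_lookup` stand-in, the record layout (6-character id, 20-character name, 2-character country, 7-digit balance in cents), and the sample customer.

```python
import json


def legacy_lookup(customer_id: str) -> str:
    """Stand-in for a call into the legacy system; returns a fixed-width record."""
    # Hypothetical layout: id(6) + name(20) + country(2) + balance in cents(7)
    records = {"000042": "000042" + "JANE DOE".ljust(20) + "CH" + "0012550"}
    return records.get(customer_id, "")


def get_customer(customer_id: str) -> str:
    """Modern-looking API on top of the legacy call; returns JSON."""
    record = legacy_lookup(customer_id.zfill(6))
    if not record:
        return json.dumps({"error": "not found"})
    return json.dumps({
        "id": int(record[0:6]),
        "name": record[6:26].strip(),
        "country": record[26:28],
        "balance": int(record[28:35]) / 100,
    })
```

New applications only depend on the JSON shape returned by `get_customer`, so the legacy system behind it can later be replaced piece by piece without changing its consumers.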

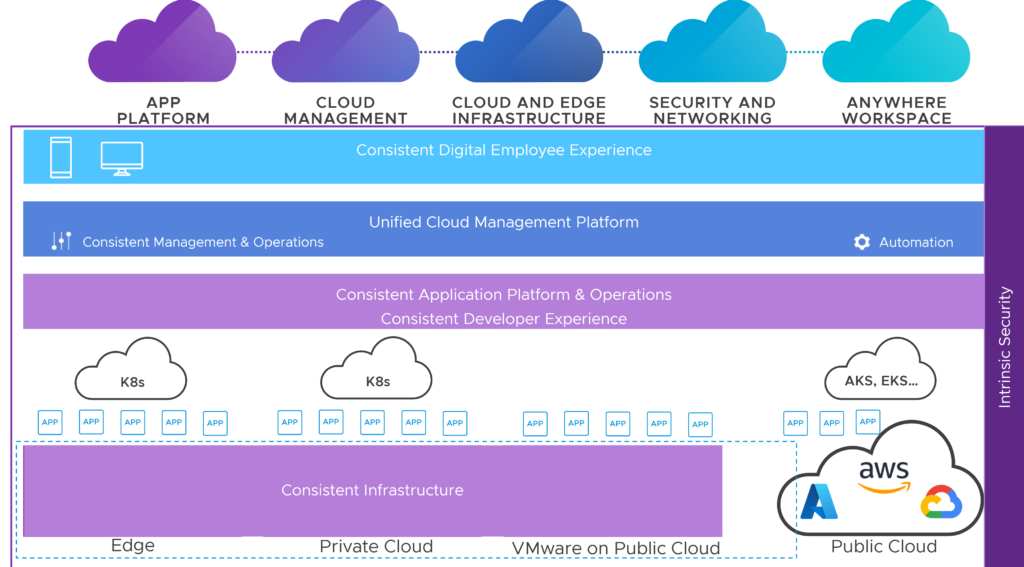

Tanzu Service Mesh Enterprise

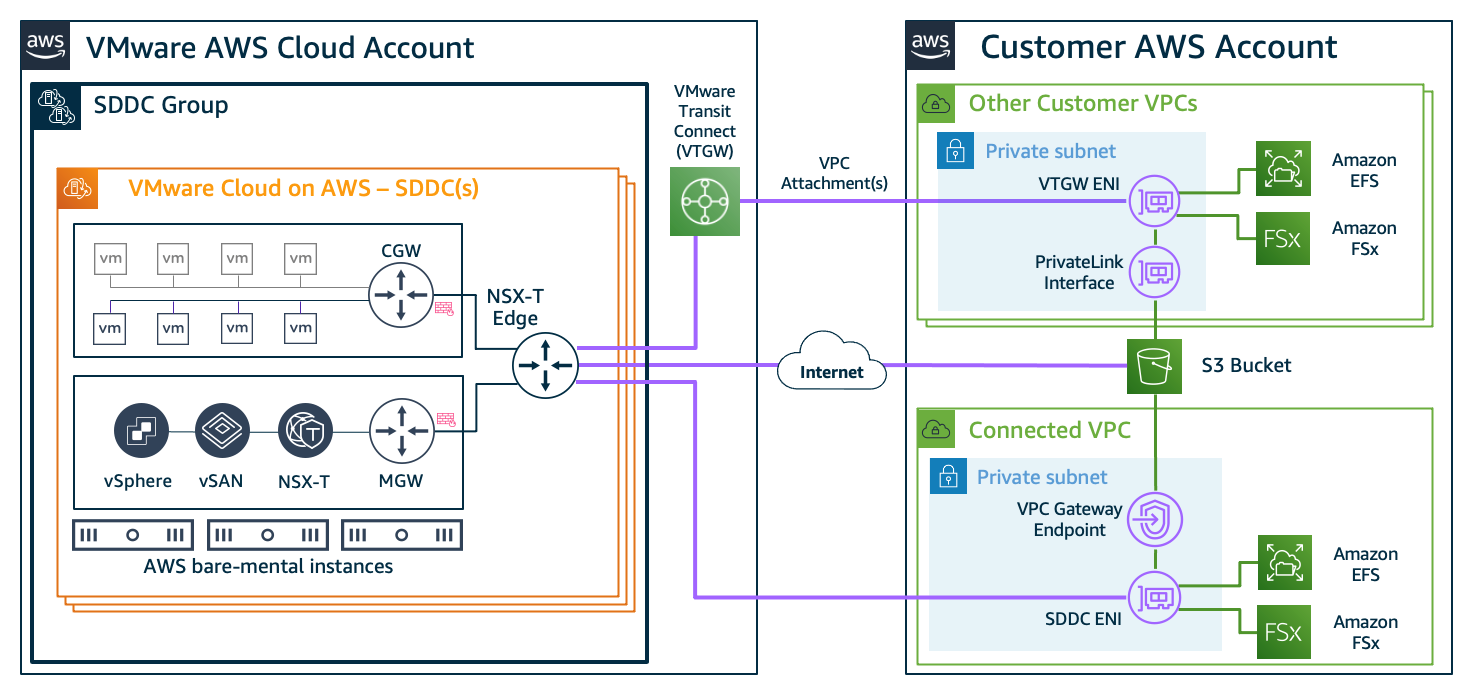

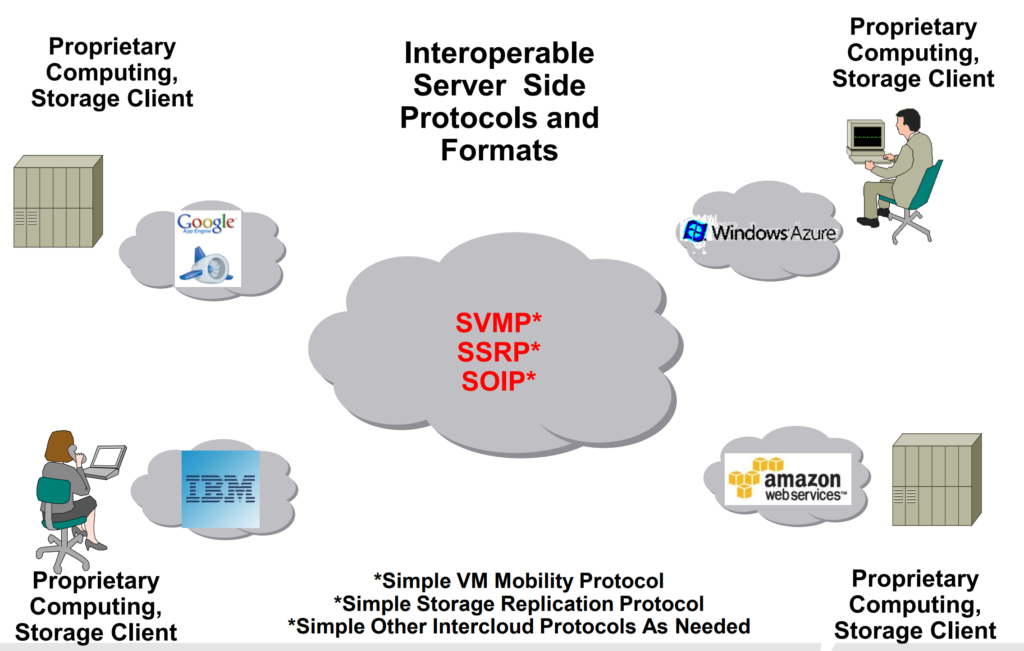

At the moment, we only have an API gateway that sits in front of our microservices. Multiple (micro)services, aggregated together, create the API you want to expose to your internal or external customers. The question now is: how do you plan to expose this API when your microservices are distributed over one or more private or public clouds?

When we talk about APIs, we talk about data in motion. That is why we must secure this data as it is sent from its source to any location. And you want to secure the application and data without increasing the application latency or degrading the user experience.

Now it makes sense to me why VMware acquired Mesh7 in March 2021 and announced Tanzu Service Mesh Enterprise about 6 months later with these additional features:

- API Security. API security is achieved through API vulnerability detection and mitigation, API baselining, and API drift detection (including API parameters and schema validation).

- Personally Identifiable Information (PII) segmentation and detection. PII data is segmented using attribute-based access control (ABAC) and is detected via proper PII data detection and tracking, and end-user detection mechanisms.

- API Security Visibility. API security is monitored using API discovery, security posture dashboards, and rich event auditing.
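To get a feeling for what API baselining and drift detection mean, consider this simplified sketch. It compares an observed payload against a recorded baseline of field names and types. It is only an illustration of the idea and says nothing about how Tanzu Service Mesh implements it; `BASELINE`, `detect_drift`, and the field names are hypothetical.

```python
from typing import Any, Dict, List

# Hypothetical baseline learned from earlier traffic: field name -> expected type.
BASELINE: Dict[str, type] = {"id": int, "email": str, "amount": float}


def detect_drift(baseline: Dict[str, type], payload: Dict[str, Any]) -> List[str]:
    """Return a list of findings where the payload deviates from the baseline."""
    findings = []
    for name, expected in baseline.items():
        if name not in payload:
            findings.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            actual = type(payload[name]).__name__
            findings.append(f"type changed: {name} expected {expected.__name__}, got {actual}")
    for name in payload:
        if name not in baseline:
            # A new, undocumented field could also be a hint that PII is leaking.
            findings.append(f"undocumented field: {name}")
    return findings
```

An empty list means the call matches the baseline; anything else is a candidate for an alert or a blocked request.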

Final Words

APIs are used to connect different applications. They are also used to aggregate services or functions that can be consumed by other businesses or partners. Modern, containerized applications bring a large number of APIs with them that can be hosted in any cloud.

With Spring Cloud Gateway and Tanzu Service Mesh Enterprise, VMware can deliver application connectivity services that enable improved developer experience and more secure operations.

It took me almost a year to realize the strengths of these (combined) products and why VMware, for example, acquired Mesh7. But it makes sense to me now, even though I do not yet completely understand all the key features of Spring Cloud Gateway and Tanzu Service Mesh.