Digital Sovereignty and the Broadcom Turning Point: Why October 2027 Becomes Critical for the Public Sector

English Version: https://www.linkedin.com/pulse/broadcoms-october-2027-turning-point-why-public-sector-rebmann-t3vme/

Digital sovereignty has become a central concept in recent years, especially in the public sector. Yet the discussion often stays at the surface. It tends to revolve around data locations, European cloud initiatives, or additional security mechanisms. What gets overlooked is the level at which sovereignty is actually gained or lost: the architecture of the platforms our IT is built on.

For many years, organizations built their infrastructure on VMware. Virtualization was the stable core on which modern data centers, and later private cloud environments, developed. In their original form these environments were modular. Compute, storage, and network, including management, could be operated and evolved independently of one another. This modularity was a decisive success factor, above all in the SME segment. It allowed organizations to adapt their architecture step by step, to replace or add technologies, and to evolve their operating models without having to rebuild the entire foundation each time.

At the same time, a strong market concentration has built up over the years. It is realistic to assume that around 80 percent of the public sector runs on VMware technology today. For a long time this broad adoption was an advantage, because it enabled standardization, skills development, and a strong partner ecosystem. Today, that same concentration is becoming a structural risk. When a single vendor fundamentally changes its strategy, the effect is not limited to individual organizations but reaches a large part of the entire ecosystem, and indeed a large part of Swiss data centers.

Broadcom's acquisition of VMware has fundamentally changed this starting position. The transformation is not happening in a single step but in several clearly recognizable phases.

Phase 1

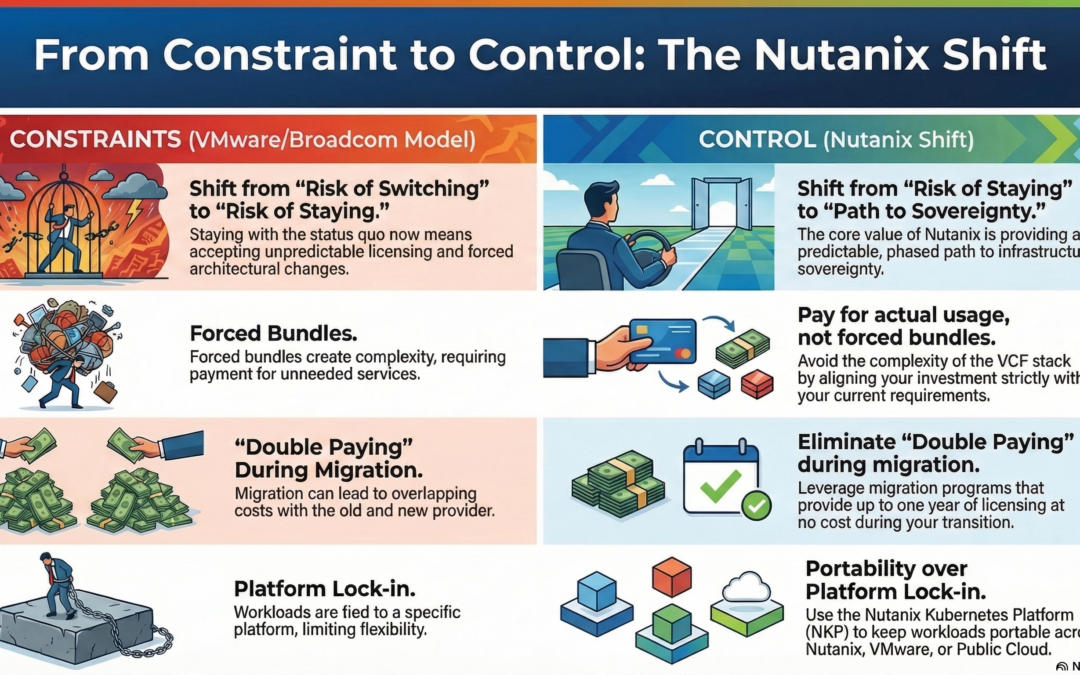

The first cut was economic. New licensing models and bundles changed the cost structure and in many cases raised it significantly. This already noticeably restricted the economic sovereignty of many organizations. Decisions could no longer be made on the basis of actual need alone but increasingly had to follow predefined licensing models.

Phase 2

In parallel, the partner ecosystem has changed. Many VMware partners have disappeared or adapted their role. For customers this means a smaller choice of integrators and service providers, less competition, and therefore indirectly less leverage. Sovereignty shows not only in technology but also in the ability to choose between different partners and operating models. When that choice shrinks, so does the freedom to act.

Phase 3

The third phase, which is now taking shape, is the technical and structural one. With the strategic focus on VMware Cloud Foundation 9 (VCF) as the dominant target model, the architecture itself becomes an instrument of control. What used to be a flexible toolkit is increasingly turning into an integrated full stack in which individual components can no longer be considered independently of one another.

From a technical point of view, such an approach has advantages. Standardization reduces complexity, integrated operating models can deliver efficiency gains, and a clearly defined stack simplifies operations. But this integration has a consequence that is often underestimated in the current discussion: it changes the fundamental relationship between customer and platform.

Digital sovereignty can be measured by three central capabilities:

- the ability to switch,

- the ability to shape, and

- the ability to exert influence.

These three dimensions are decisive because they determine whether an organization can actively steer its IT or whether it increasingly grows into a predefined model.

It is exactly these capabilities that the current development reduces step by step. The option to switch formally remains but becomes much harder in practice, because switching no longer means replacing individual components but transforming an entire system. The ability to shape declines because architecture decisions are increasingly defined by the vendor (Broadcom). And the ability to exert influence also drops, since negotiating power structurally decreases as dependence on the integrated stack grows, much as it does with the public cloud.

Many organizations have reacted to the first changes. Larger hospitals and cantons, for example, have renewed their contracts with Broadcom to gain short-term planning security and operational calm. This decision is understandable. It buys time, stabilizes budgets, and avoids short-term risks.

VCF 9 mandatory from October 2027

But this is exactly where a misunderstanding lies, one that surfaces in many conversations. With respect to the architectural shift, these renewals have not created any additional time.

The actual development continues regardless. The strategic focus on VCF (VCF 9) and the associated transformation of the architecture remain in place. A contract renewal does not move the relevant date.

The real turning point stays where it is: October 2027.

How VCF Operations enforces the target model

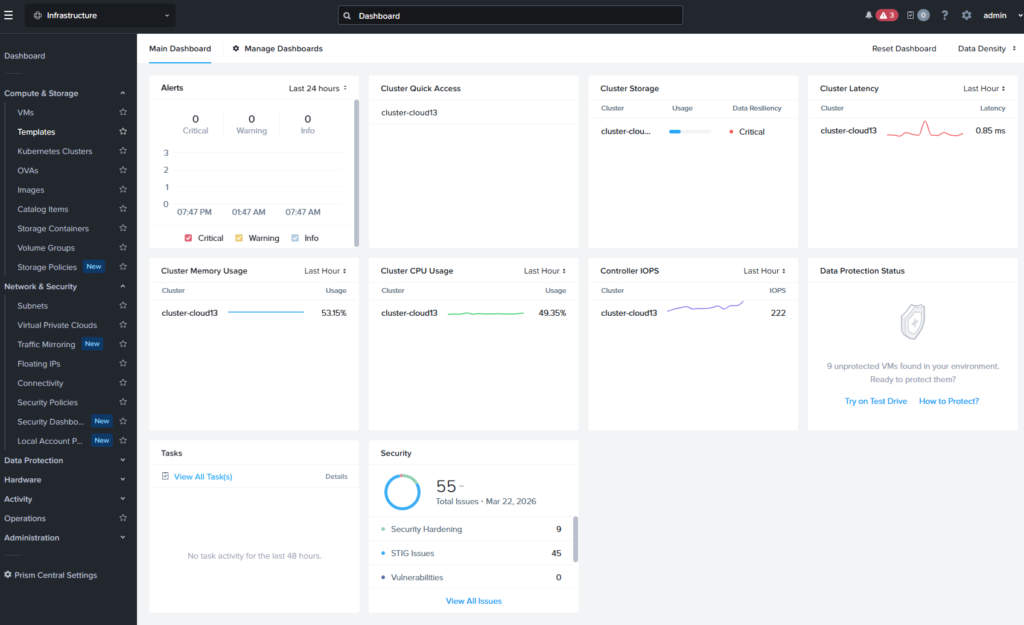

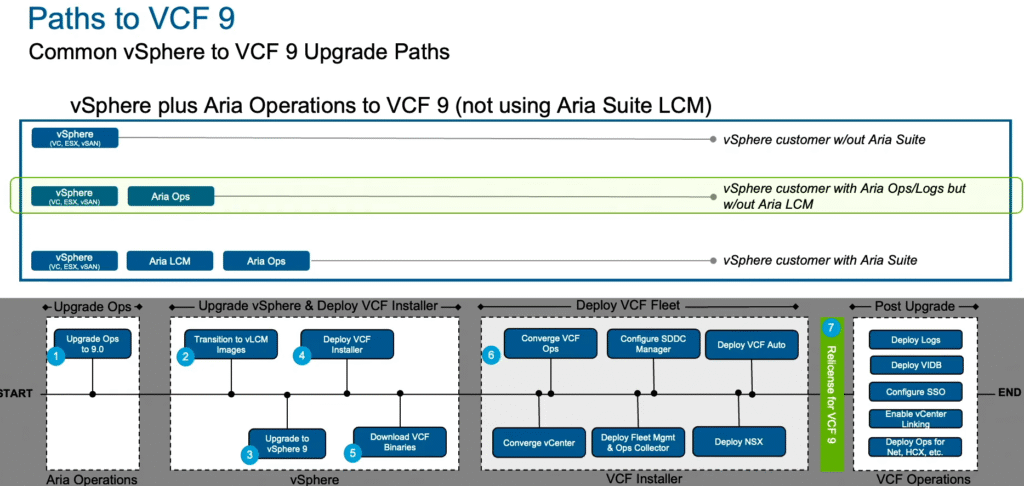

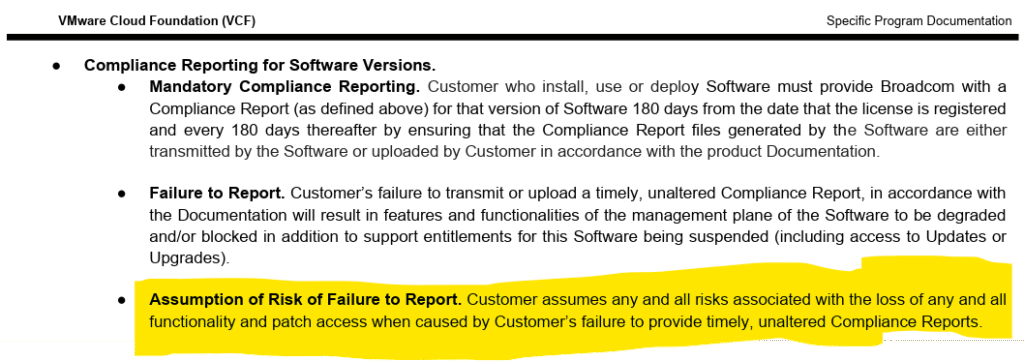

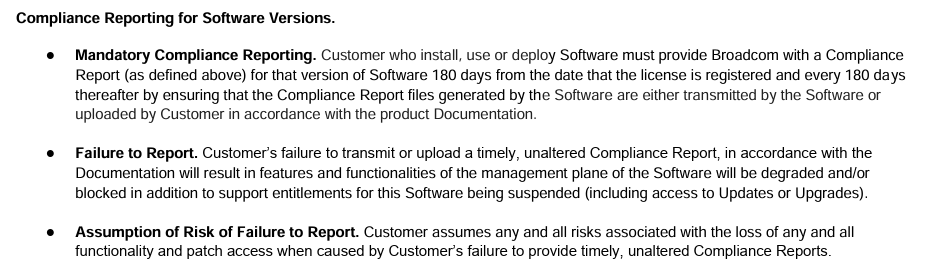

With version 9 of VMware Cloud Foundation, not only the architecture changes but also the way compliance is implemented in day-to-day operations. According to the current license and usage terms, mandatory compliance reporting is introduced for environments from version 9 onward.

VCF is sold as a single product; the included components and capabilities can only be utilized on, or for the same physical Cores where the vSphere in VCF Core license is deployed.

Organizations that use VCF are therefore obliged to generate and provide compliance reports regularly: initially after 180 days, and at recurring intervals thereafter.
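To make the reporting rhythm tangible, here is a minimal Python sketch that derives report due dates from a deployment date. Only the initial 180-day period comes from the terms summarized above. The function name, the `recurring_days` parameter, and the sample dates are assumptions for illustration, since the length of the recurring interval is not stated here.

```python
from datetime import date, timedelta


def compliance_report_dates(deployed_on, recurring_days, count, initial_days=180):
    """Return the first `count` report due dates (hypothetical helper).

    initial_days=180 reflects the initial period named in the text;
    recurring_days is an assumption the caller has to supply.
    """
    first_due = deployed_on + timedelta(days=initial_days)
    return [first_due + timedelta(days=recurring_days * i) for i in range(count)]


if __name__ == "__main__":
    # Sample deployment date and a 180-day recurring interval, both assumed.
    for due in compliance_report_dates(date(2027, 10, 1), recurring_days=180, count=3):
        print(due.isoformat())
```

Keeping the recurring interval as an explicit parameter means the sketch makes no claim about Broadcom's actual schedule. The value should be taken from the applicable license terms.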

Compliance reporting is handled through VCF Operations. This component thus becomes a de facto prerequisite for compliant operation, and so, in turn, does VCF 9. Without the corresponding integration, adherence to the requirements can no longer be fully ensured.

This creates an additional mechanism that reinforces the use of the complete VCF stack.

Combined with licensing models, architecture requirements, and integrated operational functions, a consistent pattern emerges. The path into the target model is not merely recommended but increasingly secured structurally.

Source: https://ftpdocs.broadcom.com/cadocs/0/contentimages/VCF_SPD_July2025.pdf

What actually gets installed with VCF 9

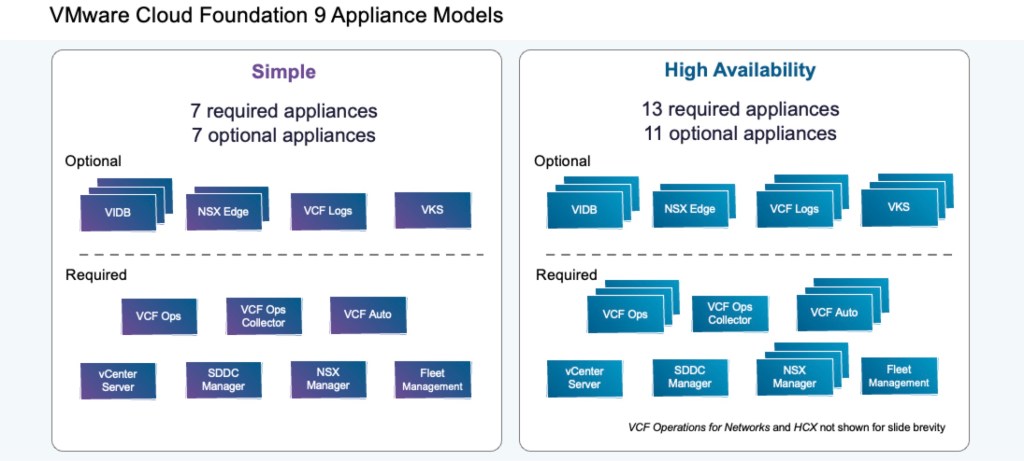

Deploying a VCF environment installs more than a virtualization platform (the ESX hypervisor). It provisions a complete, integrated stack of infrastructure, network, and operations components.

Specifically, a standard installation comprises several central building blocks:

- vSphere (ESX & vCenter) as the compute and management layer

- NSX for networking and security

- vSAN or alternative storage integrations

- SDDC Manager and Fleet Management

- VCF Operations and VCF Automation as the central operations and control layer

The individual components can no longer be operated sensibly on their own. They become one coherent system that delivers its full functionality only in the overall model.

Source: https://blogs.vmware.com/cloud-foundation/2025/07/03/vcf-9-0-deployment-pathways

New requirements for architecture and operations

This change does not stop at the technical level. It directly affects the people who plan and operate these platforms.

Architects and operations teams have to familiarize themselves with a significantly broader and more complex system. While many organizations have so far focused heavily on the hypervisor and classic virtualization components, additional layers now come into play that are a mandatory part of the operating model.

Organizations have to build new skills, adapt processes, and develop a deeper understanding of how the individual components interact.

The focus shifts away from operating individual technologies toward operating an integrated system. Decisions in one area immediately affect other areas. Architecture, operations, and automation are more tightly linked than ever before.

This development is not unusual. It matches the general trend toward platformization. But it has a clear consequence, and one needs to be aware of it.

Information deficit

What aggravates the situation further is a structural information deficit. Many customers, and many partners too, are not yet aware of the scope of this change. The move toward an integrated, enforced platform model is often perceived as a gradual evolution, not as a fundamental architectural break.

In practice, this means that a large part of the market is currently in a phase of apparent stability while a structural change is being prepared that will take full effect in about 18 months.

Sovereignty at risk on a broad scale

By then, many organizations will be forced to transform or realign their existing environments. Support cycles expire, technological dependencies intensify, and migration to integrated models increasingly becomes a prerequisite for continued operation. What looks like a temporary stabilization today is in reality the phase before a structural decision.

Note: VMware technology is still excellent. But VMware is no longer “VMware”; it is now Broadcom.

Digital sovereignty is not lost because the technology is bad.

It is lost when decisions lose their reversibility. When architecture, operations, and licensing model are so tightly intertwined that alternatives exist but can hardly be implemented in practice, control shifts permanently.

For the public sector in Switzerland, this means that the starting position changes fundamentally. Many organizations run VMware-based private clouds today, have built up know-how over years, and have aligned their operating models accordingly. The transition to an integrated model such as VCF 9 is therefore not a simple technology step but a strategic decision about future direction.

It is not unrealistic to assume that, from October 2027, a large part of the public IT landscape will no longer meet the central criteria of digital sovereignty.

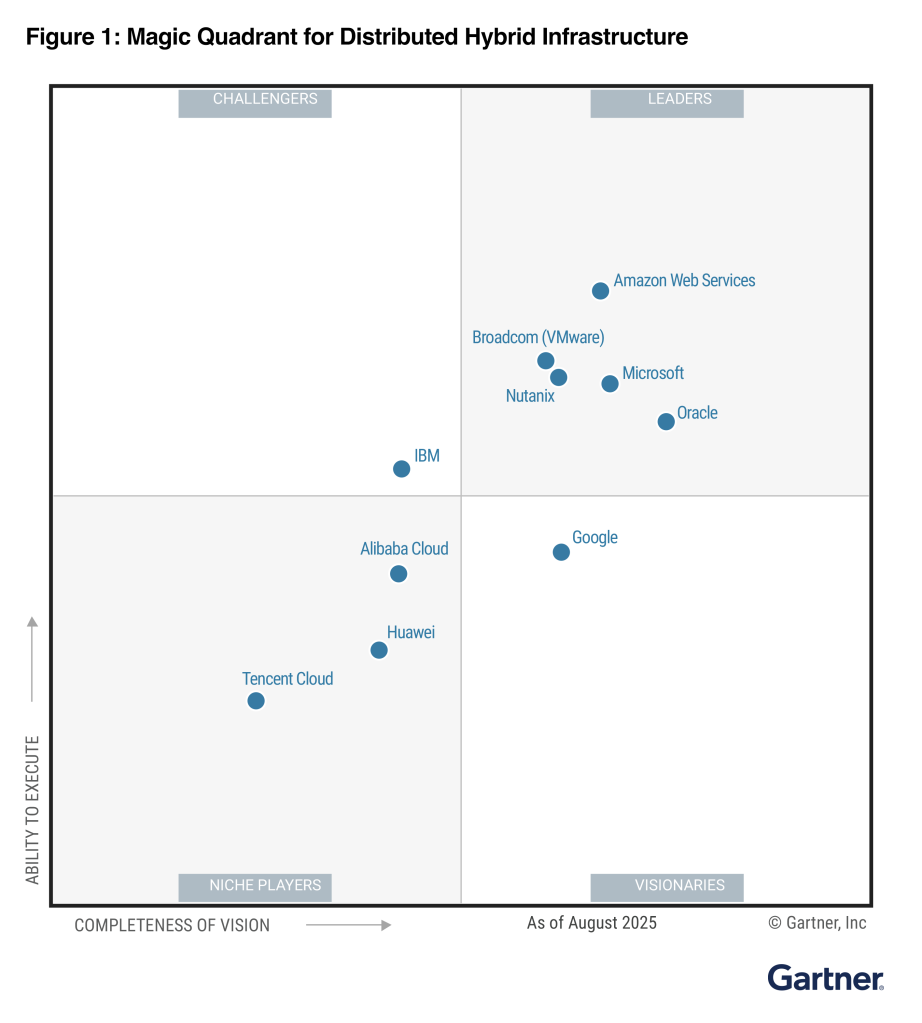

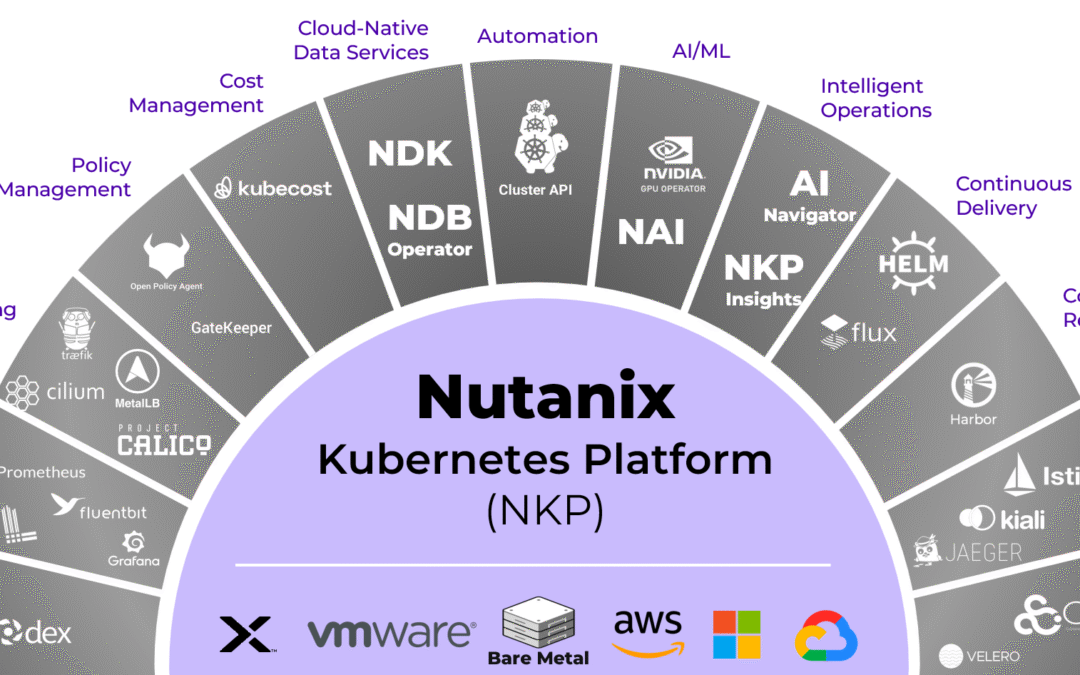

Nutanix as an Alternative: Back to Modularity

In this context, Nutanix is frequently named as an alternative. What is interesting is less its positioning as a “private cloud provider” than the underlying architectural philosophy.

Nutanix, too, offers a complete private cloud platform today. Infrastructure, automation, data services, and modern platform services can be delivered in an integrated way. At first glance this model resembles what VMware Cloud Foundation pursues. The decisive difference, however, lies not in the feature set but in the way it is delivered.

While VMware (by Broadcom) is increasingly moving toward a mandatory, tightly integrated full stack, Nutanix continues to follow a modular approach. Functions can be combined but do not have to be. Organizations can decide which components they actually need and to what extent they use them.

Exactly this property was also a major reason for VMware's success in the period before the Broadcom acquisition.

Nutanix ties in with this principle to a certain degree. The platform can be run as a complete private cloud without becoming a rigid target model. At the same time, it supports different operating models, from the classic data center through service provider environments to hybrid scenarios. What matters is that the operational logic stays consistent. Workloads and operational processes are not bound to a single model but can evolve along with the requirements.

Even so, Nutanix should be assessed as an alternative in a differentiated way. It is also a commercial platform with its own roadmap, its own (broad) ecosystem, and its own dependencies. Digital sovereignty arises from the interplay of technology, governance, skills, and strategic decisions.

A question that has been asked too rarely

The risks of the public cloud have been the subject of intense discussion in the public sector for years. Questions about dependencies, price development, geopolitical influence, and lack of control are now part of the standard assessment of every major cloud decision. In the private cloud space, by contrast, a comparable debate has so far not taken place at all.

Yet a structurally similar development is emerging there.

From a sovereignty perspective, however, a further question arises.

What are the consequences when a large part of the public sector standardizes on a single platform architecture whose operating model, licensing structure, and further development are largely determined by one vendor?

A comparison with the public cloud helps to answer this question.

If a large part of public administration today ran its IT entirely on platforms such as Microsoft Azure, Amazon Web Services, or Google Cloud Platform, and a significant price increase in the range of 50 to 100 percent followed, the reaction would be predictable. The discussion about dependencies, alternatives, and strategic controllability would intensify immediately.

In the private cloud space, a comparable dynamic is already visible but is perceived differently.

While risks in the public cloud were addressed early, the same development in the private cloud space is often still regarded as a purely technological evolution. The underlying dependency, however, is comparable.

- So which measures are being taken today to actively manage this form of dependency?

- Which strategies exist to keep switching options realistic?

- And to what extent are alternatives being evaluated while they can still be implemented with reasonable effort?

These questions can only be answered if customers and partners are actually aware of the underlying changes.

A look at procurement

A look at current tenders on simap.ch paints a clear picture. VMware is deeply embedded in the Swiss public sector. Numerous organizations renewed or further expanded their existing environments in 2024 and 2025. Contract volumes are in the millions and in many cases span several years, often until 2028, 2029, or beyond.

Many of these decisions were made in a phase in which stability, predictability, and operational continuity were the priority. Contract renewals offered short-term security, particularly against the backdrop of changed licensing models and rising costs. As mentioned above, the intention was presumably to gain planning security, without awareness that a new architecture and a new operating model could be imposed from October 2027.

At the same time, these renewals locked in an existing architecture. The result is not an immediate break but a gradual hardening.

Over several years, ties develop that are increasingly difficult to change, both technically and economically. The room for maneuver formally remains but becomes narrower in practice.

Sources

- Broadcom delivers VMware Cloud Foundation 9 – the release that realizes its private cloud vision

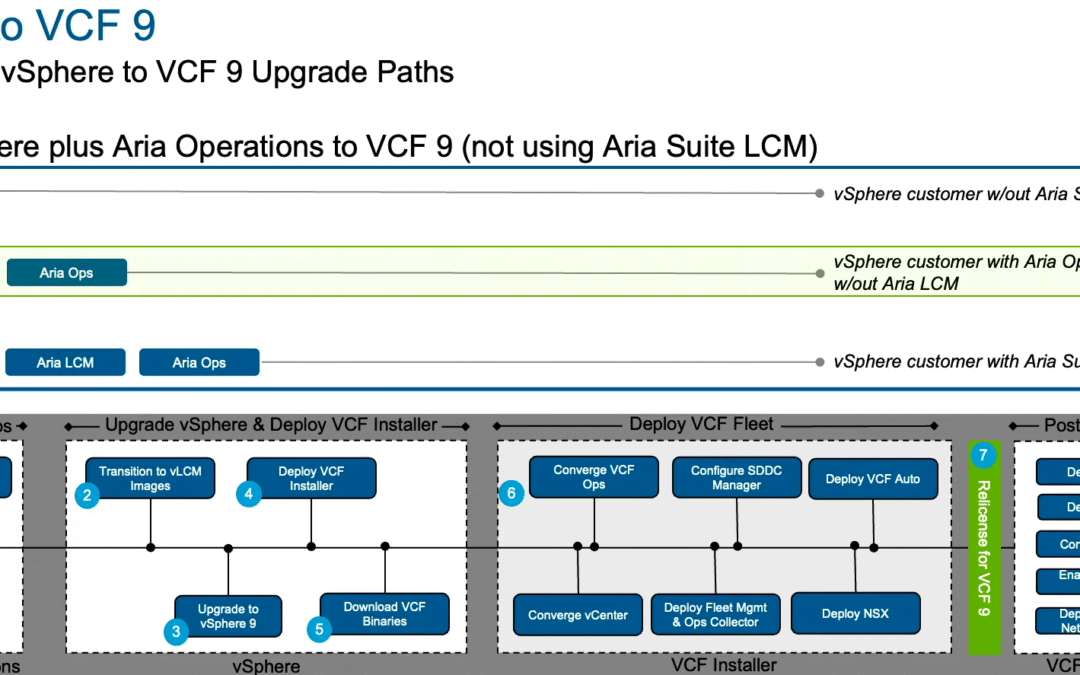

- VMware Cloud Foundation 9.0 – Customer Journey Map – Build – Convert existing vSphere to VCF 9 with new VCF Operations

- Half of VMware users plan to reduce usage by 2028

- VMware to lose 35 percent of workloads in three years – some to its friends at ‘proper clouds’

- Broadcom set to remove VMware partner tier in EMEA as market challenges persist

- ‘Death sentence’: EU cloud lobby takes Broadcom to Brussels over VMware partner purge

- VMware vSphere 8 end-of-support challenges

- VMware kills vSphere Foundation in parts of EMEA