Nutanix Is Quietly Redrawing the Boundaries of What an Infrastructure Platform Can Be

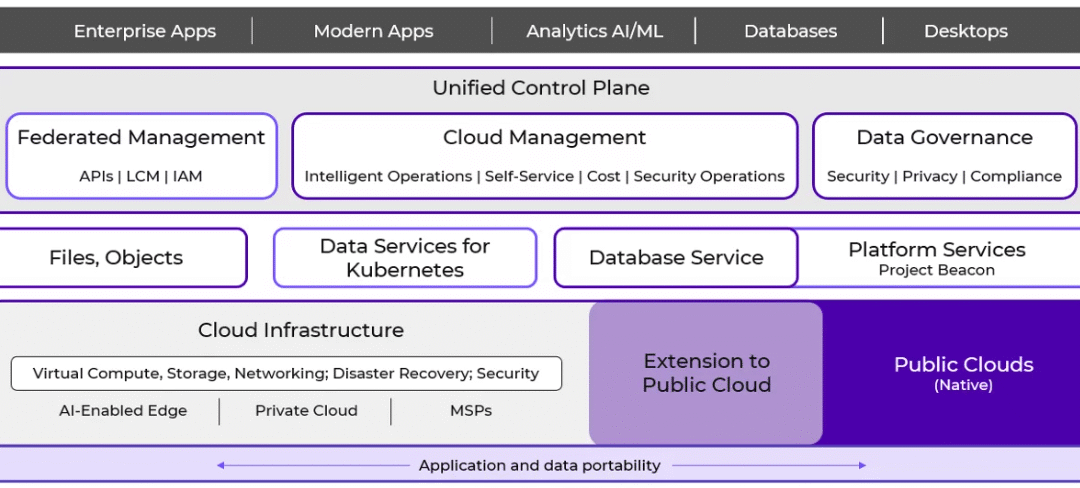

Real change happens when a platform evolves in ways that remove old constraints, open new economic paths, and give IT teams strategic room to maneuver. Nutanix has introduced enhancements that, taken individually, appear to be technical refinements but, observed together, represent something...

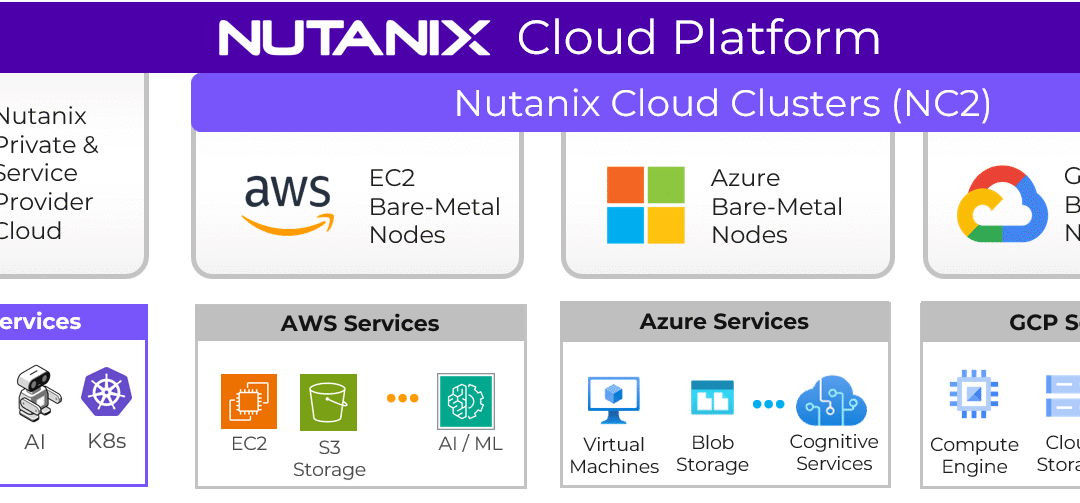

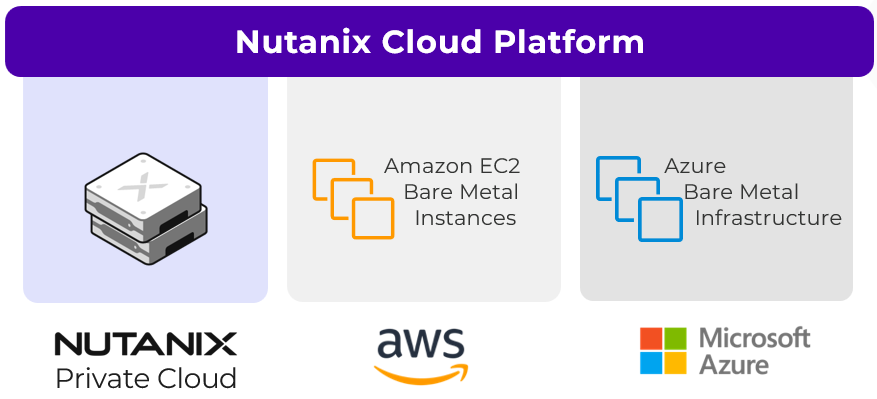

A Primer on Nutanix Cloud Clusters (NC2)

If you strip cloud strategy down to its essentials, you quickly notice that IT leaders are protecting three things: continuity, autonomy and freedom of movement. Yet most clouds, private or public, quietly erode at least one of these freedoms. You can gain elasticity but lose...

When “Staying” Becomes a Journey And Why Nutanix Lets You Take Back Control

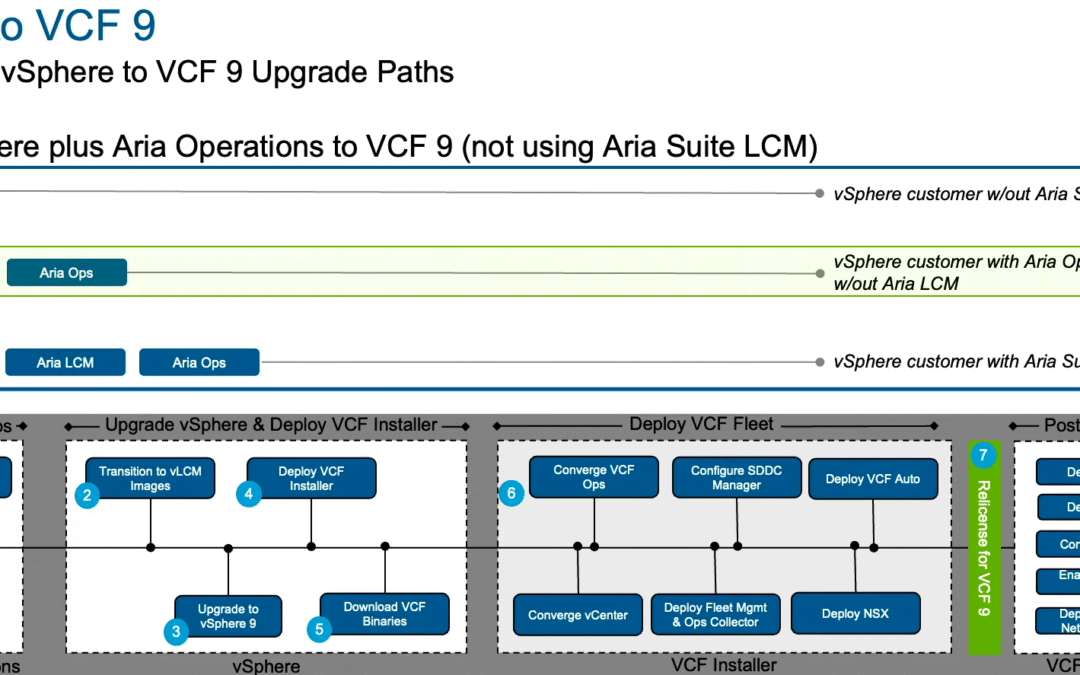

There are moments in IT where the real disruption is not the change you choose, but the change that quietly happens around you. Many VMware customers find themselves in exactly such a moment. On the surface, everything feels familiar. The same hypervisor, the same vendors, the same vocabulary. But...

Moving away from VMware to Nutanix makes sense when…

You shouldn't be asking "Which platform has the longest feature list?" but "What outcome justifies the cost of moving from one private cloud stack to another?" This is precisely where many VMware by Broadcom customers find themselves today. While there are still many loyal VMware customers, there...

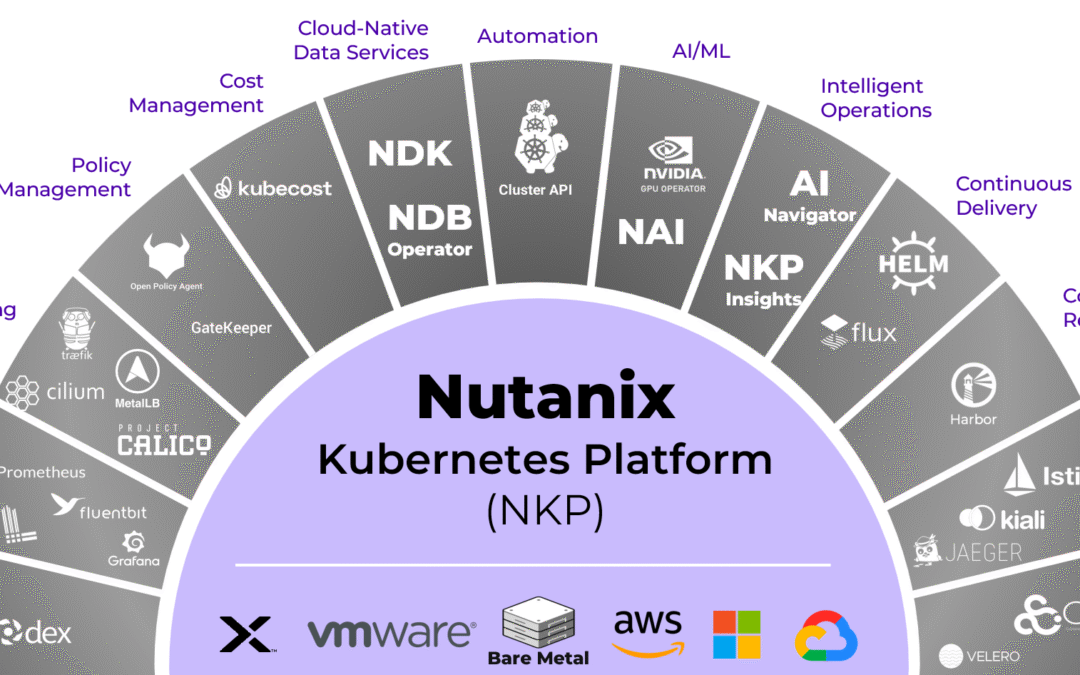

Open source gives you freedom. Nutanix makes that freedom actually usable.

Every organisation that wants to modernise its infrastructure eventually arrives at the same question: How open should my cloud be? Not open as in "free and uncontrolled", but open as in transparent, portable, verifiable. Open as in "I want to reduce my dependencies, regain autonomy and shape my...

VMware by Broadcom – The Standard of Independence Has Become a Structure of Dependency

There comes a point in every IT strategy where doing nothing becomes the most expensive choice. Many VMware by Broadcom customers know this moment well: they sense that Broadcom's direction isn't theirs, yet they still hesitate to move. The truth is, the real risk isn't in changing platforms but...

Why I Left Oracle and Joined Nutanix

There are moments in a career when you stop and realise that the path beneath your feet is no longer the path you set out to walk. Sometimes the change is subtle, almost invisible, and other times it becomes impossible to ignore. For me, this moment arrived somewhere between large public sector...

Why the Sovereign AI Platform from Nutanix Ends the DIY Illusion

AI has moved into every boardroom conversation. However, meaningful results don't come from building everything from scratch. For enterprises and public organizations, sovereignty has become the real test of digital trust, and platforms like NCP, NKP, and NAI give an answer where others struggle....