Application Modernization and Multi-Cloud Portability with VMware Tanzu

It was 2019 when VMware announced Tanzu and Project Pacific. A lot has happened since then, and almost everyone is talking about application modernization nowadays. With my strong IT infrastructure background, I had to learn a lot of new things to survive initial conversations with application owners, developers and software architects. At the same time, VMware’s Kubernetes offering grew and became very complex – not only for customers, but for everyone, I believe. 🙂

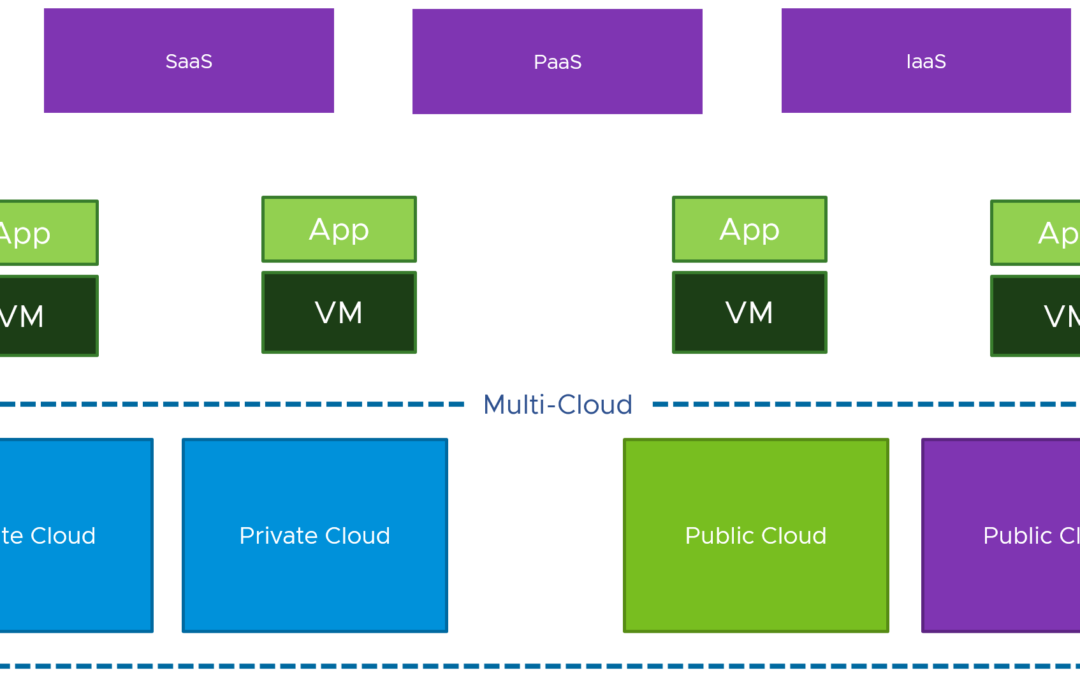

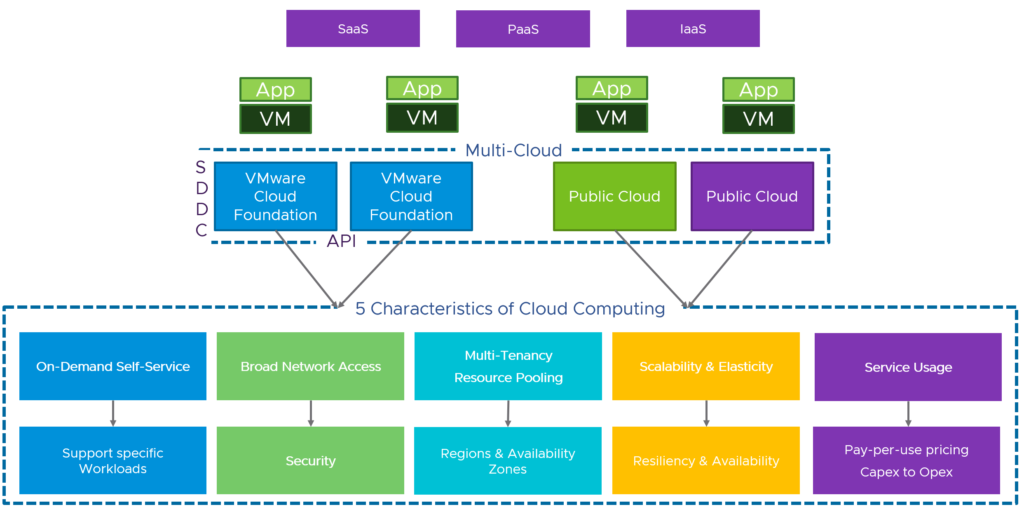

I already wrote about VMware’s vision with Tanzu: to put a consistent “Kubernetes grid” over any cloud.

This is the simple message and value hidden behind the much larger topics when discussing application modernization and application/data portability across clouds.

The goal of this article is to give you a better understanding of the real value of VMware Tanzu and to explain that it’s less about Kubernetes and the Kubernetes integration with vSphere.

Application Modernization

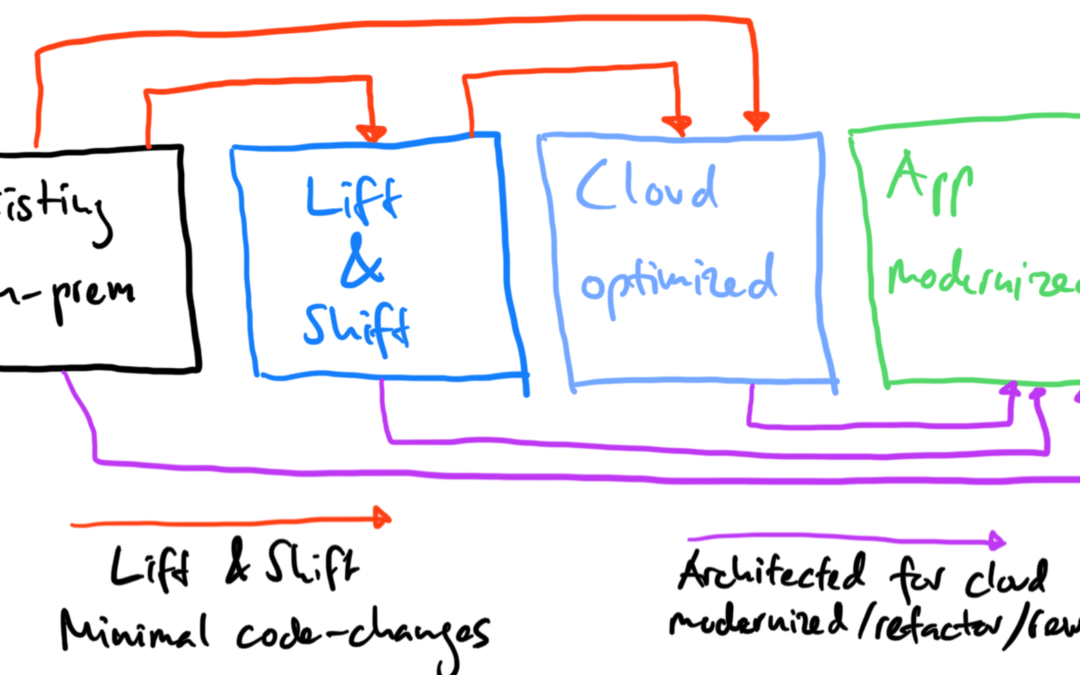

Before we can talk about the modernization of applications or the different migration approaches like:

- Retain – Optimize and retain existing apps, as-is

- Rehost/Migration (lift & shift) – Move an application to the public cloud without making any changes

- Replatform (lift and reshape) – Put apps in containers and run in Kubernetes. Move apps to the public cloud

- Rebuild and Refactor – Rewrite apps using cloud native technologies

- Retire – Retire traditional apps and convert to new SaaS apps

…we need to have a look at the palette of our applications:

- Web Apps – Apache Tomcat, Nginx, Java

- SQL Databases – MySQL, Oracle DB, PostgreSQL

- NoSQL Databases – MongoDB, Cassandra, Prometheus, Couchbase, Redis

- Big Data – Splunk, Elasticsearch, ELK stack, Greenplum, Kafka, Hadoop

In an app modernization discussion, we very quickly start to classify applications as microservices or monoliths. From an infrastructure point of view you look at apps differently and call them “stateless” (web apps) or “stateful” (SQL, NoSQL, Big Data) apps.
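To make the infrastructure view a bit more tangible, here is a minimal Python sketch that labels the application categories from the palette above as stateless or stateful. The mapping is just the rule of thumb from this article, and the names `StateKind`, `classify` and `group_by_state` are made up for illustration.

```python
from __future__ import annotations

from enum import Enum


class StateKind(Enum):
    STATELESS = "stateless"
    STATEFUL = "stateful"


# Rule of thumb from the article: web apps are treated as stateless,
# databases and big data platforms as stateful. Not a formal standard.
CATEGORY_STATE = {
    "web app": StateKind.STATELESS,
    "sql database": StateKind.STATEFUL,
    "nosql database": StateKind.STATEFUL,
    "big data": StateKind.STATEFUL,
}


def classify(category: str) -> StateKind:
    """Return the stateless/stateful label for an application category."""
    key = category.strip().lower()
    if key not in CATEGORY_STATE:
        raise ValueError(f"unknown application category: {category!r}")
    return CATEGORY_STATE[key]


def group_by_state(inventory: dict[str, str]) -> dict[StateKind, list[str]]:
    """Group an inventory of {app name: category} by state kind."""
    groups: dict[StateKind, list[str]] = {kind: [] for kind in StateKind}
    for app, category in inventory.items():
        groups[classify(category)].append(app)
    return groups
```

Calling `group_by_state({"Nginx": "Web App", "PostgreSQL": "SQL Database"})` puts Nginx in the stateless group and PostgreSQL in the stateful one. In a real assessment, the stateful group is usually where the storage and data protection questions start.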

And with Kubernetes we are trying to overcome the challenges that stateful applications bring to app modernization:

- What does modernization really mean?

- How do I define “modernization”?

- What is the benefit of modernizing applications?

- What are the tools? What are my options?

What has changed? Why is everyone talking about modernization? Why are we talking so much about Kubernetes and cloud native? Why now?

To understand the benefits (and challenges) of app modernization, we can start with IBM’s definition of application modernization:

“Application modernization is the process of taking existing legacy applications and modernizing their platform infrastructure, internal architecture, and/or features. Much of the discussion around application modernization today is focused on monolithic, on-premises applications—typically updated and maintained using waterfall development processes—and how those applications can be brought into cloud architecture and release patterns, namely microservices“

Modern applications are collections of microservices, which are light, fault tolerant and small. Microservices can run in containers deployed on a private or public cloud.

This means that a modern application is something that can adapt to any environment and perform equally well.

Note: App modernization can also mean that you must move your application from .NET Framework to .NET Core.

I have a customer that is just getting started with the app modernization topic and has hundreds of Windows applications based on the .NET Framework. Porting an existing .NET app to .NET Core requires some work, but is the general recommendation for the future. This would also give you the option to run your .NET Core apps on Windows, Linux and macOS (and not only on Windows).

A modern application is something that can run on bare-metal, VMs, public cloud and containers, and that easily integrates with any component of your infrastructure. It must be elastic: something that can grow and shrink depending on the load and usage. Since it needs to be able to adapt, it must be agile and therefore portable.
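One way to picture “grow and shrink depending on the load” is the proportional scaling rule described in the Kubernetes Horizontal Pod Autoscaler documentation: desired replicas = ceil(current replicas × current metric / target metric). The sketch below only illustrates that rule. The function name, the load unit and the min/max bounds are my own assumptions and not part of any Tanzu product.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_load: float,
    target_load: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Proportional scaling: keep the per-replica load close to the target.

    current_load and target_load use the same unit, for example the
    average CPU utilization per replica in percent.
    """
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    raw = math.ceil(current_replicas * current_load / target_load)
    # Clamp the result to the bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))
```

With four replicas at 90% average load and a 60% target, the rule asks for six replicas. At 20% load it shrinks the same deployment to two.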

Cloud Native Architectures and Modern Designs

If I ask my VMware colleagues from our so-called MAPBU (Modern Application Platform Business Unit) how customers can achieve application portability, the answer is always: “Cloud Native!”

Many organizations and people see cloud native as going to Kubernetes. But cloud native is so much more than the provisioning and orchestration of containers with Kubernetes. It’s about collaboration, DevOps, internal processes and supply chains, observability/self-healing, continuous delivery/deployment and cloud infrastructure.

There are so many definitions around “cloud native” that Kamal Arora from Amazon Web Services and others wrote the book “Cloud Native Architecture”, which describes a maturity model. This model helps you to understand that cloud native is more a journey than a restrictive definition.

The adoption of cloud services and applying an application-centric design are very important, but the book also mentions that security and scalability rely on automation. And this for example could bring the requirement for Infrastructure as Code (IaC).

In the past, virtualization – moving from bare-metal to vSphere – didn’t force organizations to modernize their applications. The application didn’t need to change and VMware abstracted and emulated the bare-metal server. So, the transition (P2V) of an application was very smooth and not complicated.

And this is what has changed today. We have new architectures, new technologies and new clouds running with different technology stacks. We have Kubernetes as a framework, which requires applications to be redesigned for these platforms.

That is the reason why enterprises have to modernize their applications.

One of the “five R’s” mentioned above is the lift and shift approach. If you don’t want or need to modernize some of your applications, but want to move to the public cloud in an easy, fast and cost-efficient way, have a look at VMware’s Hybrid Cloud Extension (HCX).

In this article I focus more on the replatform and refactor approaches in a multi-cloud world.

Kubernetize and productize your applications

Assuming that you also define Kubernetes as the standard for orchestrating the containers your microservices run in, the next decision is usually about the Kubernetes “product” (on-prem, OpenShift, public cloud).

Looking at the current CNCF Cloud Native Landscape, we can count over 50 storage vendors and over 20 network vendors providing cloud native storage and networking solutions for containers and Kubernetes.

Talking to my customers, most of them mention the storage and network integration as one of their big challenges with Kubernetes. Their concern is about performance, resiliency, different storage and network patterns, automation, data protection/replication, scalability and cloud portability.

Why do organizations need portability?

There are many use cases and requirements for which portability (infrastructure independence) becomes relevant. Maybe it’s about a hardware refresh or data center evacuation, avoiding vendor/cloud lock-in, insufficient performance with the current infrastructure, or dev/test environments where resources are deployed and consumed on demand.

Multi-Cloud Application Portability with VMware Tanzu

To explore the value of Tanzu, I would like to start by setting the scene with the following customer use case:

- On-premises: VMware vSphere infrastructure, no containerization yet, only legacy applications

- Azure: Using Azure Kubernetes Service (AKS) and Azure Native Services

- AWS: Using Amazon Elastic Kubernetes Service (EKS) and AWS Native Services

In this case the customer is following a cloud-appropriate approach to define which cloud is the right landing zone for their applications. They decided to develop new applications in the public cloud and use the native services from Azure and AWS. The customer still has hundreds of legacy applications (monoliths) on-premises and has not yet decided whether they want to follow a “lift and shift and then modernize” approach to migrate a number of applications to the public cloud.

But some of their application owners have already given the feedback that their applications are not allowed to be hosted in the public cloud, have to stay on-premises and need to be modernized locally.

At the same time, the IT architecture team receives feedback from other application owners that the journey to the public cloud is great on paper, but brings huge operational challenges with it. So, IT operations asks the architecture team if they can do something about that problem.

The cloud operations teams for Azure and AWS deliver different levels of service quality. Changes and deployments take longer with one of the public clouds, and the teams have problems with overlapping networks, different storage performance characteristics and different APIs.

Another challenge is the role-based access to the different clouds, Kubernetes clusters and APIs. There is no central log aggregation and no observability (intelligent monitoring & alerting). Traffic distribution and load balancing are further items on this list.

Because of the feedback from operations to architecture, IT engineering received the task to define a multi-cloud strategy that solves this operational complexity.

Note: These are the regular multi-cloud challenges, where clouds are the new silos and enterprises have different teams with different expertise using different management and security tools.

This is the time when VMware’s multi-cloud approach with Tanzu becomes very interesting for such customers.

Consistent Infrastructure and Management

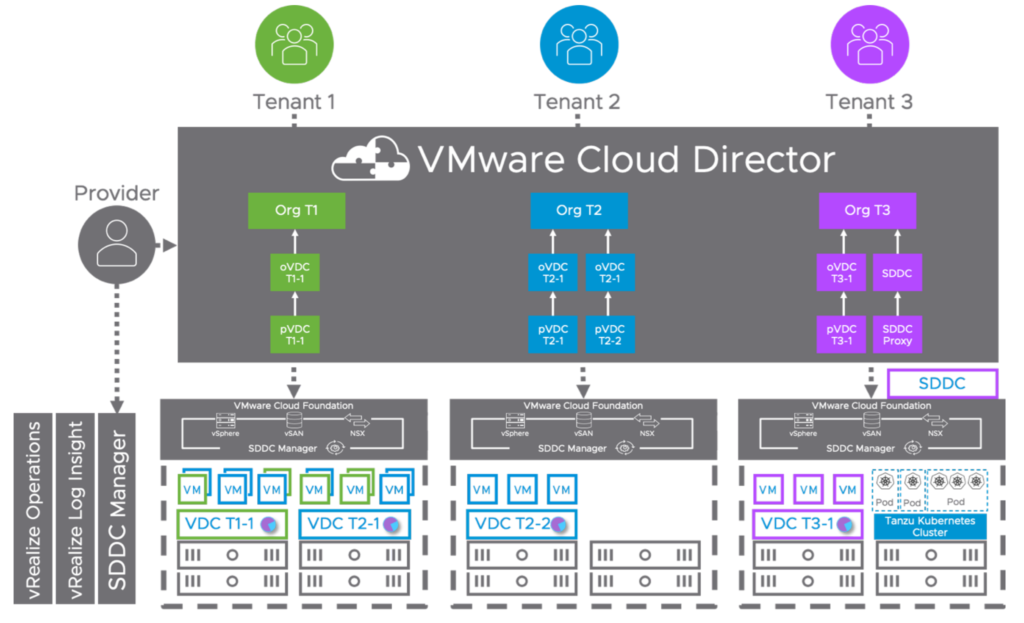

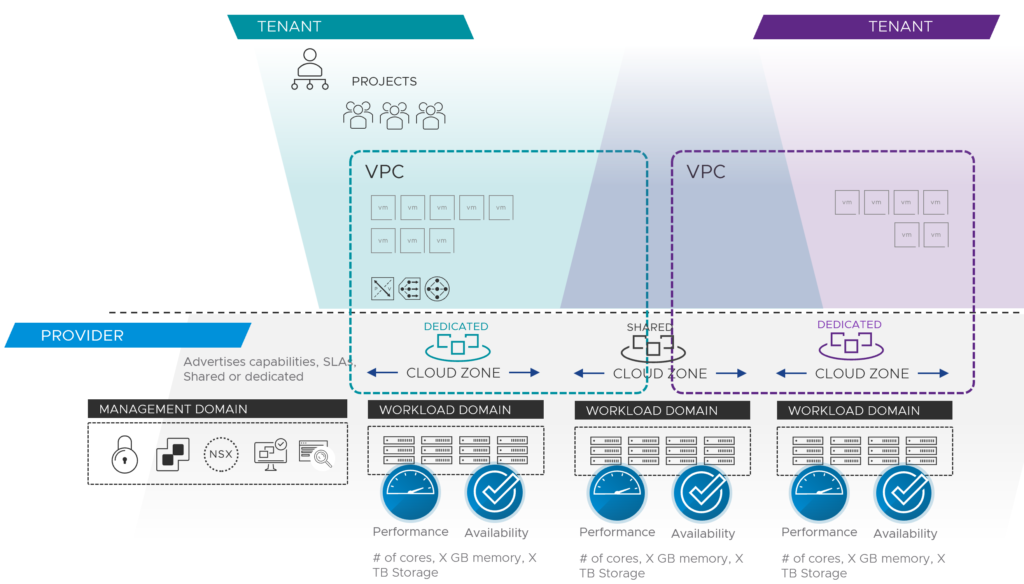

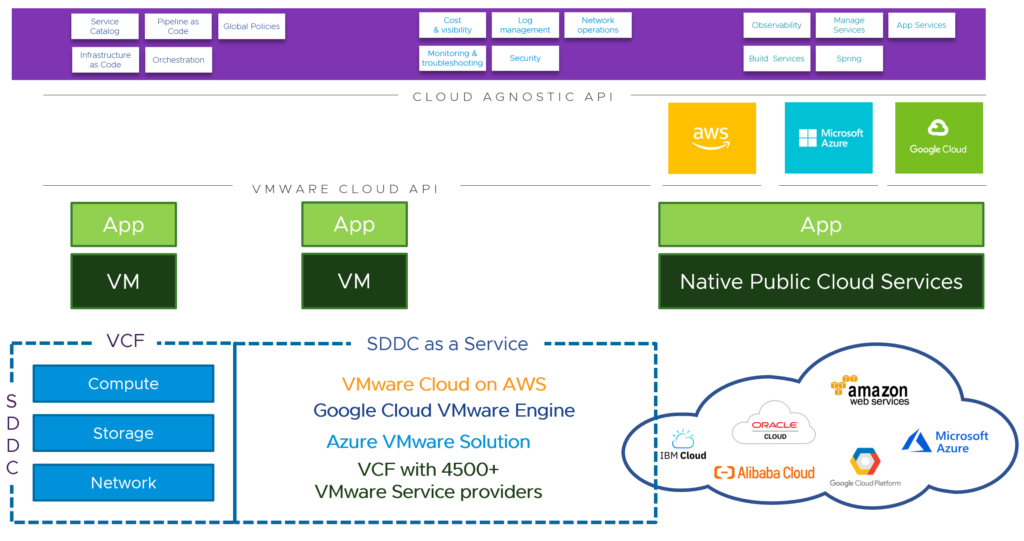

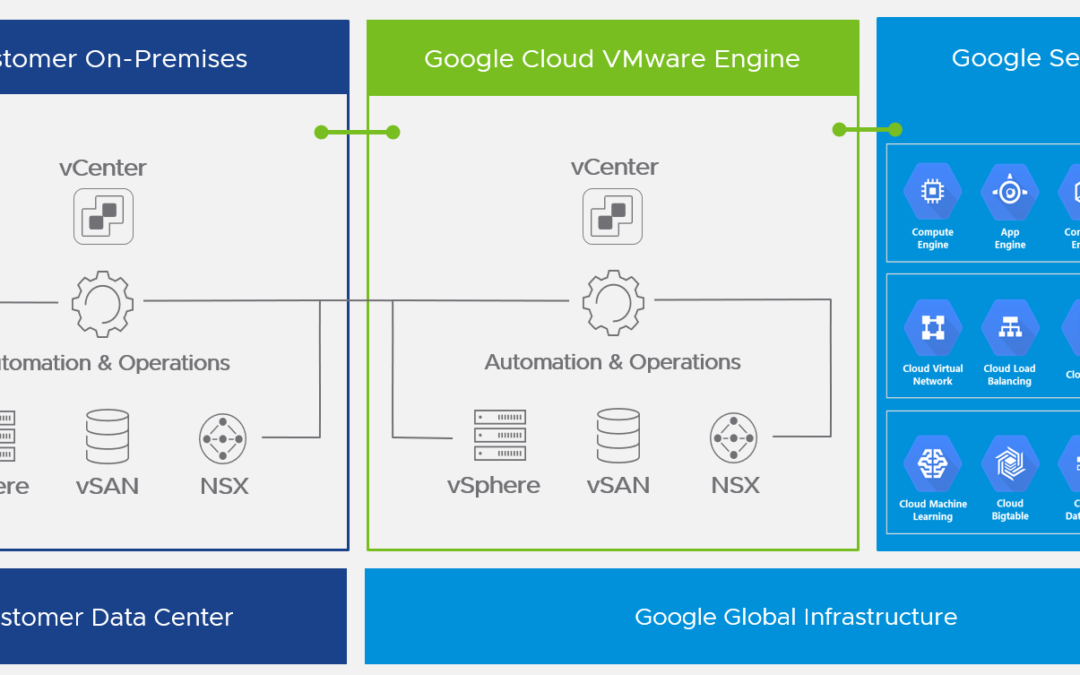

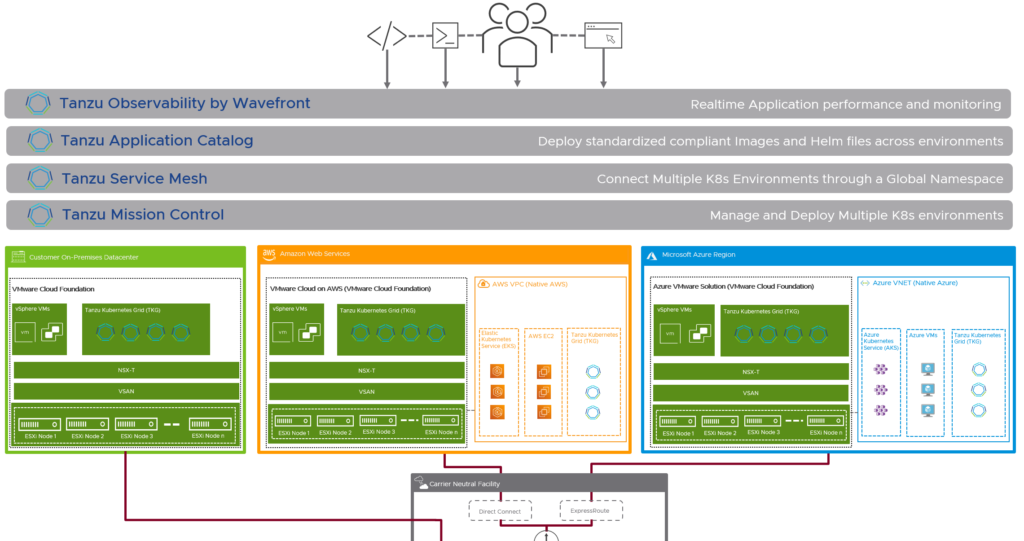

The first discussion point here would be the infrastructure. It’s important that the different private and public clouds are not handled and seen as silos. VMware’s approach is to connect all the clouds with the same underlying technology stack based on VMware Cloud Foundation.

Besides the fact that lift and shift migrations would be very easy now, this approach brings two very important advantages for the containerized workloads and the cloud infrastructure in general. It solves the challenge of the huge storage and networking ecosystem available for Kubernetes workloads by using vSAN and NSX Data Center in any of the existing clouds. Storage, networking and security are now integrated and consistent.

For existing workloads running natively in public clouds, customers can use NSX Cloud, which uses the same management plane and control plane as NSX Data Center. That’s another major step forward.

Using consistent infrastructure gives customers consistent operations and automation.

Consistent Application Platform and Developer Experience

Looking at an organization’s application and container platforms, achieving consistent infrastructure is not required, but obviously very helpful in terms of operational and cost efficiency.

To provide a consistent developer experience and to abstract the underlying application or Kubernetes platform, you would follow the same VMware approach as always: to put a layer on top.

Here the solution is called Tanzu Kubernetes Grid (TKG), which provides a consistent, upstream-compatible implementation of Kubernetes that is tested, signed and supported by VMware.

A Tanzu Kubernetes cluster is an opinionated installation of Kubernetes open-source software that is built and supported by VMware. In all the offerings, you provision and use Tanzu Kubernetes clusters in a declarative manner that is familiar to Kubernetes operators and developers. The different Tanzu Kubernetes Grid offerings provision and manage Tanzu Kubernetes clusters on different platforms, in ways that are designed to be as similar as possible, but that are subtly different.
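“Declarative” here means that you describe the cluster you want and let the platform work out the steps to get there. The following Python sketch shows that idea in its simplest form: compare a desired state with the actual state and derive the actions. It is not VMware’s API. `ClusterSpec`, its fields and the `reconcile` function are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ClusterSpec:
    """Desired (or observed) state of a cluster; fields are illustrative."""
    name: str
    kubernetes_version: str
    control_plane_nodes: int
    worker_nodes: int


def reconcile(desired: ClusterSpec, actual: Optional[ClusterSpec]) -> List[str]:
    """Return the actions that move the actual state to the desired state."""
    if actual is None:
        return [f"create cluster {desired.name}"]

    actions: List[str] = []
    if actual.kubernetes_version != desired.kubernetes_version:
        actions.append(
            f"upgrade {desired.name} from {actual.kubernetes_version} "
            f"to {desired.kubernetes_version}"
        )
    if actual.control_plane_nodes != desired.control_plane_nodes:
        actions.append(
            f"scale {desired.name} control plane from "
            f"{actual.control_plane_nodes} to {desired.control_plane_nodes}"
        )
    if actual.worker_nodes != desired.worker_nodes:
        actions.append(
            f"scale {desired.name} workers from "
            f"{actual.worker_nodes} to {desired.worker_nodes}"
        )
    return actions
```

The operator only ever edits the desired `ClusterSpec`. An empty action list means the cluster already matches the description, which is why the same spec can be applied again and again without side effects.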

VMware Tanzu Kubernetes Grid (TKG aka TKGm)

Tanzu Kubernetes Grid can be deployed across software-defined datacenters (SDDC) and public cloud environments, including vSphere, Microsoft Azure, and Amazon EC2. I would assume that Google Cloud is a roadmap item.

TKG allows you to run Kubernetes with consistency and makes it available to your developers as a utility, just like the electricity grid. TKG provides the services such as networking, authentication, ingress control, and logging that a production Kubernetes environment requires.

This TKG version is also known as TKGm for “TKG multi-cloud”.

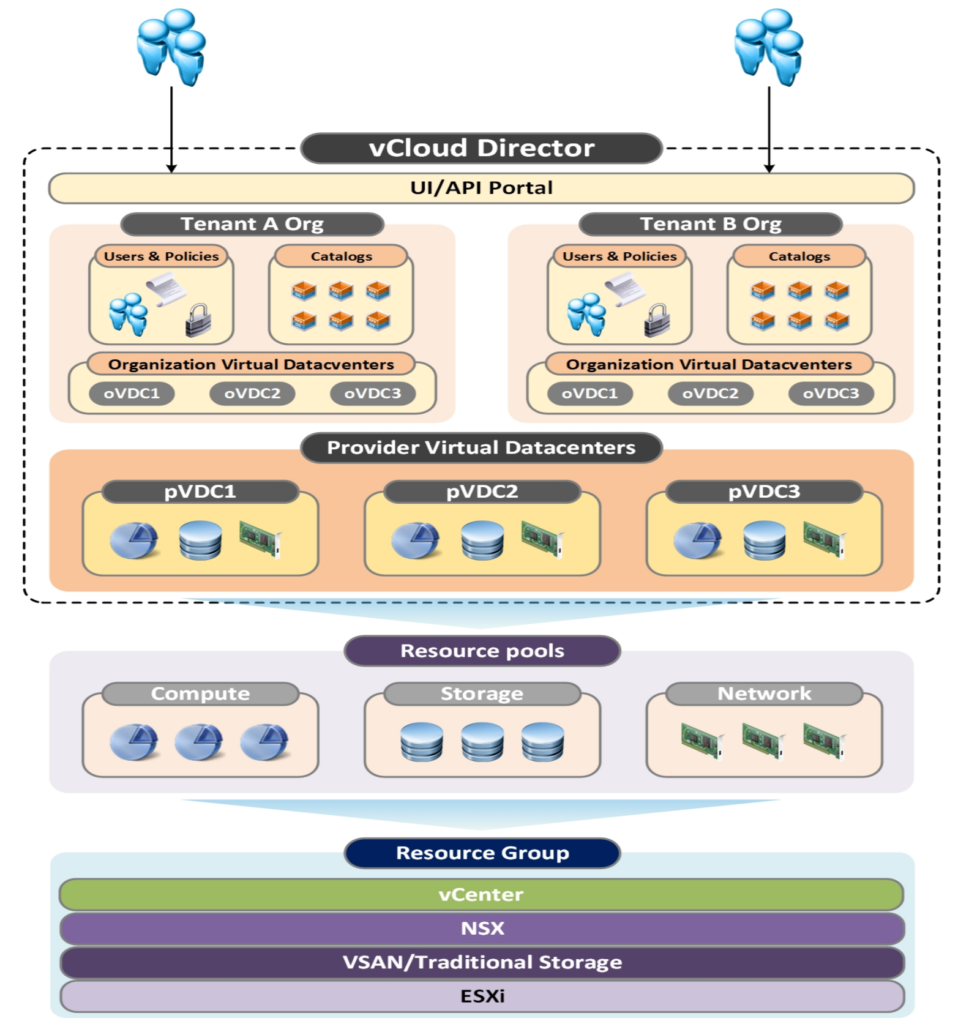

VMware Tanzu Kubernetes Grid Service (TKGS aka vSphere with Tanzu)

TKGS is the option vSphere admins want to hear about first, because it allows you to turn a vSphere cluster into a platform running Kubernetes workloads in dedicated resource pools. TKGS is what was known as “Project Pacific” in the past.

Once enabled on a vSphere cluster, vSphere with Tanzu creates a Kubernetes control plane directly in the hypervisor layer. You can then run Kubernetes containers by deploying vSphere Pods, or you can create upstream Kubernetes clusters through the VMware Tanzu Kubernetes Grid Service and run your applications inside these clusters.

VMware Tanzu Mission Control (TMC)

In the use case described above, we have AKS and EKS for running Kubernetes clusters in the public cloud.

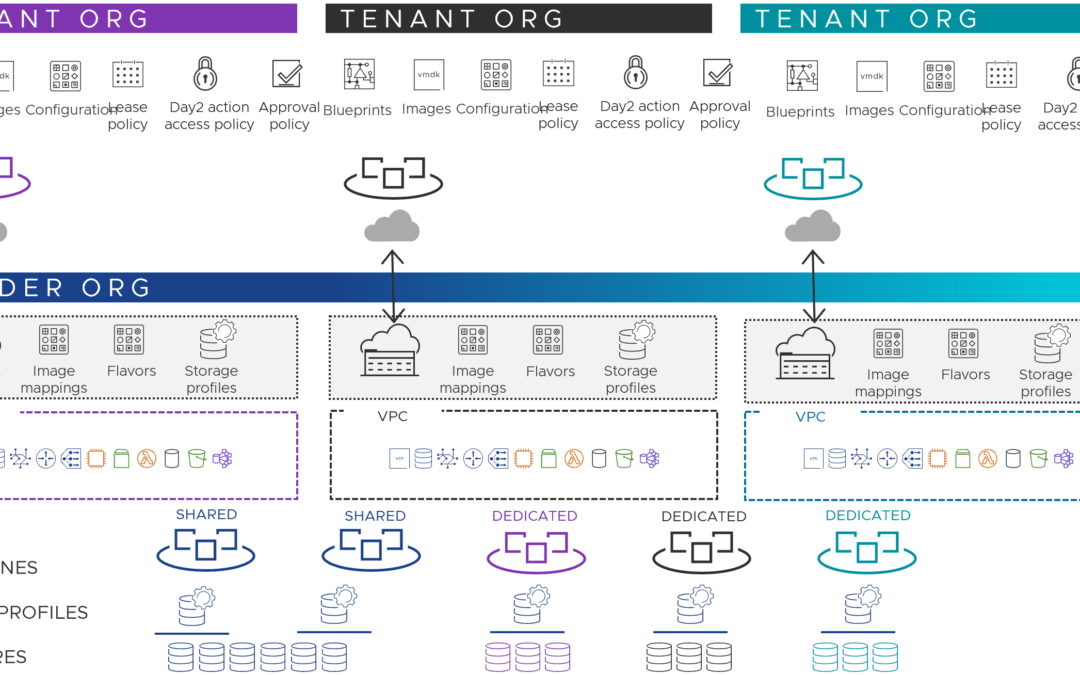

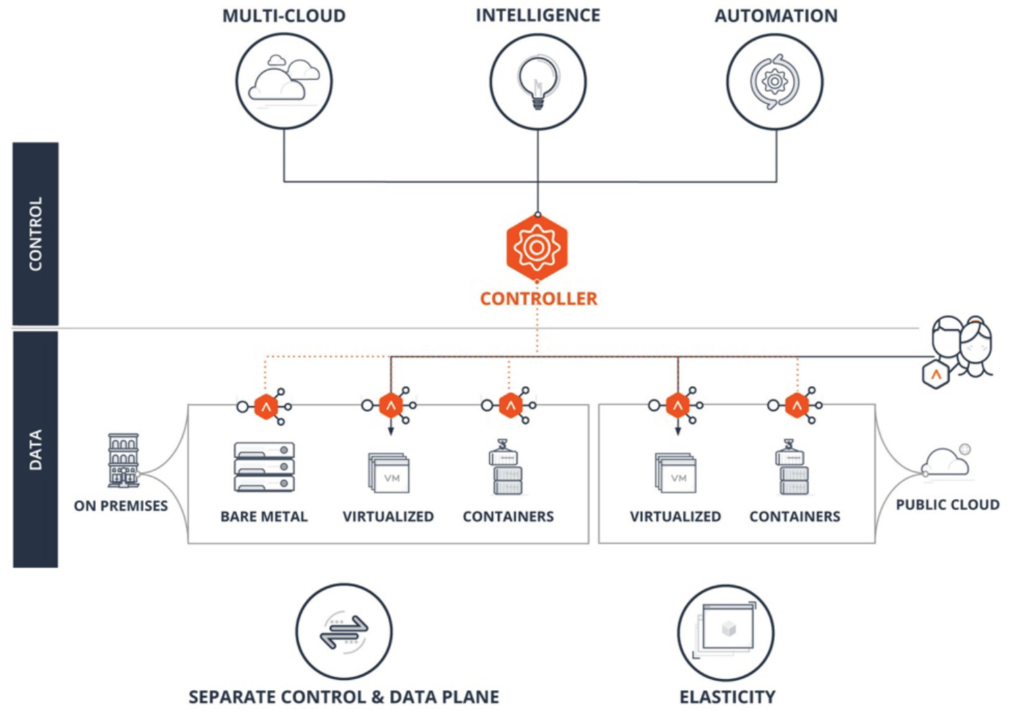

The VMware solution for multi-cluster Kubernetes management across clouds is called Tanzu Mission Control, which is a centralized management platform for the consistency and security the IT engineering team was looking for.

Available through VMware Cloud Services as a SaaS offering, TMC provides IT operators with a single control point to provide their developers self-service access to Kubernetes clusters.

TMC also provides cluster lifecycle management for TKG clusters across environments such as vSphere, AWS and Azure.

It allows you to bring the clusters you already have in the public clouds or other environments (with Rancher or OpenShift for example) under one roof via the attachment of conformant Kubernetes clusters.

Not only do you gain global visibility across clusters, teams and clouds, but you also get centralized authentication and authorization, consistent policy management and data protection functionalities.

VMware Tanzu Observability by Wavefront (TO)

Tanzu Observability extends the basic observability provided by TMC with enterprise-grade observability and analytics.

Wavefront by VMware helps Tanzu operators, DevOps teams, and developers get metrics-driven insights into the real-time performance of their custom code, Tanzu platform and its underlying components. Wavefront proactively detects and alerts on production issues and improves agility in code releases.

TO is also a SaaS-based platform that can handle the high-scale requirements of cloud native applications.

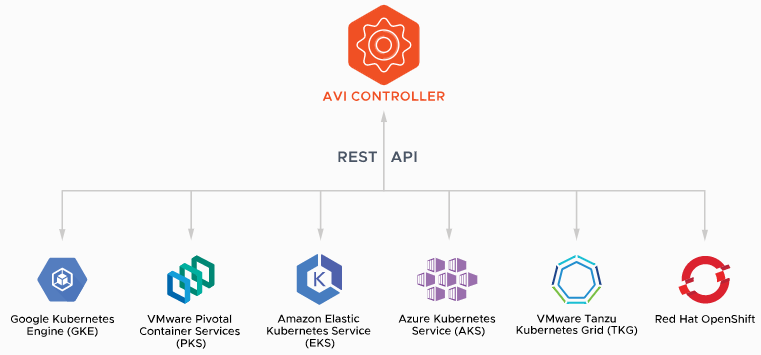

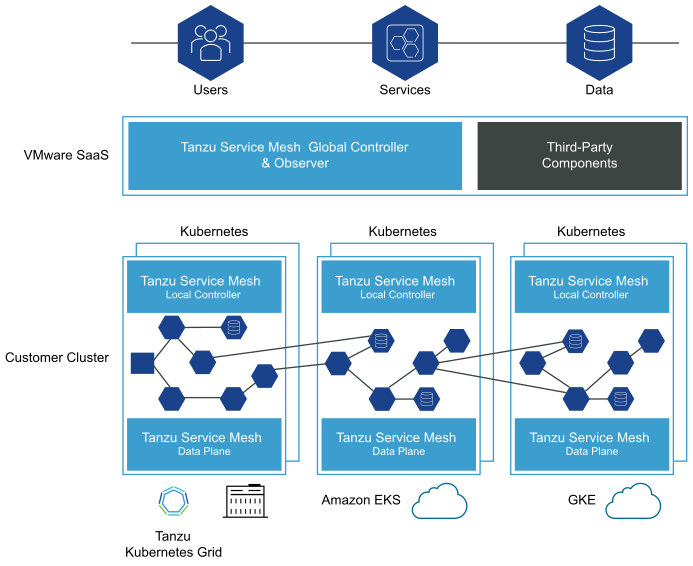

VMware Tanzu Service Mesh (TSM)

Tanzu Service Mesh, formerly known as NSX Service Mesh, provides consistent connectivity and security for microservices across all clouds and Kubernetes clusters. TSM can be installed in TKG clusters and third-party Kubernetes-conformant clusters.

Organizations that are using or looking at the popular Calico cloud native networking option for their Kubernetes ecosystem often consider an integration with Istio (Service Mesh) to connect services and to secure the communication between these services.

The combination of Calico and Istio can be replaced by TSM, which is built on VMware NSX for networking and uses an Istio data plane abstraction. This version of Istio is signed and supported by VMware and is the same as the upstream version. TSM brings enterprise-grade support for Istio and a simplified installation process.

One of the primary constructs of Tanzu Service Mesh is the concept of a Global Namespace (GNS). GNS allows developers using Tanzu Service Mesh, regardless of where they are, to connect application services without having to specify (or even know) any underlying infrastructure details, as all of that is done automatically. With the power of this abstraction, your application microservices can “live” anywhere, in any cloud, allowing you to make placement decisions based on application and organizational requirements—not infrastructure constraints.
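To illustrate the abstraction (and only the abstraction), here is a toy Python model of a global namespace: one logical service name that resolves to endpoints in whichever clusters currently run the service. This is not how Tanzu Service Mesh is implemented or configured. The class, the domain name, the cluster names and the addresses are all invented for this sketch.

```python
from collections import defaultdict
from typing import List, Tuple


class GlobalNamespace:
    """Toy model: one logical service name, endpoints in many clusters."""

    def __init__(self, domain: str) -> None:
        self.domain = domain
        # service name -> list of (cluster, address) pairs
        self._endpoints = defaultdict(list)

    def fqdn(self, service: str) -> str:
        """Name that callers use, independent of any cluster or cloud."""
        return f"{service}.{self.domain}"

    def register(self, service: str, cluster: str, address: str) -> None:
        """Record that a service instance runs in the given cluster."""
        self._endpoints[service].append((cluster, address))

    def resolve(self, name: str) -> List[Tuple[str, str]]:
        """Return all known endpoints for a fully qualified service name."""
        suffix = "." + self.domain
        if not name.endswith(suffix):
            raise LookupError(f"{name!r} is not part of {self.domain!r}")
        service = name[: -len(suffix)]
        if service not in self._endpoints:
            raise LookupError(f"no endpoints registered for {name!r}")
        return list(self._endpoints[service])
```

A caller that talks to `cart.shop.example.internal` does not change when the cart service moves from an AKS cluster to an EKS cluster. Only the registrations behind the name change.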

Note: On the 18th of March 2021 VMware announced the acquisition of Mesh7 and the integration of Mesh7’s contextual API behavior security solution with Tanzu Service Mesh to simplify DevSecOps.

Tanzu Editions

The VMware Tanzu portfolio comes in three different editions: Basic, Standard and Advanced.

Tanzu Basic enables the straightforward implementation of Kubernetes in vSphere so that vSphere admins can leverage familiar tools used for managing VMs when managing clusters = TKGS

Tanzu Standard provides multi-cloud support, enabling Kubernetes deployment across on-premises, public cloud, and edge environments. In addition, Tanzu Standard includes a centralized multi-cluster SaaS control plane for a more consistent and efficient operation of clusters across environments = TKGS + TKGm + TMC

Tanzu Advanced builds on Tanzu Standard to simplify and secure the container lifecycle, enabling teams to accelerate the delivery of modern apps at scale across clouds. It adds a comprehensive global control plane with observability and service mesh, consolidated Kubernetes ingress services, data services, container catalog, and automated container builds = TKG (TKGS & TKGm) + TMC + TO + TSM + MUCH MORE
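The edition summary above can be turned into a small lookup. The Python sketch below picks the smallest edition that covers a set of required components, using only the abbreviations from this article. The component sets mirror my simplified equations (Advanced includes much more than is listed here), and the function name is an assumption for illustration, not a VMware licensing tool.

```python
from __future__ import annotations

# Ordered from smallest to largest; component sets follow the summary in
# this article. Advanced contains more than the five components listed.
EDITIONS = [
    ("Basic", {"TKGS"}),
    ("Standard", {"TKGS", "TKGm", "TMC"}),
    ("Advanced", {"TKGS", "TKGm", "TMC", "TO", "TSM"}),
]


def smallest_edition(required: set[str]) -> str:
    """Return the first edition whose components cover the requirement."""
    for name, components in EDITIONS:
        if required <= components:
            return name
    known = sorted(EDITIONS[-1][1])
    raise ValueError(f"no edition covers {sorted(required)}; known: {known}")
```

For the customer in the use case, who needs multi-cluster management for AKS and EKS, `smallest_edition({"TMC"})` returns `"Standard"`. Adding service mesh to the requirement moves the answer to `"Advanced"`.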

Tanzu Data Services

Another way to reduce dependencies and avoid vendor lock-in is Tanzu Data Services – a separate part of the Tanzu portfolio with on-demand caching (Tanzu GemFire), messaging (Tanzu RabbitMQ) and database software (Tanzu SQL & Tanzu Greenplum) products.

Bringing it all together

As always, I’m trying to summarize and simplify things where needed and I hope it helped you to better understand the value and capabilities of VMware Tanzu.

There are so many more products available in the Tanzu portfolio that help you to build, run, manage, connect and protect your applications. If you are interested in reading more about VMware Tanzu, have a look at my article 10 Things You Didn’t Know About VMware Tanzu.

If you would like to know more about application and cloud transformation, make sure to attend the 45-minute VMware event on March 31 (Americas) or April 1 (EMEA/APJ)!