Multi-cloud is a mess. You cannot solve multi-cloud complexity with a single vendor or a single supercloud (or intercloud); it’s just not possible. But different vendors can help you on your multi-cloud journey and make life easier for you and your platform team. The whole world talks about DevOps or DevSecOps, and then there’s the shift-left approach, which puts more responsibility on developers. It seems to me that too often we forget the “ops” part of DevOps. That is why I would like to highlight the need for Tanzu Mission Control (which is part of Tanzu for Kubernetes Operations) and Tanzu Application Platform.

Challenges for Operations

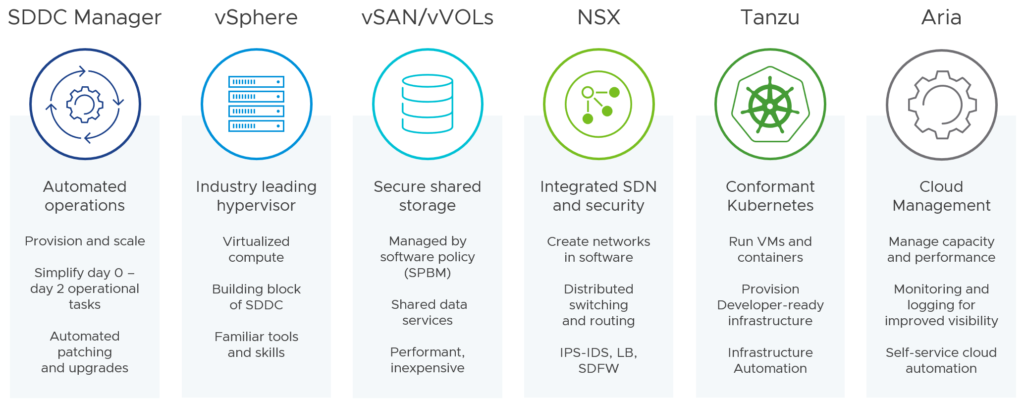

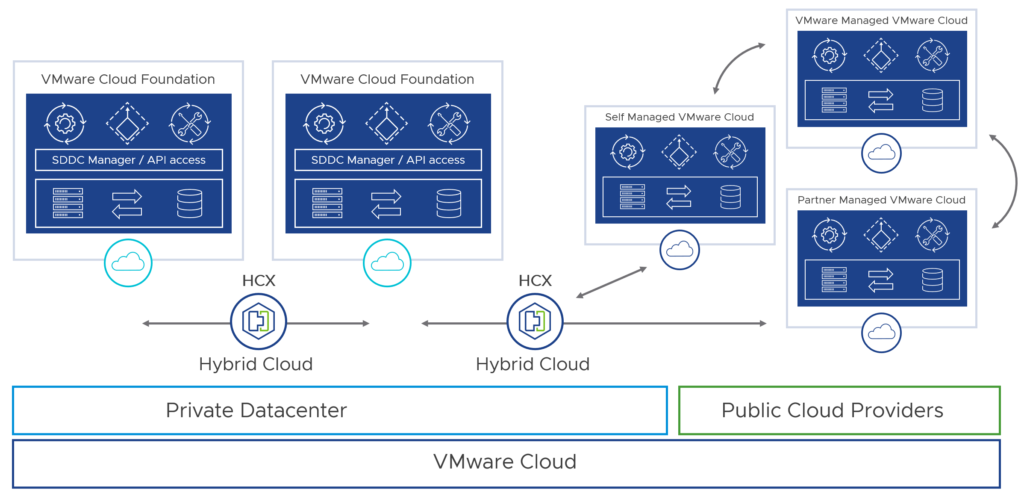

What started with a VMware-based cloud in your data centers has evolved into a very heterogeneous architecture with two or more public clouds such as Amazon Web Services (AWS), Microsoft Azure or Google Cloud Platform. IT analysts tell us that 75% of businesses already use two or more public clouds. Businesses choose their public cloud providers based on workload or application characteristics and each public cloud’s known strengths. Companies want to modernize their legacy applications in the public clouds, because in most cases a simple rehost or migration (lift & shift) doesn’t bring the value or innovation they are aiming for.

A modern application is a collection of microservices, which are small, lightweight and fault tolerant. Microservices can run in containers deployed in a private or public cloud. Many operations and platform teams equate cloud-native with moving to Kubernetes. But cloud-native is so much more than the provisioning and orchestration of containers with Kubernetes. It’s about collaboration, DevOps, internal processes and supply chains, observability/self-healing, continuous delivery/deployment and cloud infrastructures.

Expectation of Kubernetes

In 2015, Google contributed Kubernetes 1.0 as the open source seed technology to the Linux Foundation, which formed the Cloud Native Computing Foundation (CNCF) as a sub-foundation. Founding CNCF members include companies like Google, Red Hat, Intel, Cisco, IBM and VMware.

Currently, the CNCF has over 167k project contributors, around 800 members and more than 130 certified Kubernetes distributions and platforms. Open source projects and the adoption of cloud native technologies are constantly growing.

If you browse the CNCF Cloud Native Interactive Landscape, you get an idea of how many open source projects the CNCF supports and its community maintains. Since Kubernetes was donated to the CNCF, almost every company on this planet has started using Kubernetes, or a distribution of it:

These are just a few of the 63 certified Kubernetes distributions in total. What about the certified hosted Kubernetes service offerings? Here are some of the popular ones:

- Alibaba Cloud Container Service for Kubernetes

- Amazon Elastic Kubernetes Service (EKS)

- Azure Kubernetes Service (AKS)

- Google Kubernetes Engine (GKE)

- Nutanix Karbon

- Oracle Container Engine for Kubernetes

- OVH Managed Kubernetes Service

- Red Hat OpenShift Dedicated

All these clouds and vendors offer Kubernetes implementations, but writing software that performs equally well across all clouds still seems to be a myth. At least we have a common denominator, a consistent layer across all clouds, right? That’s Kubernetes.
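To make the “common denominator” concrete: a workload described with the standard `apps/v1` Deployment API is the same manifest whether it lands on EKS, AKS, GKE or TKG. The minimal sketch below builds such a manifest as a plain Python dict. The app name, image, port and replica count are hypothetical placeholders, and the sketch deliberately says nothing about the cloud-specific load balancers, storage classes or IAM that still differ between providers.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 2) -> dict:
    """Build a minimal apps/v1 Deployment that any conformant cluster accepts.

    The name, image, port and replica count are hypothetical placeholders.
    """
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels of the Pod template below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": 8080}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = deployment_manifest("web-shop", "registry.example.com/web-shop:1.0")
    # kubectl accepts JSON as well as YAML manifests.
    print(json.dumps(manifest, indent=2))
```

Saving the output to a file and running `kubectl --context <cluster> apply -f <file>` against each cluster is the kind of consistency Kubernetes gives you; everything around that manifest is where the clouds diverge.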

Consistent Operations and Experience

It is very interesting to see that the big three hyperscalers, Amazon (AWS), Microsoft (Azure) and Google, are moving towards multi-cloud services and products that provide a consistent operational experience, especially for Kubernetes clusters.

Microsoft now has Azure Arc, Google provides Anthos (GKE clusters) for any cloud, and AWS has also realized that the future consists of multiple clouds and offers EKS “Anywhere”.

They have all realized that customers need centralized management and a single control plane. Customers are looking for simplified operations and a consistent experience when managing multi-cloud K8s clusters.

Tanzu Mission Control (TMC)

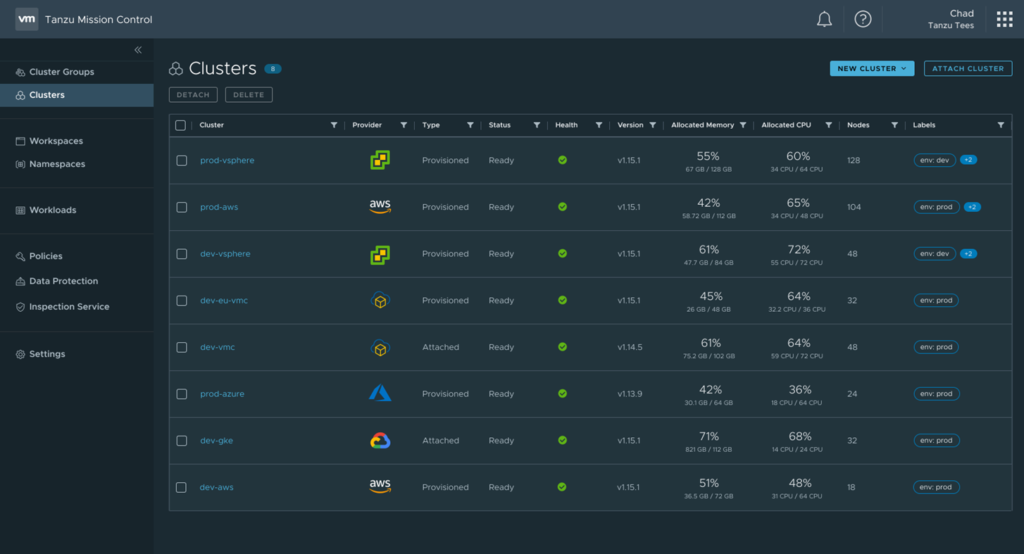

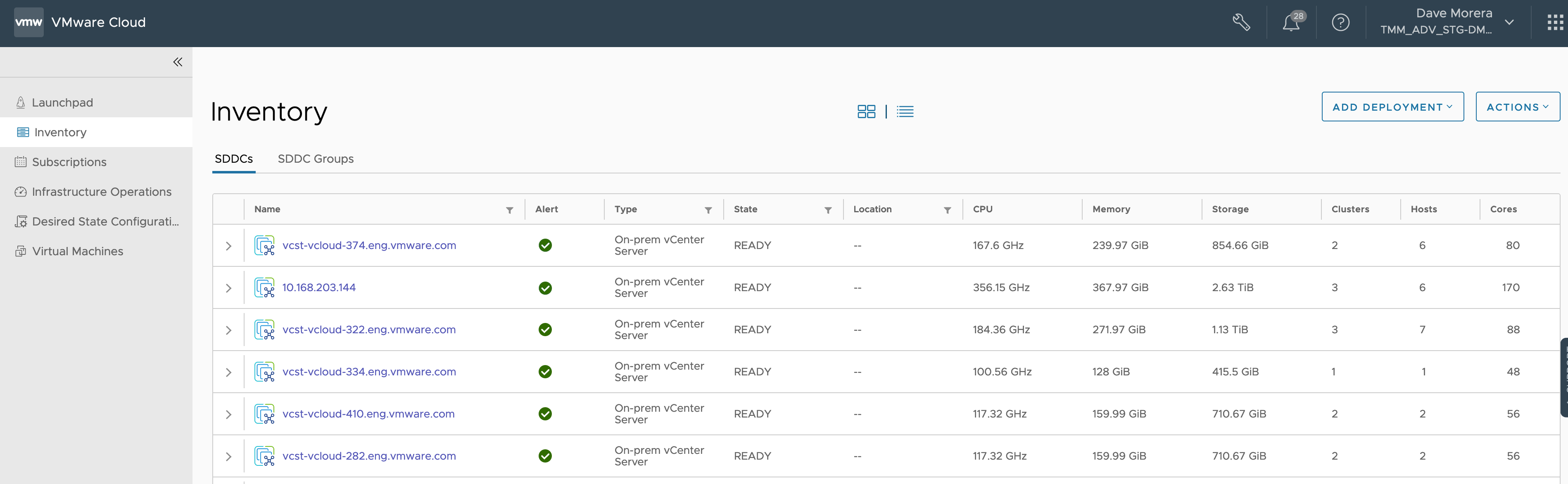

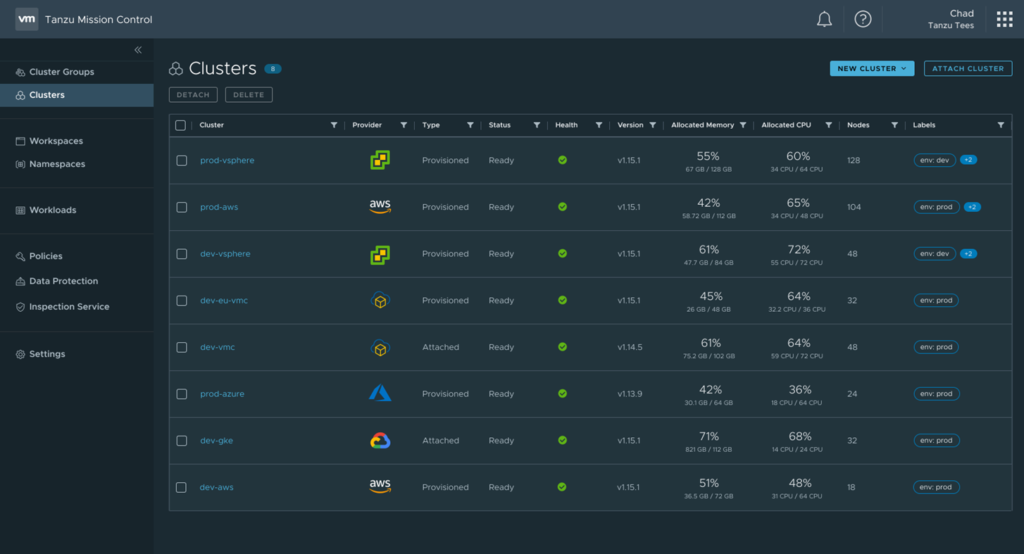

Imagine a centralized dashboard with management capabilities that provides a unified policy engine and lets you manage the lifecycle of all your different K8s clusters.

TMC offers built-in security policies and cluster inspection capabilities (CIS benchmarks), so you can apply additional controls to your Kubernetes deployments. Leveraging the open source project Velero, Tanzu Mission Control lets ops teams easily back up and restore their clusters and namespaces. Just four weeks ago, VMware announced cross-cluster backup and restore capabilities for Tanzu Mission Control, which let Kubernetes-based applications “become” infrastructure- and distribution-agnostic.
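Velero itself expresses backups and restores as Kubernetes custom resources in the `velero.io/v1` API group. The minimal sketch below builds a `Backup` of one namespace for a source cluster and a `Restore` that replays it on a second cluster. It assumes both clusters run Velero and point at the same backup storage location, which is the basic mechanism behind a cross-cluster restore. The backup, namespace and storage location names are hypothetical, and these are plain Velero objects, not the calls Tanzu Mission Control makes on your behalf.

```python
import json


def velero_backup(name: str, namespace: str, location: str = "default") -> dict:
    """Velero Backup of a single namespace (all names are hypothetical)."""
    return {
        "apiVersion": "velero.io/v1",
        "kind": "Backup",
        # Velero watches for its custom resources in its own namespace.
        "metadata": {"name": name, "namespace": "velero"},
        "spec": {
            "includedNamespaces": [namespace],
            "storageLocation": location,
            "ttl": "720h0m0s",  # keep the backup for 30 days
        },
    }


def velero_restore(backup_name: str, source_ns: str, target_ns: str) -> dict:
    """Velero Restore for the target cluster, optionally renaming the namespace."""
    return {
        "apiVersion": "velero.io/v1",
        "kind": "Restore",
        "metadata": {"name": f"{backup_name}-restore", "namespace": "velero"},
        "spec": {
            "backupName": backup_name,
            "includedNamespaces": [source_ns],
            # namespaceMapping restores source_ns under target_ns.
            "namespaceMapping": {source_ns: target_ns},
        },
    }


if __name__ == "__main__":
    # Apply the Backup on the source cluster and, once it has completed,
    # the Restore on the target cluster.
    print(json.dumps(velero_backup("shop-nightly", "web-shop"), indent=2))
    print(json.dumps(velero_restore("shop-nightly", "web-shop", "web-shop"), indent=2))
```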

Tanzu Mission Control lets you attach any CNCF-conformant K8s cluster. When attached to TMC, you can manage policies for all Kubernetes distributions such as Tanzu Kubernetes Grid (TKG), Azure Kubernetes Service, Google Kubernetes Engine or OpenShift.
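TMC’s own policy engine is configured through its console, CLI and API, none of which is shown here. To illustrate the idea of one policy evaluated uniformly across every attached cluster, the sketch below applies a hypothetical “approved registry, no privileged containers” rule to Deployment manifests collected from several clusters. The cluster names, registry prefix, rules and function names are all made up for the example; a real policy engine enforces such rules inside each cluster rather than in an offline audit script.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical policy: images must come from this registry and no container
# may run privileged. Both rules are illustrative assumptions.
ALLOWED_REGISTRY = "registry.example.com/"


@dataclass
class Violation:
    cluster: str
    workload: str
    reason: str


def check_deployment(cluster: str, manifest: dict) -> list[Violation]:
    """Evaluate one apps/v1 Deployment manifest against the policy."""
    name = manifest["metadata"]["name"]
    found = []
    for c in manifest["spec"]["template"]["spec"]["containers"]:
        if not c["image"].startswith(ALLOWED_REGISTRY):
            found.append(Violation(cluster, name, f"unapproved image {c['image']}"))
        if c.get("securityContext", {}).get("privileged", False):
            found.append(Violation(cluster, name, f"privileged container {c['name']}"))
    return found


def audit(clusters: dict[str, list[dict]]) -> list[Violation]:
    """Apply the same policy to the workloads of every attached cluster."""
    return [
        violation
        for cluster, manifests in clusters.items()
        for manifest in manifests
        for violation in check_deployment(cluster, manifest)
    ]


def sample_deployment(name: str, image: str, privileged: bool = False) -> dict:
    """Hypothetical fixture: a trimmed-down Deployment manifest."""
    container = {"name": name, "image": image}
    if privileged:
        container["securityContext"] = {"privileged": True}
    return {
        "metadata": {"name": name},
        "spec": {"template": {"spec": {"containers": [container]}}},
    }


if __name__ == "__main__":
    fleet = {
        "tkg-onprem": [sample_deployment("shop", "registry.example.com/shop:1.0")],
        "eks-prod": [sample_deployment("cart", "docker.io/library/cart:2", privileged=True)],
    }
    for v in audit(fleet):
        print(f"[{v.cluster}] {v.workload}: {v.reason}")
```

The point of the design is that the rule is defined once and the cluster (TKG, EKS, AKS, GKE or OpenShift) is just a key in the input.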

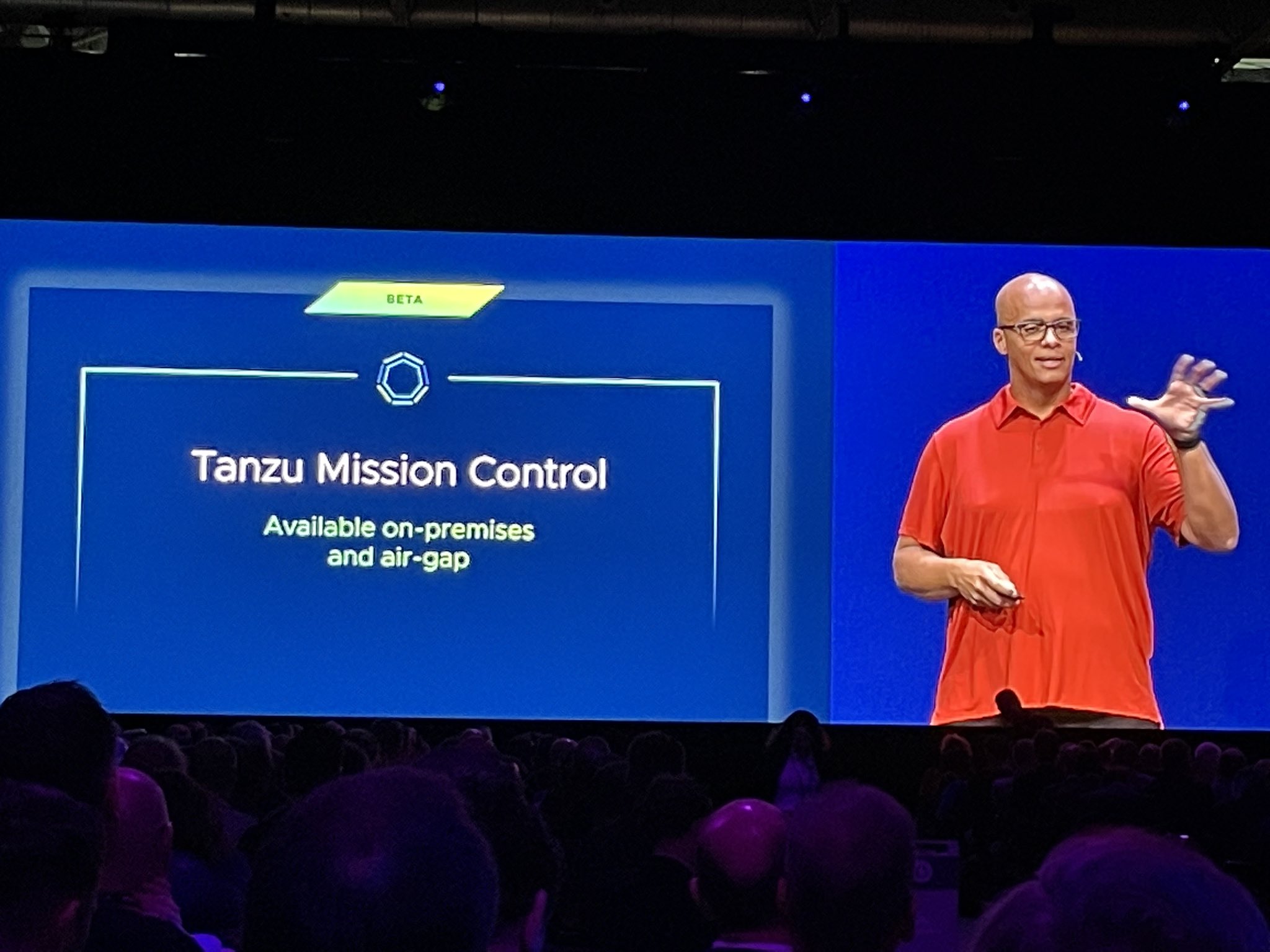

As part of VMware’s ongoing commitment to supporting customers in their multi-cloud application modernization efforts, the Tanzu Mission Control team introduced a preview of lifecycle management for Amazon EKS clusters at VMware Explore US 2022:

Preview for lifecycle management of Amazon Elastic Kubernetes Service (EKS) clusters can enable direct provisioning and management of Amazon EKS clusters so that developers and operators have less friction and more choices for cluster types. Teams will be able to simplify multi-cloud, multi-cluster Kubernetes management with centralized lifecycle management of Tanzu Kubernetes Grid and Amazon EKS cluster types.

Note: With this announcement I would expect that the support for Azure Kubernetes Service (AKS) is also coming soon.

Read the Tanzu Mission Control solution brief to get more information about its benefits and capabilities.

Challenges for Developers

Tanzu Mission Control provides cross-cloud services for your Kubernetes clusters deployed in multiple clouds. But there is still another problem.

Developers are being asked to write code and business logic that can run on-prem, on AWS, on Azure or on any other public cloud. Every cloud provider has an interest in offering you its own technologies and services. This includes the hosted Kubernetes offerings (with different Kubernetes distributions), load balancers, storage, databases, APIs, observability, security tools and so many other components. To me, it sounds very painful and difficult to learn and understand the details of every cloud provider.

Cross-cloud services alone don’t solve that problem. Obviously, Kubernetes doesn’t solve it either.

What if Kubernetes and centralized management and visibility are not “the” solution but rather something that sits on top of Kubernetes?

And Then Came PaaS

Kubernetes is a platform for building platforms and is not really meant to be used by developers.

The CNCF landscape is huge and complex to understand and integrate, so it was only logical that companies started looking for pre-assembled solutions like platform as a service (PaaS). I think that Tanzu Application Service (formerly known as Pivotal Cloud Foundry), Heroku, Red Hat OpenShift and AWS Elastic Beanstalk are the most famous examples of PaaS.

The challenge with building applications that run on a PaaS is that you sometimes need to use all the PaaS-specific components to make full use of it. What if someone wants to run her own database? What if the PaaS offering restricts programming languages, frameworks or libraries? Or is it the vendor lock-in that bothers you?

PaaS solutions alone don’t seem to deliver the missing developer experience for everyone either.

Do you want to build the platform by yourself or get something off the shelf? There is a big difference between using a platform and running one. 🙂

Bring Your Own Kubernetes To A Portable PaaS

What’s next after IaaS has evolved to CaaS (because of Kubernetes) and PaaS? It is adPaaS (Application Developer PaaS).

Have you ever heard of the “Golden Path“? Spotify uses this term and Netflix calls it “Paved Road“.

The idea behind the golden path or paved road is that the (internal) platform offers pre-assembled components and a supported approach (best practices) that make software development faster and more scalable. Developers don’t have to reinvent the wheel by browsing through a very fragmented ecosystem of developer tooling, where the best way to find out how to do things is to ask the community or your colleagues.

VMware announced Tanzu Application Platform (TAP) in September 2021 with the promise that TAP would provide a better developer experience on any Kubernetes.

VMware Tanzu Application Platform delivers a prepaved path to production and a streamlined, end-to-end developer experience on any Kubernetes.

It is the platform team’s duty to install and configure the opinionated Tanzu Application Platform as an overlay on top of any Kubernetes cluster. They also integrate existing components of Kubernetes such as storage and networking. An opinionated platform provides the structure and abstraction you are looking for: The platform “does” it for you. In other words, TAP is a prescribed architecture and path with the necessary modularity and flexibility to boost developer productivity.

Developers can focus on writing code and do not have to fully understand details like container image registries, image building and scanning, ingress, RBAC, or deploying and running the application.
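The developer-facing contract can be very small. With Cartographer (one of the projects TAP builds on), a developer typically submits a `Workload` resource that points at a Git repository, and a label selects which supply chain the platform team has prepared. The sketch below builds such a resource. The app name and repository URL are hypothetical, and the `apps.tanzu.vmware.com/workload-type: web` label assumes the platform team installed a supply chain that selects on it.

```python
import json


def workload(name: str, git_url: str, branch: str = "main") -> dict:
    """Cartographer Workload: roughly what a developer hands to the platform."""
    return {
        "apiVersion": "carto.run/v1alpha1",
        "kind": "Workload",
        "metadata": {
            "name": name,
            "labels": {
                # Assumed label that selects the platform team's "web" supply chain.
                "apps.tanzu.vmware.com/workload-type": "web",
                "app.kubernetes.io/part-of": name,
            },
        },
        "spec": {
            # Only a pointer to the source code; building, scanning and
            # deploying are the supply chain's job.
            "source": {"git": {"url": git_url, "ref": {"branch": branch}}},
        },
    }


if __name__ == "__main__":
    print(json.dumps(workload("web-shop", "https://git.example.com/team/web-shop.git"), indent=2))
```

Registries, image builds, scanning, ingress and RBAC never appear in this object; they live in the supply chain the platform team owns.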

TAP comes with many popular best-of-breed open source projects that are improving the DevSecOps experience:

- Backstage. Backstage is an open platform for building developer portals, created at Spotify, donated to the CNCF, and maintained by a worldwide community of contributors.

- Carvel. Carvel provides a set of reliable, single-purpose, composable tools that aid in your application building, configuration, and deployment to Kubernetes.

- Cartographer. Cartographer is a VMware-backed project and is a Supply Chain Choreographer for Kubernetes. It allows App Operators to create secure and pre-approved paths to production by integrating Kubernetes resources with the elements of their existing toolchains (e.g. Jenkins).

- Tekton. Tekton is a cloud-native, open source framework for creating CI/CD systems. It allows developers to build, test, and deploy across cloud providers and on-premises systems.

- Grype. Grype is a vulnerability scanner for container images and file systems.

- Cloud Native Runtimes for VMware Tanzu. Cloud Native Runtimes for Tanzu is a serverless application runtime for Kubernetes that is based on Knative and runs on a single Kubernetes cluster.
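A scanner like Grype is easiest to picture as a gate on the path to production. Grype can emit its findings as JSON (`grype <image> -o json`), listing each finding under `matches` with a `vulnerability` (including its `severity`) and the affected `artifact`. The minimal sketch below parses such a report and blocks promotion at or above a chosen severity. The `High` threshold and the function names are assumptions for illustration; Grype also has a built-in `--fail-on` flag, and in TAP the scan step is wired into the supply chain rather than called by hand.

```python
from __future__ import annotations

import json

# Grype severity labels, lowest to highest; unrecognized labels rank lowest here.
SEVERITY_ORDER = ["Unknown", "Negligible", "Low", "Medium", "High", "Critical"]


def blocking_findings(report: dict, fail_on: str = "High") -> list[str]:
    """List 'ID (package@version)' for findings at or above fail_on."""
    threshold = SEVERITY_ORDER.index(fail_on)
    blocked = []
    for match in report.get("matches", []):
        vuln = match["vulnerability"]
        severity = vuln.get("severity", "Unknown")
        rank = SEVERITY_ORDER.index(severity) if severity in SEVERITY_ORDER else 0
        if rank >= threshold:
            artifact = match["artifact"]
            blocked.append(f"{vuln['id']} ({artifact['name']}@{artifact['version']})")
    return blocked


def may_promote(report_json: str, fail_on: str = "High") -> bool:
    """True if the scanned image may move on to the next supply chain stage."""
    return not blocking_findings(json.loads(report_json), fail_on)


if __name__ == "__main__":
    # A trimmed, hypothetical report in the shape of `grype <image> -o json`.
    sample = json.dumps({"matches": [
        {"vulnerability": {"id": "CVE-0000-0001", "severity": "Critical"},
         "artifact": {"name": "openssl", "version": "1.1.1"}},
        {"vulnerability": {"id": "CVE-0000-0002", "severity": "Low"},
         "artifact": {"name": "zlib", "version": "1.2.11"}},
    ]})
    print(may_promote(sample))
```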

At VMware Explore US 2022, VMware announced new capabilities that will be released in Tanzu Application Platform 1.3. The most important added functionalities for me are:

- Support for Red Hat OpenShift. Tanzu Application Platform 1.3 will be available on Red Hat OpenShift, running on vSphere and on bare metal.

- Support for air-gapped installations. Support for regulated and disconnected environments, helping to ensure that the components, upgrades, and patches are made available to the system and that they operate consistently and correctly in the controlled environment and keep data secure.

- Carbon Black Integration. Tanzu Application Platform expands the ecosystem of supported vulnerability scanners with a beta integration with VMware Carbon Black scanner to enable customer choice and leverage their existing investments in securing their supply chain.
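For the air-gapped installations mentioned above, every container image the platform needs must be copied into a registry inside the controlled environment, and every image reference must point there. Carvel’s `imgpkg` handles the copy (for example `imgpkg copy` with `--to-tar` on the connected side and `--tar` plus `--to-repo` on the disconnected side). The sketch below only illustrates the reference rewriting, using a simple, hypothetical path-preserving naming scheme; `imgpkg` itself names relocated images differently, and `harbor.internal` is a made-up mirror host.

```python
from __future__ import annotations


def split_registry(image: str) -> tuple[str, str]:
    """Split an image reference into (registry host, repository path + tag/digest)."""
    first, _, rest = image.partition("/")
    # The first path component is a registry host if it looks like one.
    if rest and ("." in first or ":" in first or first == "localhost"):
        return first, rest
    # Otherwise it is a Docker Hub reference such as "nginx:1.25" or "bitnami/redis".
    return "docker.io", (image if "/" in image else f"library/{image}")


def relocate(image: str, mirror: str) -> str:
    """Point an image reference at an internal mirror (hypothetical naming scheme)."""
    _, path = split_registry(image)
    return f"{mirror}/{path}"


if __name__ == "__main__":
    for ref in ["nginx:1.25", "bitnami/redis:7.0", "gcr.io/my-project/app:1.2.3"]:
        print(ref, "->", relocate(ref, "harbor.internal"))
```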

The Power Combo for Multi-Cloud

A mix of different workloads, like virtual machines and containers, hosted in multiple clouds introduces complexity. With the powerful combination of Tanzu Mission Control and Tanzu Application Platform, companies can unlock the full potential of their platform teams and developers by reducing complexity while creating and using abstraction layers on top of their multi-cloud infrastructure.