Distributed Hybrid Infrastructure Offerings Are The New Multi-Cloud

Since VMware became part of Broadcom, there has been less focus and messaging on multi-cloud or supercloud architectures. Broadcom has drastically changed the available offerings, and VMware Cloud Foundation is becoming the new vSphere. Additionally, we have seen big changes regarding the partnerships with hyperscalers (the Azures and AWSes of this world) and the VMware Cloud partners and providers. So, what happened to multi-cloud, and how come nobody (at Broadcom) talks about it anymore?

What is going on?

I do not know if it’s only me, but I do not see the term “multi-cloud” that often anymore. Do you? My LinkedIn feed is full of news about artificial intelligence (AI) and how Nvidia employees got rich. So, I have to admit that I lost track of hybrid clouds, multi-clouds, or hybrid multi-cloud architectures.

Cloud-Inspired and Cloud-Native Private Clouds

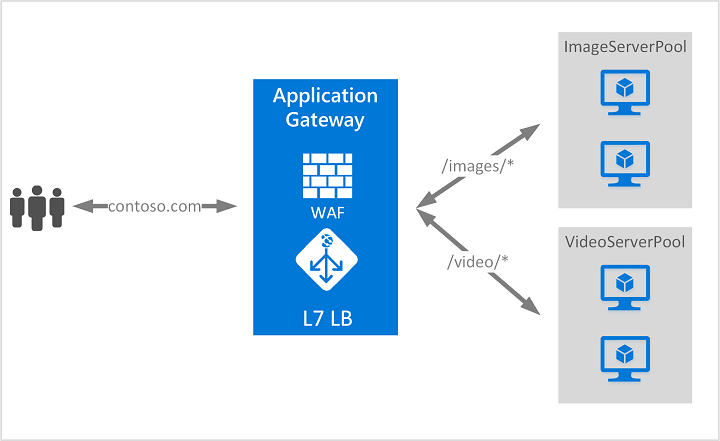

It seems to me that the initial idea of multi-cloud has changed in the meantime and that private clouds are becoming platforms with features. Let me explain.

Organizations have built monolithic private clouds in their data centers for a long time. In software engineering, the word “monolithic” describes an application whose components are tightly coupled and are built and deployed as a single unit. We followed the same approach to build data centers, combining different components like compute, storage, and networking. Over time, IT teams started to think about automation and security, and about integrating different solutions from different vendors.

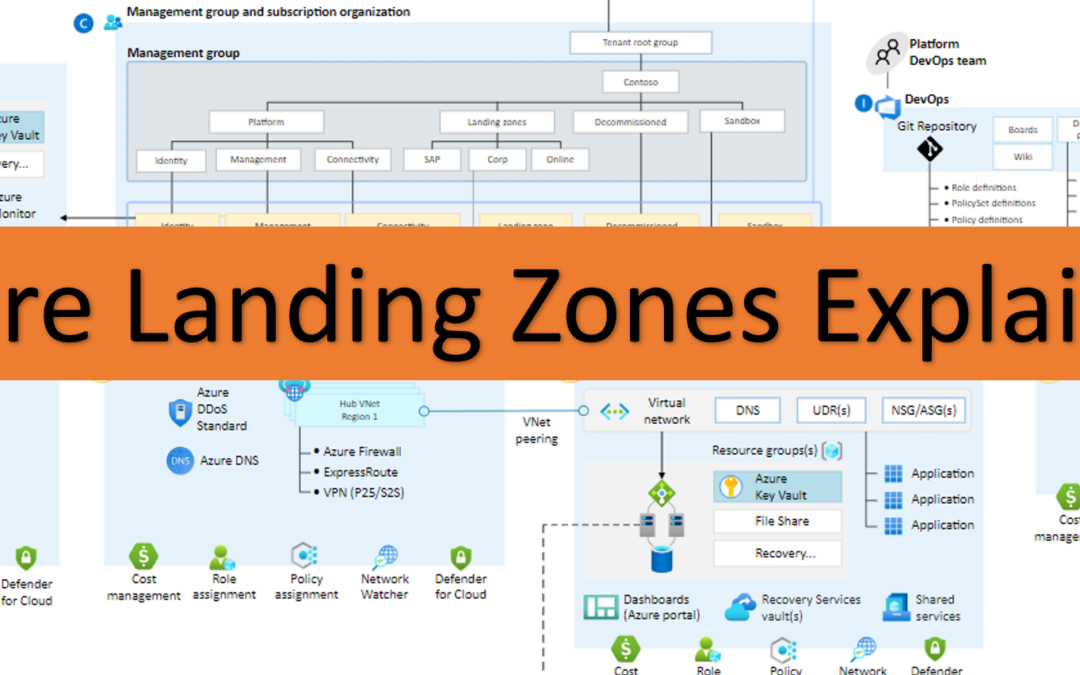

The VMware messaging always pointed in the right direction: provide a cloud operating system for any hardware and any cloud (by using VMware Cloud Foundation) and, on top of that, build abstraction layers and leverage a unified control plane (aka consistent automation and operations).

I have been telling all my customers since 2020 that they need to think like a cloud service provider, get rid of silos, implement new processes, and define a new operating model. That is VMware by Broadcom’s messaging today, and this is where they and other vendors are headed: a platform with features that provide cloud services.

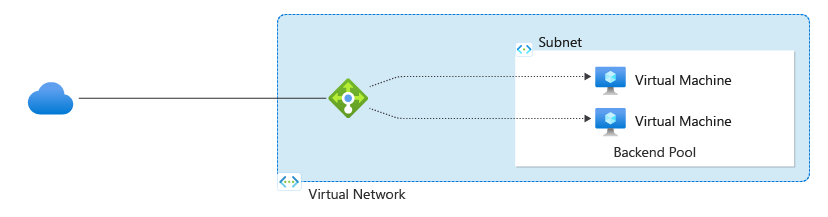

In other words, and this is my opinion, VMware Cloud Foundation is today a platform made up of different components like vSphere, vSAN, NSX, Aria, and so on. Tomorrow, it will still be called VMware Cloud Foundation: a platform that includes compute, storage, networking, automation, operations, and other features. No more separate product names, just capabilities and services like IaaS, CaaS, DRaaS, or DBaaS. You just choose the specs of the underlying hardware and networking, deploy your private clouds, and then start to build and consume your services.

Replace the name “VMware Cloud Foundation” in the last paragraph with AWS Outposts or Azure Stack. Do you see it now? Distributed unmanaged and managed hybrid cloud offerings with a (service) consumption interface on top.

That is the shift from monolithic data centers to cloud-native private clouds.

From Intercloud to Multi-Cloud

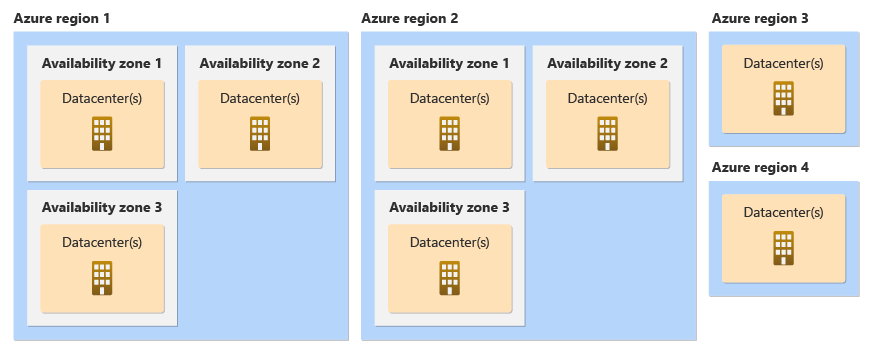

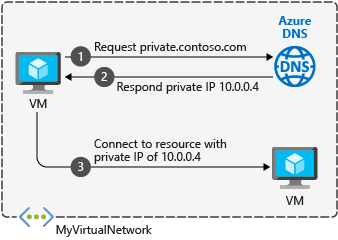

This is not the first time I have written about interclouds, a concept that not many of us know. In 2012, there was this idea that different clouds and vendors need to be interoperable and agree on certain standards and protocols. Think about interconnected private and public clouds, which allow you to provide VM mobility or application portability. Can you see the picture in front of you? What is the difference today in 2024?

In 2023, I truly believed that VMware had figured it out when they announced VMware Cloud on Equinix Metal (VMC-E). To me, VMC-E was different and special because of Equinix, which can interconnect different clouds and, at the same time, provide a bare-metal-as-a-service (BMaaS) offering.

Workload Mobility and Application Portability

Almost two years ago, I started to write a book about this topic, because I wanted to figure out whether workload mobility and application portability are things that enterprises are really looking for. I interviewed many CIOs, CTOs, chief architects, and engineers around the globe, and it became VERY clear: it seems nobody was changing anything to make app portability a design requirement.

Almost all of the people I spoke to told me that a lot would have to happen to trigger a cloud exit. They therefore see this as a nice-to-have capability that helps them move virtual machines or applications faster from one cloud to another.

I have also been told that a lift & shift approach provides no value to almost all of them.

But when I talked to developers and operations teams, the answers changed. Most of them did not know that a vendor could provide mobility or portability. Anyway, what has changed now?

Interconnected Multi-Clouds and Distributed Hybrid Clouds

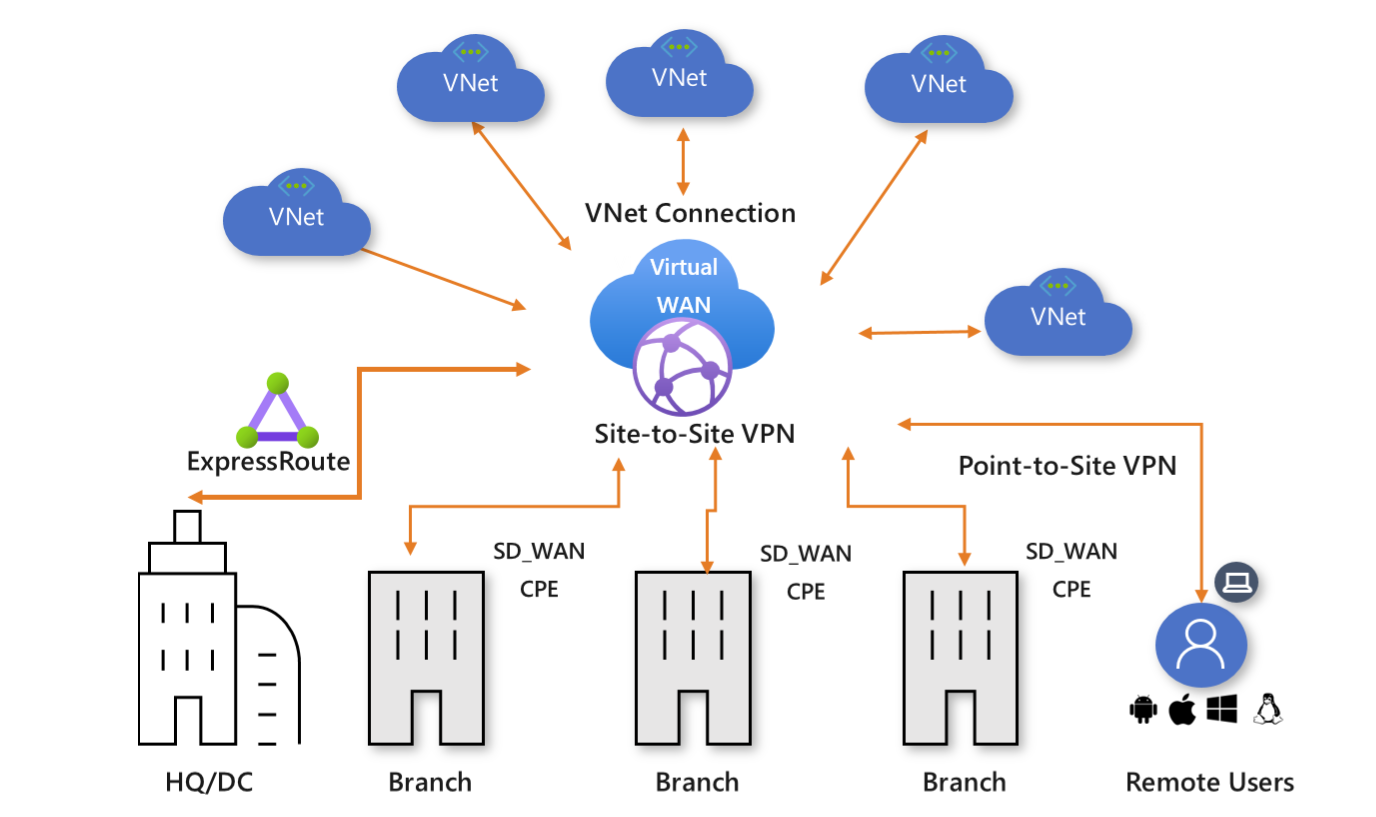

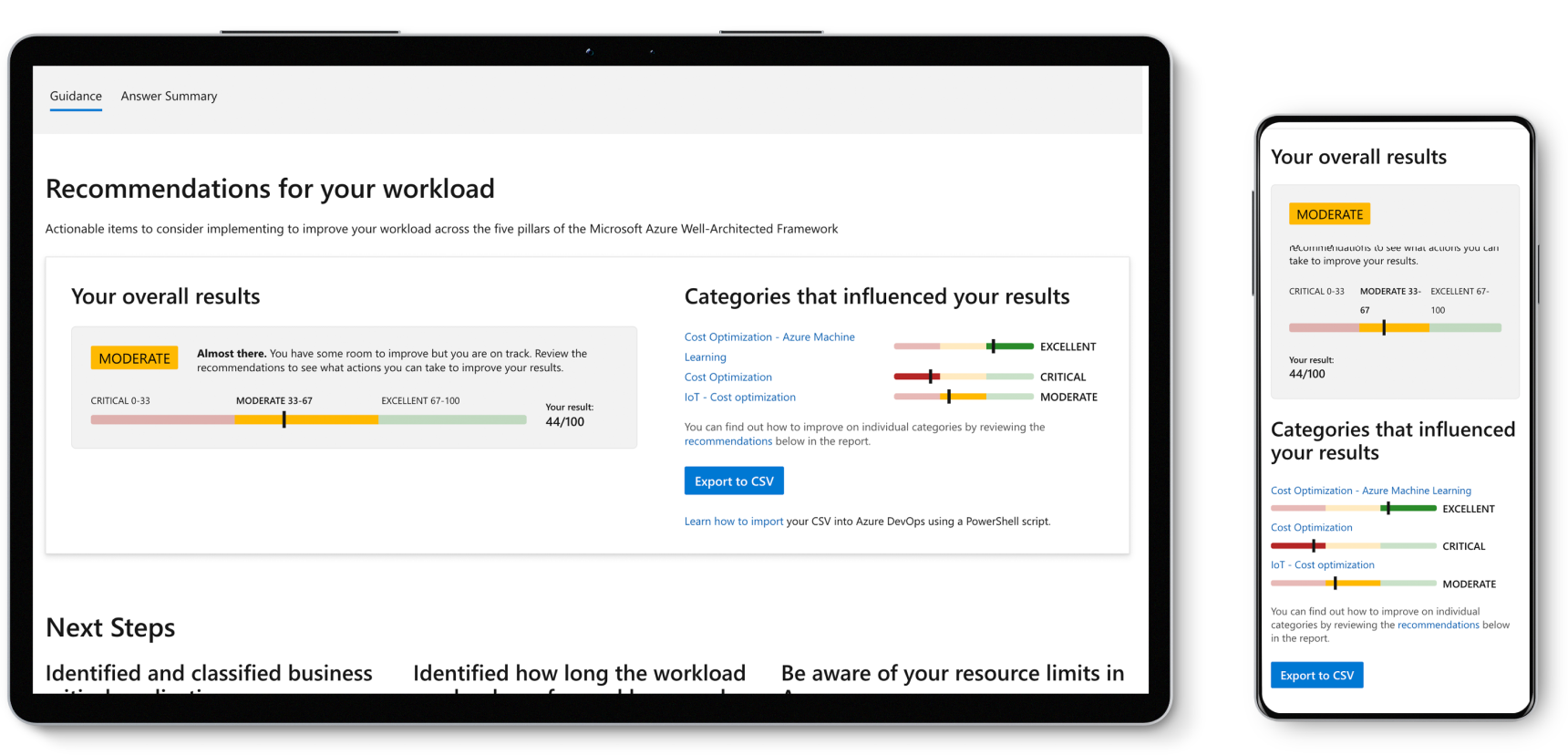

I mentioned it before: some vendors have realized that they need to deliver a unified, integrated, and programmable platform with a control plane. Ideally, this control plane can be used on-premises, as a SaaS solution, or both. And according to Gartner, these are the leaders in this area (Magic Quadrant for Distributed Hybrid Infrastructure):

In my opinion, VMware and Nutanix are providing a hybrid multi-cloud approach.

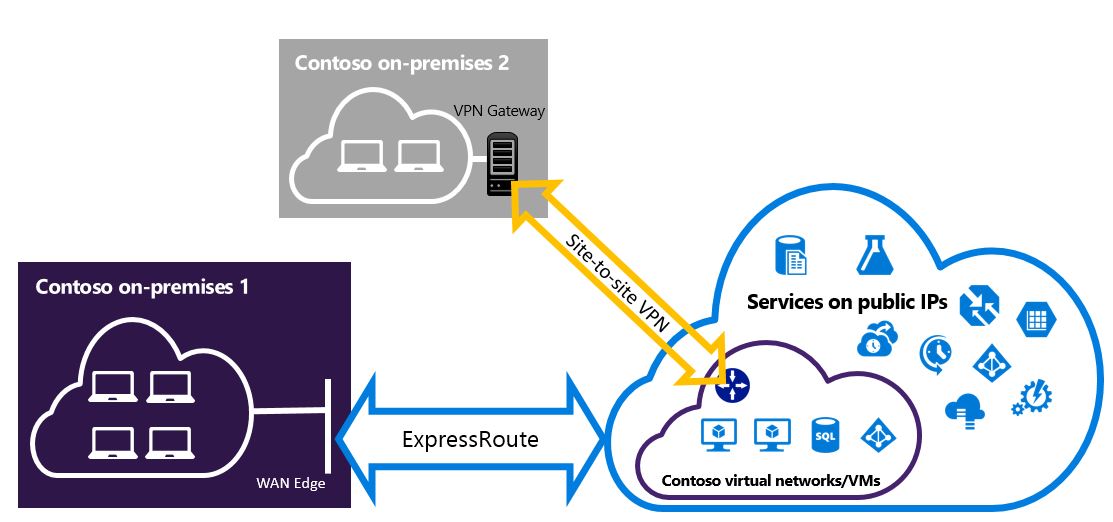

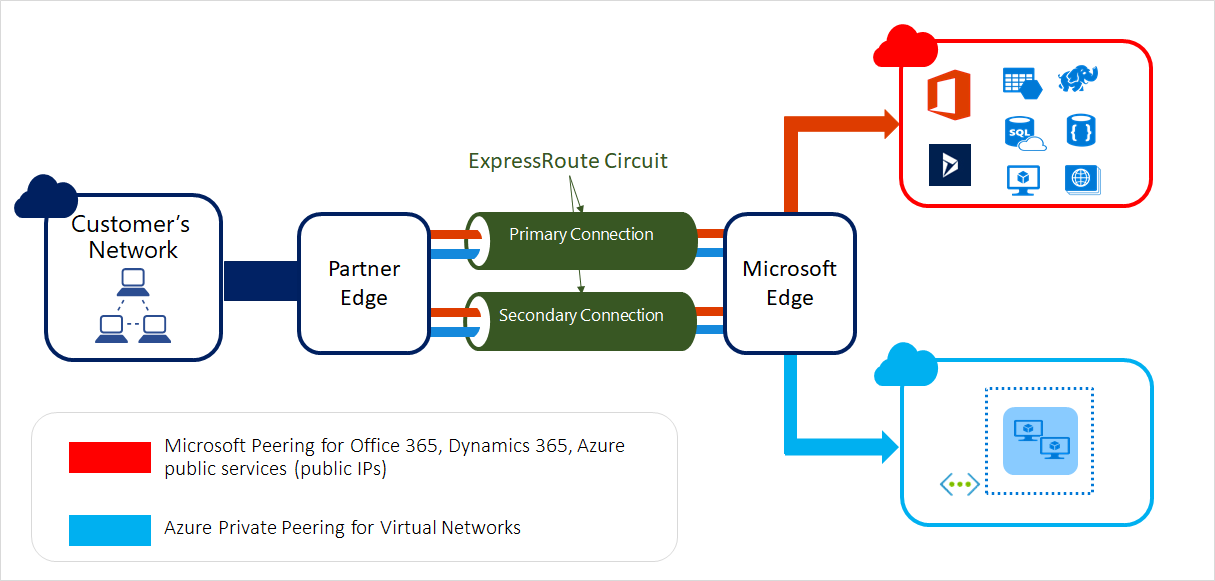

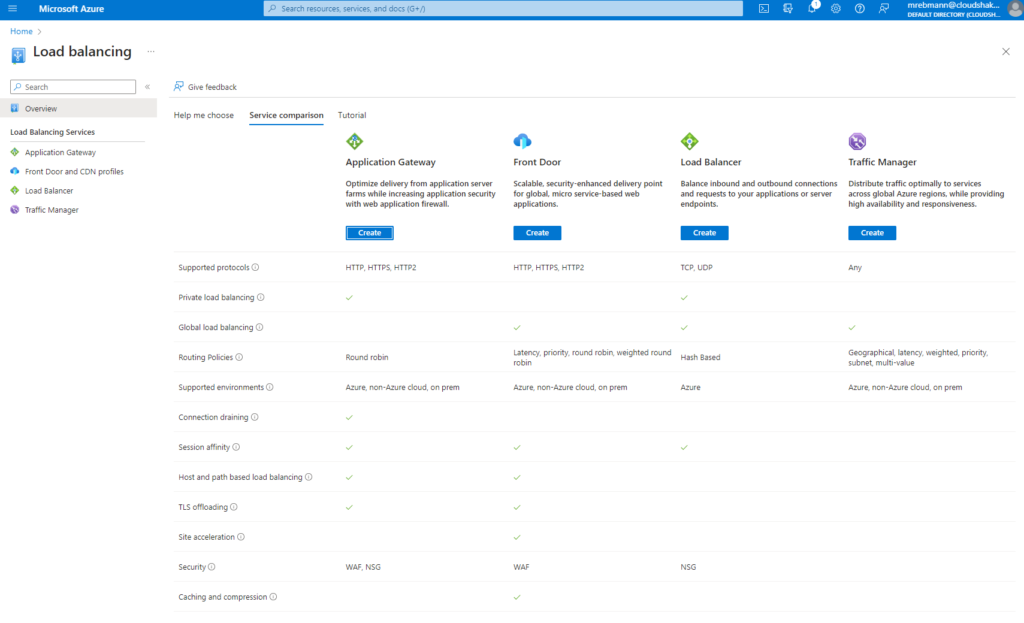

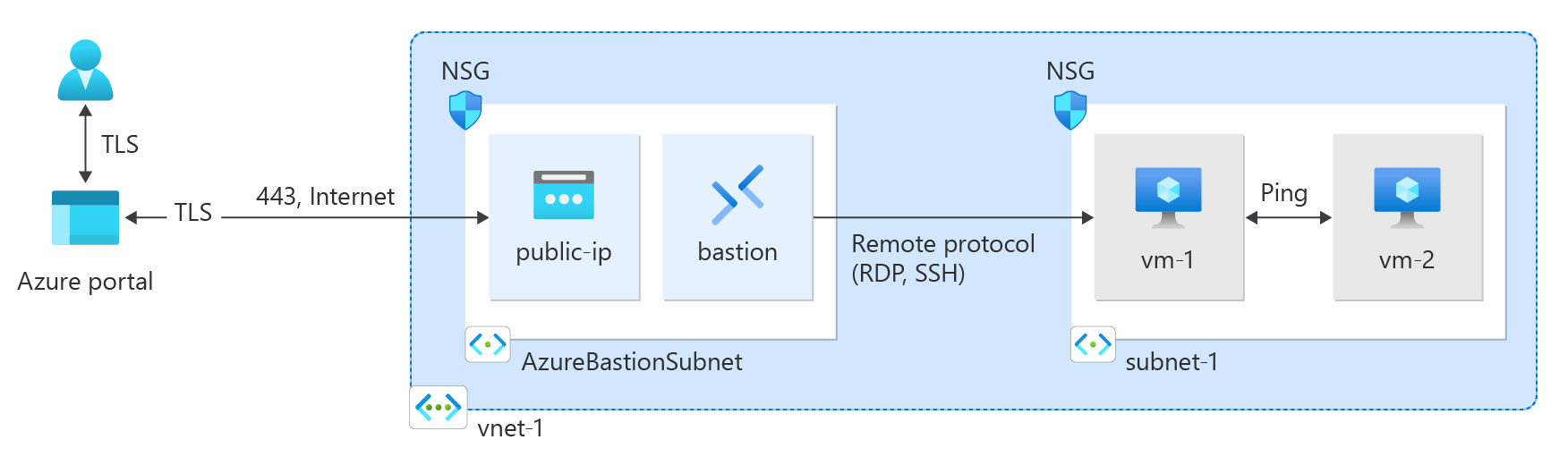

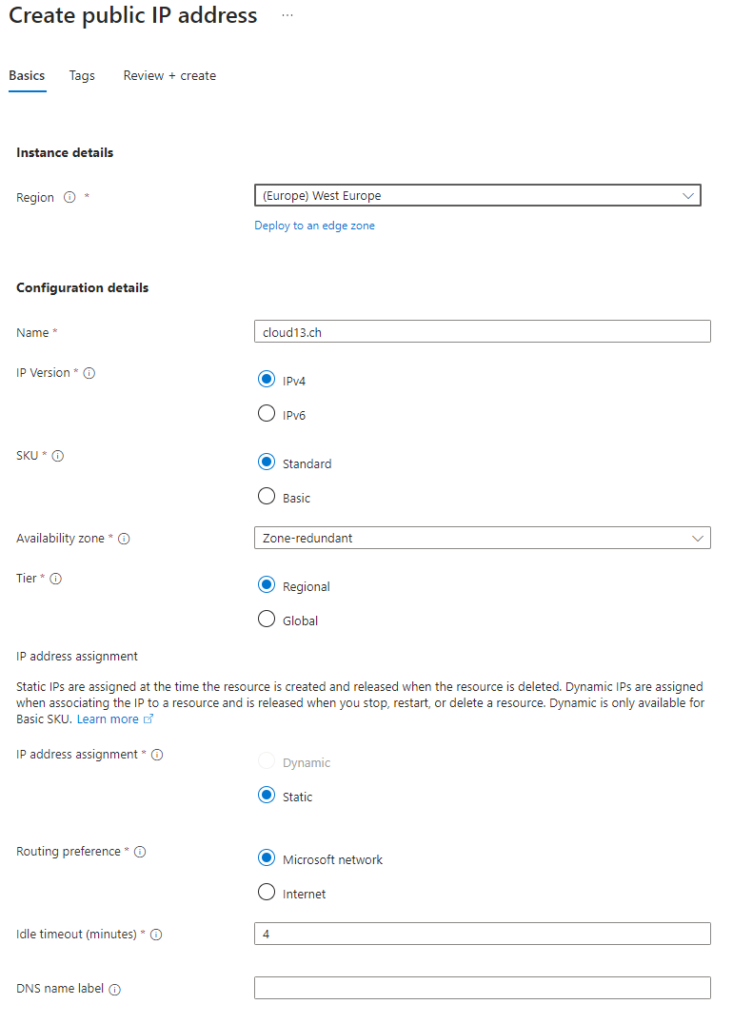

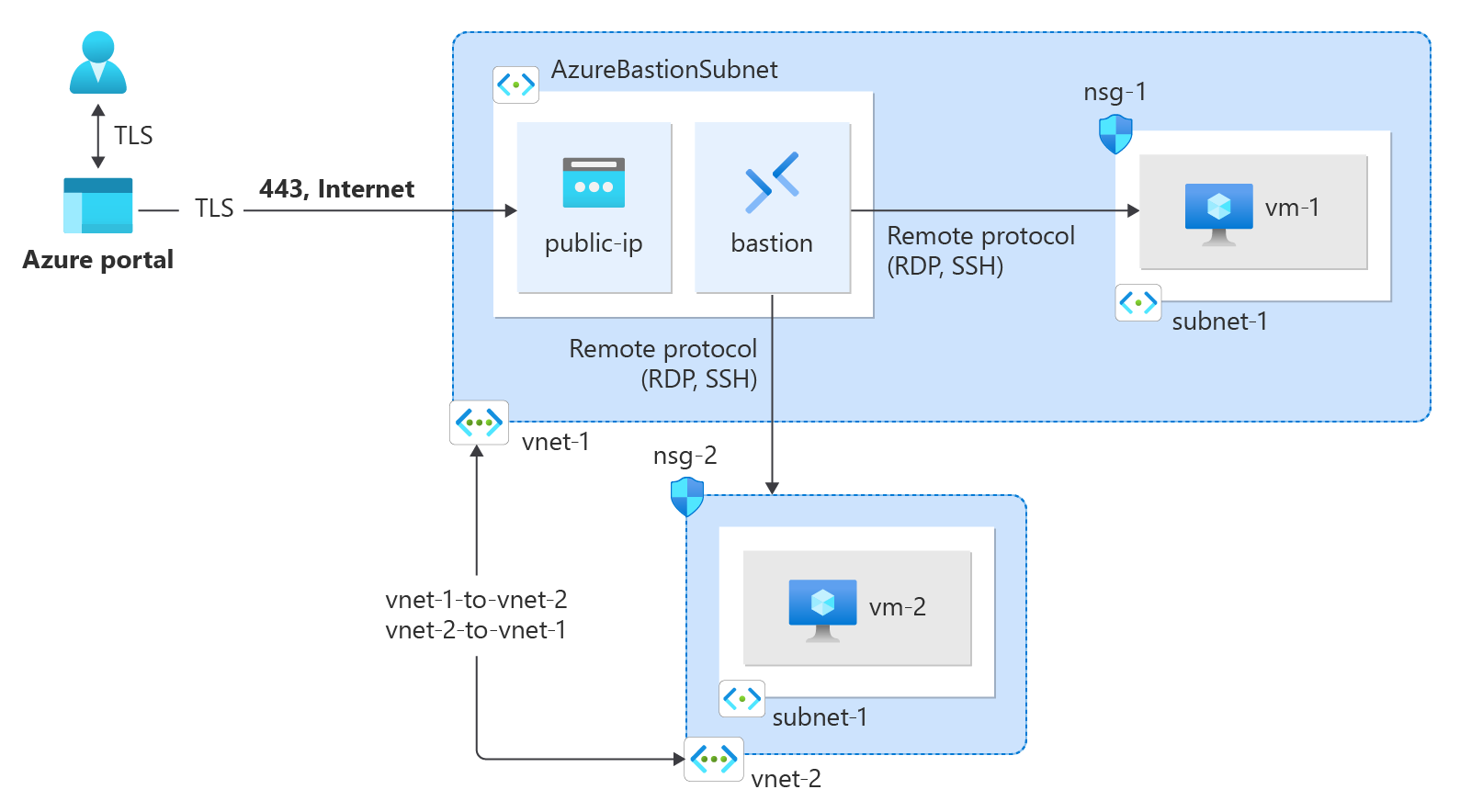

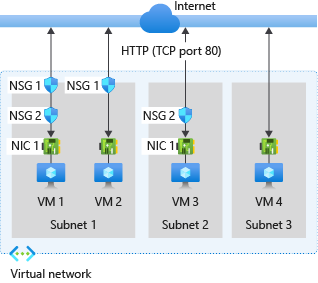

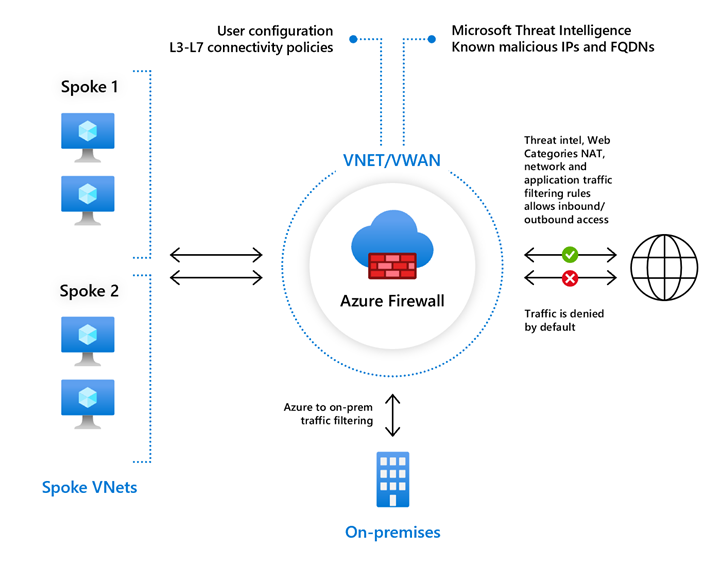

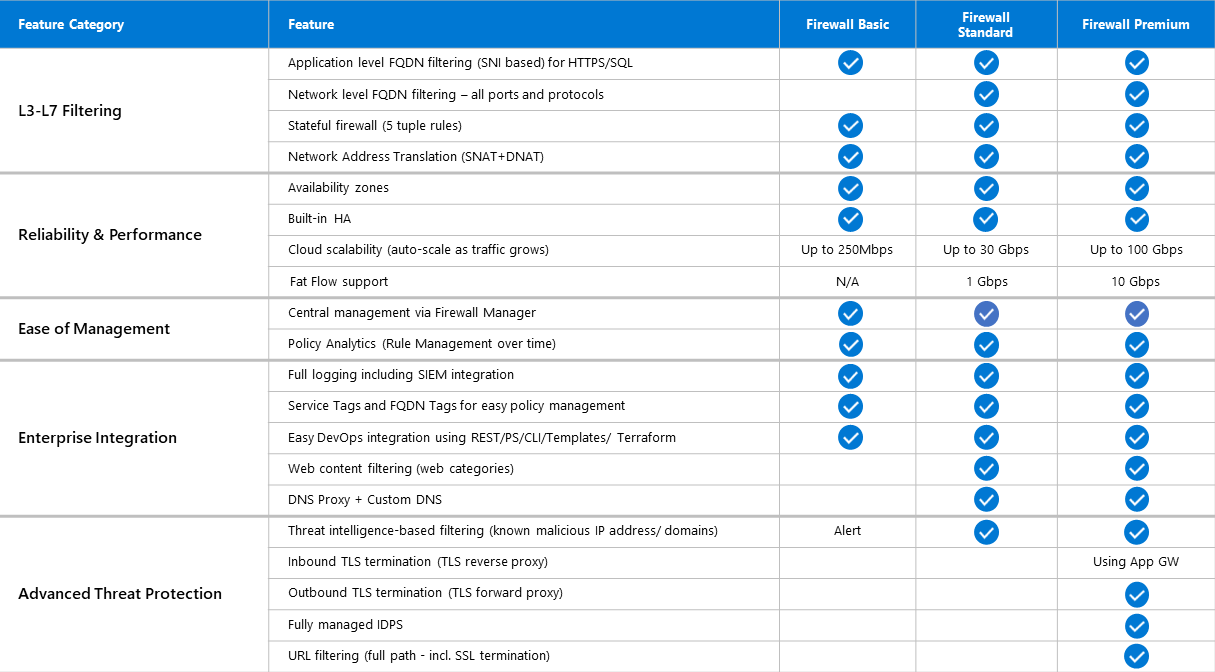

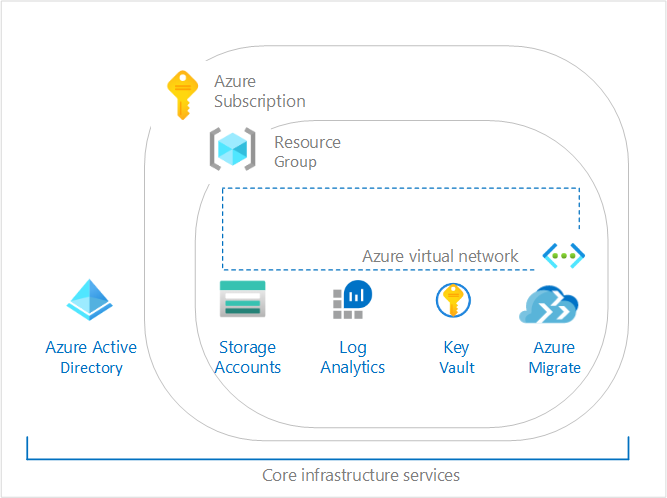

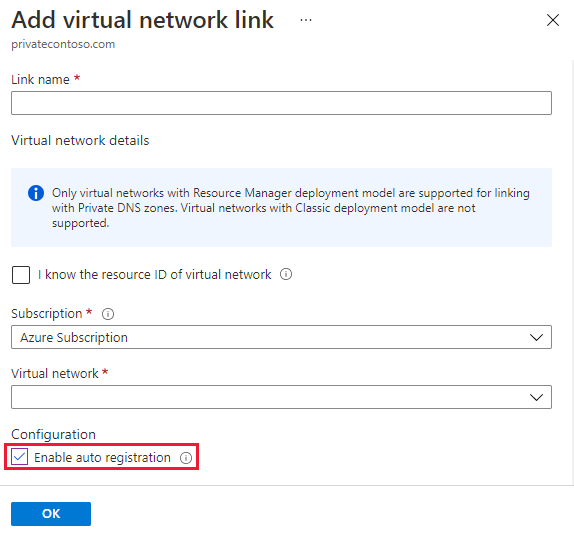

AWS and Microsoft are providing hybrid cloud solutions. In Microsoft’s case, we see Azure Stack HCI, Azure Kubernetes Service (AKS incl. Hybrid AKS) and Azure Arc extending Microsoft’s Azure services to on-premises data centers and edge locations.

The only vendor that currently offers true multi-cloud capabilities is Oracle. Oracle has Dedicated Region Cloud@Customer (DRCC) and Roving Edge, but also partnerships with Microsoft and Google that allow customers to host Oracle databases in Azure and Google Cloud data centers. Both partnerships come with a cross-cloud interconnection.

That is one of the big differences and changes for me at the moment. Multi-cloud has become less about mobility or portability, a single global control plane, or the same Kubernetes distribution in all the clouds, and more about bringing different services from different cloud providers closer together.

This is the image I created for the VMC-E blog. Replace the words “AWS” and “Equinix” with “Oracle” and suddenly you have something that was not there before, an interconnected multi-cloud.

What’s Next?

Based on the conversations with my customers, it does not feel as if public cloud migrations are happening faster than in 2020 or 2022, and we still see between 70% and 80% of the workloads hosted on-premises. While we see customers who are interested in a cloud-first approach, we see many following a hybrid and/or multi-cloud approach. It is still about putting the right applications in the right cloud based on the right decisions. This has not changed.

But the narrative of such conversations has changed. We will see more conversations about data residency, privacy, security, gravity, proximity, and regulatory requirements. Then there are sovereign clouds.
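To make "the right applications in the right cloud" a bit more tangible, here is a minimal Python sketch of how such requirements could be turned into a placement check. Everything in it is an assumption for illustration only: the `Location` and `Workload` types, the attribute names, the example locations, and the numbers are hypothetical and do not come from any vendor's product or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Location:
    """A hypothetical place to run workloads (private or public cloud)."""
    name: str
    region: str        # country where the data physically resides
    sovereign: bool    # operated under local jurisdiction only
    latency_ms: float  # round-trip time to the workload's main data set


@dataclass(frozen=True)
class Workload:
    """A hypothetical workload with hard placement requirements."""
    name: str
    allowed_regions: frozenset  # data residency / regulatory constraint
    needs_sovereign: bool       # sovereignty constraint
    max_latency_ms: float       # data gravity / proximity constraint


def eligible(workload, locations):
    """Return locations that satisfy every hard requirement, closest first."""
    matches = [
        loc for loc in locations
        if loc.region in workload.allowed_regions
        and (loc.sovereign or not workload.needs_sovereign)
        and loc.latency_ms <= workload.max_latency_ms
    ]
    return sorted(matches, key=lambda loc: loc.latency_ms)


# Illustrative values only, not measurements.
LOCATIONS = [
    Location("on-prem-private-cloud", "CH", True, 1.0),
    Location("public-cloud-region-a", "CH", False, 8.0),
    Location("public-cloud-region-b", "US", False, 95.0),
]

patient_db = Workload("patient-db", frozenset({"CH"}), True, 5.0)
web_shop = Workload("web-shop", frozenset({"CH", "US"}), False, 50.0)

for wl in (patient_db, web_shop):
    print(wl.name, "->", [loc.name for loc in eligible(wl, LOCATIONS)])
```

In this sketch, residency, sovereignty, and proximity are treated as hard filters rather than weighted scores, because regulatory requirements usually cannot be traded off against cost or convenience. A real decision would of course involve many more criteria.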

Lastly, enterprises are going to deploy new platforms for AI-based workloads. But that could still take a while.

Final Thoughts

As enterprises continue to navigate the above-mentioned complexities, the need for flexible, scalable, and secure infrastructure solutions will only grow. There are a few compelling solutions that bridge the gap between traditional on-premises systems and modern cloud environments.

And since most enterprises are still hosting their workloads on-premises, they have to decide whether they want to stretch the private cloud to the public cloud, or the other way around. Both options can co-exist, but combining them would make the environment too big and too complex. What’s your conclusion?
